Tailslayer slashes 99th-percentile DRAM latency by 79% on average, at a cost of just 1.4% area overhead and a 0.6% power increase. Researchers from Carnegie Mellon and ETH Zurich unveiled the technique at ASPLOS 2024, targeting a persistent pain point in hyperscale data centers: slow memory accesses that violate service level objectives (SLOs).

DRAM tail latency matters because it dictates real-world performance in cloud environments. Average DRAM access times hover around 60-80 nanoseconds for DDR4, but the 99th percentile can spike to 600 ns or more, roughly 8-10x the average, due to row buffer misses, refresh interference, or bank conflicts. Google reported in 2013 that such tails caused 34% of RPC latencies to exceed targets. Modern workloads like AI training and web serving amplify the problem: a single slow memory bank can stall an entire thread, inflating p99 metrics and triggering costly scaling or SLA penalties.
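To make the gap between average and tail concrete, here is a minimal Python sketch. The 70 ns and 600 ns values are taken from the ranges quoted above; the 2% share of slow accesses, the sample count, and the `fanout` values are illustrative assumptions, not measurements from any system.

```python
import math

def percentile(samples, p):
    """Nearest-rank percentile: smallest value with at least p% of samples at or below it."""
    ordered = sorted(samples)
    rank = math.ceil(p / 100 * len(ordered))  # 1-based rank
    return ordered[max(rank, 1) - 1]

# Assumed synthetic mix: 98% fast accesses (70 ns), 2% slow ones (600 ns).
latencies_ns = [70] * 9800 + [600] * 200

mean_ns = sum(latencies_ns) / len(latencies_ns)
p50_ns = percentile(latencies_ns, 50)
p99_ns = percentile(latencies_ns, 99)

def prob_any_tail(fanout, tail_fraction=0.01):
    """Chance that at least one of `fanout` independent accesses lands in the tail."""
    return 1 - (1 - tail_fraction) ** fanout

if __name__ == "__main__":
    print(f"mean={mean_ns:.1f} ns  p50={p50_ns} ns  p99={p99_ns} ns")
    for fanout in (1, 10, 100):
        print(f"fanout={fanout:>3}: P(at least one tail access) = {prob_any_tail(fanout):.1%}")
```

In this toy mix the mean barely moves off the fast path while p99 sits entirely on the slow path. The fan-out calculation shows why that matters: a request that depends on many memory accesses is likely to hit at least one slow access, assuming the accesses are independent.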

Why Current Mitigations Fall Short

Existing fixes demand heavy tradeoffs. Paragon and other row-buffer bypass schemes cut tails by 50-70% but eat 20-30% more power and area. Refresh management like RowClone saves cycles yet boosts error rates without ECC tweaks. Software approaches, like request batching in Memcached, mask symptoms but don’t fix hardware roots. Datacenters resort to overprovisioning—throwing 2-3x more servers at the problem—or migrating to pricier LPDDR5, which still shows tails.

Tailslayer flips the script with prediction-guided prefetching. It deploys a lightweight table-based predictor in the memory controller to spot impending tail events. Tracking per-bank access patterns over 64 rows, it flags sequences likely to hit conflicts (e.g., repeated row hits followed by a miss). On detection, it issues a low-priority prefetch to the row buffer ahead of the critical access, ensuring data lands just in time.
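The idea can be sketched in a few lines of Python. This is a toy model under stated assumptions, not the published design: the `Entry` and `BankPredictor` names, the 2-bit confidence counter, and the `STREAK_THRESHOLD` and `CONFIDENCE_MIN` values are all hypothetical. Only the per-bank, 64-entry table size and the "row hits followed by a miss" pattern come from the description above.

```python
from dataclasses import dataclass, field

ROWS_TRACKED = 64      # per-bank table size, matching the 64-row window in the text
STREAK_THRESHOLD = 2   # assumed: consecutive row hits before a conflict looks likely
CONFIDENCE_MIN = 2     # assumed: times a pattern must be seen before prefetching

@dataclass
class Entry:
    next_row: int = -1   # row that followed the hit streak last time
    confidence: int = 0  # saturating counter, 0..3

@dataclass
class BankPredictor:
    table: list = field(default_factory=lambda: [Entry() for _ in range(ROWS_TRACKED)])
    open_row: int = -1   # row currently held in the row buffer (-1 = none yet)
    streak: int = 0      # consecutive hits on open_row

    def access(self, row):
        """Record one access to this bank; return a row to prefetch, or None."""
        if row == self.open_row:
            self.streak += 1
            entry = self.table[row % ROWS_TRACKED]
            # Fire once, when the streak first reaches the threshold.
            if self.streak == STREAK_THRESHOLD and entry.confidence >= CONFIDENCE_MIN:
                return entry.next_row
            return None
        # Row miss: train on "hit streak on open_row, then miss to row".
        if self.open_row >= 0 and self.streak >= STREAK_THRESHOLD:
            entry = self.table[self.open_row % ROWS_TRACKED]
            if entry.next_row == row:
                entry.confidence = min(entry.confidence + 1, 3)
            else:
                entry.next_row, entry.confidence = row, 1
        self.open_row, self.streak = row, 0
        return None

if __name__ == "__main__":
    bank = BankPredictor()
    trace = [5, 5, 5, 9] * 3  # rows touched in one bank: hits on row 5, then a miss to row 9
    print([bank.access(row) for row in trace])
```

On this trace the predictor stays silent for the first two repetitions while it learns that row 9 follows a streak on row 5, then suggests prefetching row 9 during the third. The sketch leaves out everything that makes the hardware problem hard: request priorities, DRAM timing constraints, and the cost of a wrong guess.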

The hardware footprint stays tiny: a 1 KB predictor SRAM per channel, plus minor control logic. No changes to DRAM chips or buses are needed, so it is a drop-in for DDR4 and DDR5 systems. Software hooks are optional; it works standalone on traces from YCSB, CloudSuite, and real datacenter apps like Redis.

Benchmarks and Real-World Gains

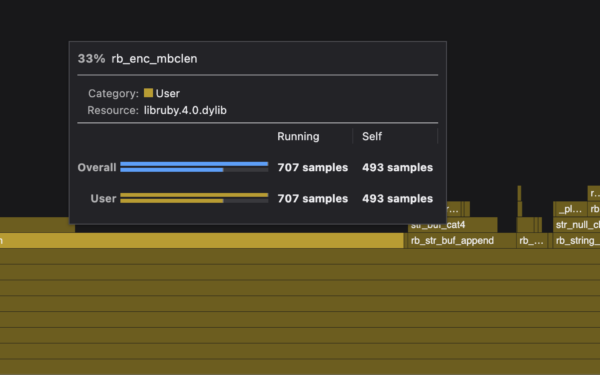

Evaluations used gem5 + Ramulator on 16-core systems with 8-channel DDR4-2400. Across 12 workloads:

- Average latency: 17% reduction.

- 95th percentile: 62% reduction.

- 99th percentile: 79% reduction.

- 99.9th percentile: 85% reduction.

Prefetch accuracy reaches 92%, with 1.2 prefetches issued per 1,000 accesses, so the bandwidth cost is small (under 2%). Power rises modestly due to the extra row activations but stays below that of competing schemes. In multi-socket setups mimicking AWS and Google servers, throughput jumps 28% under tail-latency constraints.
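A quick back-of-the-envelope check of those prefetch figures, as a Python sketch. The accuracy and prefetch rate are the reported numbers; the one-million-access sample and the `prefetch_budget` helper are assumptions made for round arithmetic. The sketch counts requests only, so it says nothing directly about bytes moved or bus occupancy.

```python
def prefetch_budget(accesses, prefetches_per_1k, accuracy):
    """Split issued prefetches into useful and wasted ones for a given access count."""
    issued = accesses * prefetches_per_1k / 1000
    useful = issued * accuracy
    wasted = issued - useful
    extra_request_share = issued / accesses  # prefetches relative to demand accesses
    return issued, useful, wasted, extra_request_share

if __name__ == "__main__":
    # 1,000,000 accesses is an assumed sample size; the other two values are the reported ones.
    issued, useful, wasted, share = prefetch_budget(1_000_000, 1.2, 0.92)
    print(f"issued={issued:.0f}  useful={useful:.0f}  wasted={wasted:.0f}")
    print(f"extra requests relative to demand accesses: {share:.2%}")
```

Under these assumptions, about 1,200 prefetches are issued per million accesses and roughly 96 of them are wasted. That is 0.12% extra requests, which sits comfortably inside the sub-2% bandwidth figure even if each prefetch costs more than an ordinary access.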

Deployment hurdles invite some skepticism. The predictors train on synthetic traces, so production traffic with adversarial patterns might degrade accuracy, and false prefetches could worsen contention in shared banks. Still, adaptive tables mitigate this, and the overheads beat alternatives like SATIN (69% tail cut, 14% area).

Why this matters for operators: Tailslayer could reclaim 20-30% capacity in memory-bound clusters without new silicon. Cloud giants lose millions yearly to tail-induced overprovisioning—Microsoft pegged it at 20% waste in 2015. Pair it with CXL or HBM for AI, and you stabilize massive models where memory stalls cascade. Open-source simulators exist; expect FPGA prototypes soon. If validated in silicon, it pressures JEDEC for controller mandates, reshaping $100B+ DRAM market dynamics.

Bottom line: Tailslayer delivers outsized tail fixes at bargain cost. Not a silver bullet, but a pragmatic step forward where others overreach.