Kubernetes finally makes IPv6 practical for native edge routing. Forget NAT headaches and overlay networks: pods get global IPv6 addresses that are directly routable from the internet. This cuts latency, simplifies firewalls, and scales without IPv4 scarcity. IPv6 reached roughly 42% of Google traffic in 2024, a slow climb for a protocol standardized in 1998. Kubernetes, now 11 years old, has shipped IPv6 support since the alpha in v1.9, but usable setups demand the right CNI and config. Why now? Edge computing is exploding, and IPv4 exhaustion forces the shift. ISPs typically delegate /56 to /64 prefixes, which yields 2^64 to 2^72 addresses per allocation. Even a single /64 holds about four billion times IPv4’s 2^32 total, and a /56 about a trillion times.
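The prefix arithmetic is easy to verify. The following is a minimal sketch that only uses the prefix lengths named above; the helper name `addresses_in_prefix` is made up for illustration.

```python
# Address counts for the delegated prefix sizes mentioned above.
IPV4_TOTAL = 2 ** 32  # size of the entire IPv4 address space


def addresses_in_prefix(prefix_len: int) -> int:
    """Number of IPv6 addresses covered by a prefix of the given length."""
    return 2 ** (128 - prefix_len)


for prefix_len in (56, 64):
    count = addresses_in_prefix(prefix_len)
    ratio = count // IPV4_TOTAL
    print(f"/{prefix_len}: 2^{128 - prefix_len} addresses, "
          f"{ratio:,} times the whole IPv4 space")
```

A /56 comes out at 2^40 (about 1.1 trillion) times the IPv4 space, and a /64 at 2^32 (about 4.3 billion) times.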

Quick Link-Local Start

Test IPv6 without ISP drama using link-local addresses. They mimic private IPv4 clusters: pods talk internally, but internet traffic still needs rewriting. Pass the ranges to kubeadm init:

kubeadm init --pod-network-cidr=fe80:10:32::/56 --service-cidr=fe80:10:64::/112

Pick a CNI such as Calico or Cilium with IPv6 enabled. Minikube, kind, or k3s handle it fine for dev. One caveat: fe80::/10 addresses are scoped to a single link and routers do not forward them, so a multi-node cluster is usually better served by a unique local (ULA) prefix from fd00::/8, such as fd00:10:32::/56. Either way there is no public routing, so it’s not “native,” but it needs zero extra setup. Pods reach each other directly; services load-balance over the private range. Drawback: external access still goes through NAT-like translation. Fine for labs, useless for production edge where direct pod exposure matters.
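Before handing a range to kubeadm, it can help to confirm which scope it falls in. This is a rough sketch using Python's standard `ipaddress` module; the `scope` helper and the `fd00:10:32::/56` unique-local example are illustrative assumptions, not part of the setup above.

```python
import ipaddress

# Well-known IPv6 ranges, checked in order.
SCOPES = [
    ("link-local", ipaddress.ip_network("fe80::/10")),
    ("unique-local", ipaddress.ip_network("fc00::/7")),
    ("global-unicast", ipaddress.ip_network("2000::/3")),
]


def scope(cidr: str) -> str:
    """Classify an IPv6 CIDR by the well-known range that contains it."""
    net = ipaddress.ip_network(cidr)
    for name, parent in SCOPES:
        if net.subnet_of(parent):
            return name
    return "other"


for cidr in ("fe80:10:32::/56", "fd00:10:32::/56", "2a01:10:32::/56"):
    print(cidr, "->", scope(cidr))
```

Link-local ranges are only valid on a single link, unique-local ranges are routable inside a site, and only global unicast ranges are routable from the internet.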

Native Global Unicast Routing

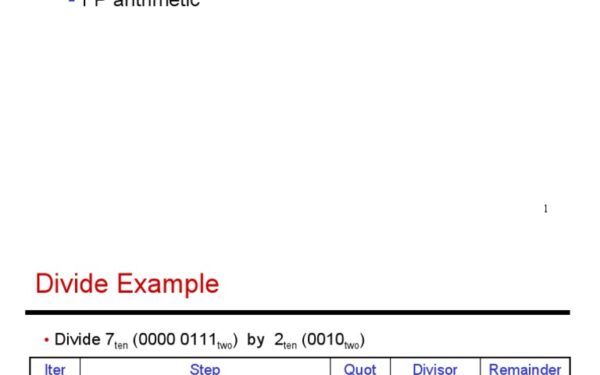

Grab your ISP’s global unicast prefix—say, a /56 like 2a01:10:32::/56—and route pods natively. No overlays for internet traffic; packets flow host-to-host using standard BGP or static routes. Init command:

kubeadm init --pod-network-cidr=2a01:10:32::/56 --service-cidr=2a01:34:e393::/112

For IPv6, Kubernetes defaults to a /64 per node carved from the pod CIDR. That is wildly oversized: 2^64 addresses dwarf any node’s pod count, which the kubelet caps at 110 by default. Fix it:

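kubeadm init --config kubeadm-config.yaml

The file name is arbitrary. The per-node mask is a kube-controller-manager flag (--node-cidr-mask-size-ipv6), not a kubeadm init flag, so set it in the kubeadm config file under controllerManager.extraArgs (value 112), next to networking.podSubnet and networking.serviceSubnet. kubeadm also rejects a node mask more than 16 bits longer than the pod subnet mask, so pair /112 with a pod subnet of /96 or longer, for example 2a01:10:32::/96 carved from the /56.

A /112 gives 65,536 addresses per node, far more than the default limit of 110 pods. Choose a shorter mask such as /96 only if a node genuinely needs more addresses, and shorten the pod subnet to match. Services get their own CIDR. IPAM plugins such as Whereabouts assign pod addresses without DHCP. Nodes announce their pod subnets via BGP or router advertisements, and edge routers forward directly to them.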
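The per-node sizing can be worked through with a short script. This is a sketch under stated assumptions: `node_plan` is a hypothetical helper, and `MAX_MASK_DIFF` encodes the 16-bit limit that kubeadm is understood to enforce between the pod subnet mask and the node mask, which is worth verifying against the Kubernetes version in use.

```python
import ipaddress
from itertools import islice

# Assumption: kubeadm rejects a node mask more than 16 bits longer than the
# pod subnet mask. Verify against the Kubernetes version you run.
MAX_MASK_DIFF = 16


def node_plan(pod_cidr: str, node_mask: int) -> dict:
    """Summarise how a pod CIDR splits into per-node subnets."""
    net = ipaddress.ip_network(pod_cidr)
    diff = node_mask - net.prefixlen
    if diff < 0:
        raise ValueError("node mask must not be shorter than the pod CIDR mask")
    # subnets() is a lazy generator, so taking the first three is cheap
    # even when the full split would contain 2^56 entries.
    first = islice(net.subnets(new_prefix=node_mask), 3)
    return {
        "node_subnets": 2 ** diff,
        "addresses_per_node": 2 ** (128 - node_mask),
        "within_mask_limit": diff <= MAX_MASK_DIFF,
        "first_subnets": [str(s) for s in first],
    }


for cidr, mask in [
    ("2a01:10:32::/56", 64),   # default: /64 per node
    ("2a01:10:32::/56", 112),  # 56-bit gap, beyond the assumed limit
    ("2a01:10:32::/96", 112),  # 16-bit gap
]:
    print(cidr, f"node mask /{mask}", node_plan(cidr, mask))
```

With the default /64 mask a /56 holds 256 node subnets. A /96 pod subnet split into /112s holds 65,536 node subnets of 65,536 addresses each, which is the combination that stays inside the assumed limit.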

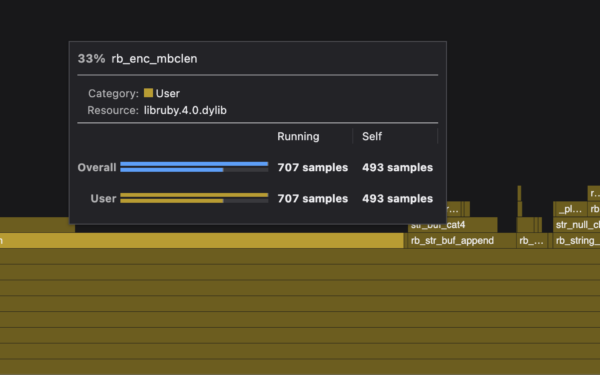

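Requirements: a stable prefix (no renumbering from prefix delegation), an upstream router that can route subnets longer than /64 to your nodes, and firewall rules per pod subnet. Test on a provider that routes a fixed IPv6 prefix to you, such as Hetzner or AWS, and check the prefix size you actually get. Cilium shines here: its eBPF datapath with native routing avoids VXLAN encapsulation, reportedly cutting per-packet overhead by around 50%.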
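The stable-prefix requirement can be checked mechanically. This is a small sketch, assuming the currently delegated prefix can be obtained from the router or provider somehow; `covered_by_delegation` is a hypothetical helper and `2a01:10:33::/56` is an invented renumbered prefix.

```python
import ipaddress


def covered_by_delegation(pod_cidr: str, delegated_prefix: str) -> bool:
    """True if the configured pod CIDR still sits inside the delegated prefix."""
    pod_net = ipaddress.ip_network(pod_cidr)
    delegated = ipaddress.ip_network(delegated_prefix)
    return pod_net.subnet_of(delegated)


POD_CIDR = "2a01:10:32::/56"

# Prefix unchanged: the pod CIDR is still routable.
print(covered_by_delegation(POD_CIDR, "2a01:10:32::/56"))

# Hypothetical renumbering: the ISP now delegates a different /56,
# so every pod address in the cluster has become unroutable.
print(covered_by_delegation(POD_CIDR, "2a01:10:33::/56"))
```

Running a check like this periodically is one cheap way to notice renumbering before traffic starts to blackhole.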

Edge Implications and Gotchas

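This setup shines at the edge: IoT gateways, telco 5G slices, or distributed CDNs. Pods expose services on global IPs, with no LoadBalancer NAT and no EgressGateway hacks. Skipping the overlay and NAT hops trims latency (reportedly 10-20 ms in some setups), and firewalls track state per flow rather than in shared NAT tables. Security? IPv6 stacks were originally required to implement IPsec (now only recommended, and using it was always optional), and the sparse address space makes brute-force address scanning impractical. Globally routable pods are also globally reachable, so the per-subnet firewall rules are not optional. Scale to millions of pods across nodes without address exhaustion.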

Skeptical take: IPv6 adoption hovers around 40% globally. ISPs are stingy with prefixes, and legacy apps break on dual-stack. Dual-stack networking went GA in Kubernetes v1.23 and single-stack IPv6 is supported too, yet about 70% of clusters reportedly still run IPv4-only per CNCF surveys. CNIs lag: Flannel barely works, Weave is poor. Edge hardware (Raspberry Pi clusters) often botches RA. Migration costs: rewriting DNS records, certificates, and monitoring.

Yet it matters. IPv4 addresses now trade for as much as $50 each on the transfer market; IPv6 is free abundance. Edge routing future-proofs against the 100B IoT devices forecast for 2030 (Ericsson). Deploy now on a VPS with a /64 and your cluster routes like bare metal. For production, audit your CNI and simulate prefix changes. Kubernetes finally turns IPv6’s 27-year wait into real utility.