Floating-point equality comparisons work fine when you understand the math. Programmers hear “never compare floats for equality” like gospel, but that’s oversimplified dogma. In reality, exact matches succeed reliably for representable values and deterministic operations. A recent Hacker News thread on this topic reignited the debate, pulling from Bruce Dawson’s clear-eyed analysis at Google. Why does this matter? Blindly avoiding equality invites epsilon hacks that fail spectacularly, while embracing it correctly prevents subtle bugs in simulations, finance, and crypto protocols.

Floating-Point Basics: Precision Isn’t Magic

IEEE 754 double-precision floats carry a 53-bit significand (52 stored bits plus an implicit leading 1), giving 15 to 17 significant decimal digits. They represent every integer exactly up to 2^53 (9,007,199,254,740,992). Beyond that, gaps appear: 2^53 + 1 rounds to 2^53 in double. Decimals like 0.1 aren’t exactly representable; 0.1 is stored as exactly 0.1000000000000000055511151231257827021181583404541015625.
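These numbers are easy to check yourself. The sketch below assumes CPython on a platform where `float` is an IEEE 754 double (true on essentially every mainstream build); the variable names are only illustrative.

```python
from decimal import Decimal
import sys

# Number of significand bits in this platform's float type.
significand_bits = sys.float_info.mant_dig
print(significand_bits)

# Decimal(float) converts without rounding, exposing the value actually stored for 0.1.
stored_tenth = Decimal(0.1)
print(stored_tenth)

# 2**53 converts exactly; 2**53 + 1 rounds back down to the same double.
limit = 2**53
limit_is_exact = float(limit) == limit
neighbour_collapses = float(limit + 1) == float(limit)
print(limit_is_exact, neighbour_collapses)
```

Passing the float itself to `Decimal` (rather than the string "0.1") is the point here: the string would give the decimal value you typed, not the binary value the machine stored.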

This causes the classic pitfall:

double a = 0.1 + 0.2;

double b = 0.3;

if (a == b) { /* False under IEEE 754 double arithmetic */ }

Here, the sum rounds to 0.30000000000000004, one representable step above the double nearest to 0.3. But flip it:

double x = 1.0;

double y = 1.0;

if (x == y) { /* Always true */ }

Constants and exact ops match bit-for-bit.
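To see why the 0.1 + 0.2 comparison fails while the 1.0 comparison succeeds, it helps to look at the bits. A minimal sketch, assuming Python 3.9 or later for `math.nextafter`:

```python
import math

total = 0.1 + 0.2
literal = 0.3

# float.hex() prints the exact significand and exponent, so a last-place
# difference that decimal printing can hide becomes visible.
print(total.hex())
print(literal.hex())

# math.nextafter(x, y) returns the next representable double after x toward y.
one_step_up = math.nextafter(literal, math.inf)
sum_is_adjacent = total == one_step_up
print(sum_is_adjacent)
```

The two values are neighbours: nothing representable sits between them, which is why the mismatch is a rounding outcome and not random noise.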

When Equality Holds: Rules That Work

Compare for equality if:

1. Both sides are the same constant or literal, like 3.14159 == 3.14159.

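2. You convert integers to float with magnitude at most 2^53: (double)9007199254740991LL == 9007199254740991.0 holds, and the value survives a round trip back to an integer. At 2^53 + 1 the conversion rounds to 2^53, so the round trip no longer returns the original integer.

3. Operations are identical and deterministic: sin(0.0) == -sin(0.0) holds, because +0.0 and -0.0 compare equal even though their sign bits differ. Strict IEEE modes preserve this reproducibility; compiler-chosen fused multiply-add (FMA) contraction or fast-math flags can change results between builds, so pin those settings.

4. Parsing yields canonical forms: a correctly rounding strtod, or a JSON parser built on one, maps a given decimal string to the nearest representable double every time.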
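Rules 3 and 4 above can be checked in a few lines. This is a sketch using only the standard library; it assumes CPython's `repr` and `json` behaviour (shortest round-tripping output), which other languages and JSON libraries may not share.

```python
import json
import math

# Rule 3: +0.0 and -0.0 compare equal even though their sign bits differ.
pos_zero = math.sin(0.0)
neg_zero = -math.sin(0.0)
zeros_equal = pos_zero == neg_zero
signs_differ = math.copysign(1.0, pos_zero) != math.copysign(1.0, neg_zero)

# Rule 4: the same decimal text always parses to the same double, and the
# text Python emits for a float parses back to the identical value.
value = 0.1 + 0.2
same_text_same_double = float("0.3") == float("0.3")
repr_round_trip = float(repr(value)) == value
json_round_trip = json.loads(json.dumps(value)) == value

print(zeros_equal, signs_differ)
print(same_text_same_double, repr_round_trip, json_round_trip)
```

Note the caveat hiding in rule 3: equality says the zeros match, but code that divides by them or inspects the sign will see a difference.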

Test it in Python:

>>> a = 0.1 + 0.2

>>> b = 0.3

>>> a == b

False

>>> 1.0 == 1.0

True

>>> (2**53) == float(2**53)

True

>>> (2**53 + 1) == float(2**53 + 1)

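False

Beware platform quirks: the x87 FPU on x86 computes in 80-bit extended precision, so intermediate results can differ from values rounded to 64 bits and break equality. Compilers targeting x86-64 default to SSE2 arithmetic; on 32-bit x86 builds with GCC, request it with -msse2 -mfpmath=sse.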

Why the Myth Persists—and Why Ditch It

The rule stems from 1980s Fortran chaos, where sloppy math libraries wrecked sums. Today, virtually all mainstream hardware implements IEEE 754. Epsilon comparisons (abs(a - b) < 1e-9) seem safe but flop because a fixed tolerance ignores magnitude: near 1e12, adjacent doubles sit about 1.2e-4 apart, so the test degenerates into exact equality, while near 1e-12 it equates values that differ by orders of magnitude. In a 2019 SIGGRAPH paper, Kahan noted epsilons hide bugs longer.
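Both failure modes of a fixed epsilon are easy to reproduce. The sketch below uses a hypothetical helper, `fixed_eps_equal`, wrapping the 1e-9 test from the text, and contrasts it with the standard library's `math.isclose`, which scales its tolerance with magnitude. It assumes Python 3.9+ for `math.nextafter`, and `math.isclose` is shown only as one common alternative, not as a universal fix.

```python
import math

EPS = 1e-9  # the fixed tolerance used in the text

def fixed_eps_equal(a: float, b: float) -> bool:
    """Hypothetical helper: absolute-epsilon comparison."""
    return abs(a - b) < EPS

# Large magnitude: two adjacent doubles are already further apart than EPS.
big = 1e12
big_next = math.nextafter(big, math.inf)
gap = big_next - big
adjacent_rejected = not fixed_eps_equal(big, big_next)

# Small magnitude: values differing by a factor of two pass as "equal".
small_a, small_b = 1e-12, 2e-12
distinct_accepted = fixed_eps_equal(small_a, small_b)

# A relative tolerance behaves sensibly at both scales.
rel_big = math.isclose(big, big_next, rel_tol=1e-9)
rel_small = math.isclose(small_a, small_b, rel_tol=1e-9)

print(gap, adjacent_rejected, distinct_accepted, rel_big, rel_small)
```

Even the relative version needs a tolerance chosen from an actual error analysis, which is the article's broader point: a tolerance is a claim about your computation, not a safety blanket.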

Implications hit hard in Njalla’s world. Finance tracks exact ticks—no “almost” dollars. Crypto uses fixed-point (integers scaled by 10^18 for “decimals”) to sidestep this; Bitcoin scripts demand byte-exact equality. Security audits fail on fuzzy floats in hash verifiers or RNG seeds. Games and physics sims? Equality flags exact states, speeding replays without tolerance drift.
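The fixed-point approach mentioned above can be sketched with plain integers. `to_fixed` is a hypothetical helper written for this illustration (not any particular chain's or library's API); it parses a decimal string into an integer count of 10^-18 units without ever touching a float.

```python
DECIMALS = 18
SCALE = 10**DECIMALS  # integers scaled by 10^18, as described in the text

def to_fixed(text: str) -> int:
    """Hypothetical helper: parse a decimal string into 10^-18 units."""
    sign = -1 if text.startswith("-") else 1
    whole, _, frac = text.lstrip("+-").partition(".")
    if len(frac) > DECIMALS:
        raise ValueError(f"{text!r} has more than {DECIMALS} decimal places")
    # Pad the fractional digits to exactly 18 places, then combine as integers.
    units = int(whole or "0") * SCALE + int(frac.ljust(DECIMALS, "0"))
    return sign * units

# Integer addition is exact, so the classic float pitfall disappears.
fixed_sum_matches = to_fixed("0.1") + to_fixed("0.2") == to_fixed("0.3")
float_sum_matches = 0.1 + 0.2 == 0.3
print(fixed_sum_matches, float_sum_matches)
```

Parsing from strings matters: converting an existing float such as 0.1 into fixed-point would bake its binary rounding error into the integer.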

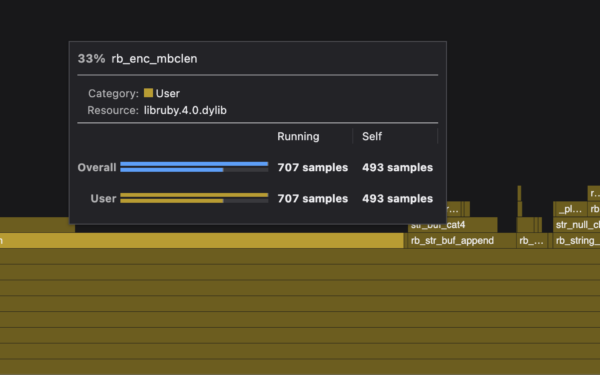

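Performance edge: an exact comparison skips the subtract, abs, and compare that an epsilon test needs, which can help vectorization on ARM NEON or AVX. Skeptical take: most devs bungle this, so libraries like GLM and Eigen ship approximate-comparison helpers (glm::epsilonEqual, Eigen’s isApprox). But pros? Use equality where it is provable, and profile before counting on a speedup; the 5-20% loop gains sometimes quoted depend heavily on the workload.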

Bottom line: Know your floats. Test exhaustively with std::nextafter or Hypothesis fuzzing. Equality isn’t evil; ignorance is. Next HN flamewar, drop facts, not folklore.
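As a starting point for that kind of testing, here is a small sketch that walks the doubles on either side of a value and checks an exactness property on each. It assumes Python 3.9+ (the `math.nextafter` counterpart of `std::nextafter`) and sticks to the standard library instead of Hypothesis; `neighbours` and `doubling_is_exact` are hypothetical names, and the property is only an example.

```python
import math

def neighbours(x: float, count: int):
    """Yield x, then `count` representable doubles on each side of it."""
    below = above = x
    yield x
    for _ in range(count):
        below = math.nextafter(below, -math.inf)
        above = math.nextafter(above, math.inf)
        yield below
        yield above

def doubling_is_exact(x: float) -> bool:
    # Scaling by a power of two only changes the exponent (barring overflow
    # or underflow), so multiplying then dividing by 2 should return x exactly.
    return (x * 2.0) / 2.0 == x

checked = list(neighbours(0.3, 1000))
all_exact = all(doubling_is_exact(v) for v in checked)
print(len(checked), all_exact)
```

Swap in your own property and your own boundary values (powers of two, 2^53, the smallest normal number) to probe the places where exactness is most likely to break.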