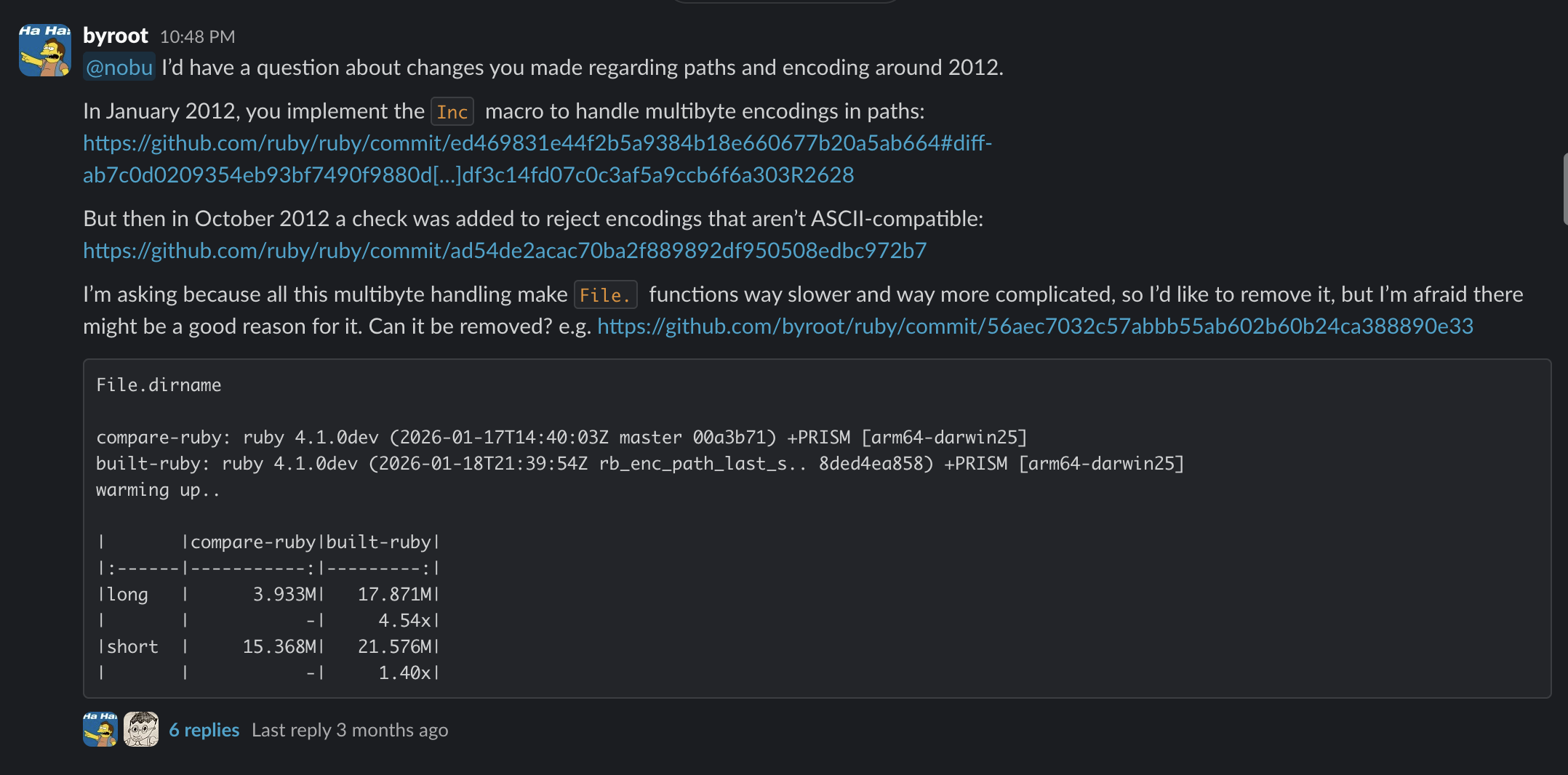

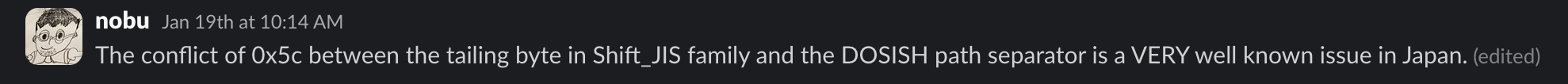

In high-parallelism CI setups like Intercom’s monolith, which runs 1350 workers by default, setup time crushes overall performance. Shave one second off worker setup, and you save 1350 seconds (22.5 minutes) of aggregate worker time per build. Test suites that once took an hour serially are now parallelized aggressively, so fixed setup costs dominate. Take 60 minutes of tests and a 1-minute setup. With 4 workers, the build takes 16 minutes instead of 15, a minor penalty. With 60 workers, it takes 2 minutes, but half of that is setup. Half your compute goes to setup instead of tests, costs spike, and engineers wait longer.
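The arithmetic above fits in a few lines of Ruby. This is a simplified model, not Intercom's tooling. It assumes tests split perfectly evenly across workers and that every worker pays the same fixed setup cost. The method names are hypothetical.

```ruby
# Wall-clock minutes for one build, assuming tests split evenly across
# workers and every worker pays the same fixed setup cost.
def build_minutes(serial_test_minutes:, workers:, setup_minutes:)
  setup_minutes + serial_test_minutes.fdiv(workers)
end

# Fraction of each worker's time spent on setup rather than running tests.
def setup_share(serial_test_minutes:, workers:, setup_minutes:)
  setup_minutes / build_minutes(serial_test_minutes: serial_test_minutes,
                                workers: workers, setup_minutes: setup_minutes)
end

[4, 60].each do |workers|
  total = build_minutes(serial_test_minutes: 60, workers: workers, setup_minutes: 1)
  share = setup_share(serial_test_minutes: 60, workers: workers, setup_minutes: 1)
  puts format("%2d workers: %.1f min per build, %.0f%% setup", workers, total, share * 100)
end

# Aggregate worker time saved per build by trimming one second of setup.
saved_seconds = 1350 * 1
puts "1 s x 1350 workers = #{saved_seconds} s (#{saved_seconds / 60.0} min)"
```

The setup share climbs from about 6% at 4 workers to 50% at 60, which is why fixed costs become the bottleneck as parallelism grows.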

This matters because parallelism’s gains vanish without fast setups. Intercom’s team targeted boot time after tackling slow tests and factories. A Ruby boot loads hundreds of files via require or load. Each relative feature name triggers a linear search across $LOAD_PATH. In large monoliths, $LOAD_PATH holds dozens of directories (gems, app directories, engines). Each search scans them in order until it finds a match or runs out of entries.

Ruby’s Load Path Search: Pure Inefficiency

Ruby’s core does this naively. Here’s the pseudocode equivalent of what happens internally:

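```ruby
# Simplified model of MRI's lookup; the real logic lives in C.
def search_load_path(feature)
  # An extensionless feature is tried as .rb, then as a native
  # extension (.so here; the suffix varies by platform).
  candidates =
    if feature.end_with?(".rb", ".so")
      [feature]
    else
      ["#{feature}.rb", "#{feature}.so"]
    end

  # Walk the load path in order; the first existing file wins.
  $LOAD_PATH.each do |load_path|
    candidates.each do |candidate|
      absolute_path = File.join(load_path, candidate)
      return absolute_path if File.exist?(absolute_path)
    end
  end
  nil
end
```

For every require 'some_gem', Ruby walks $LOAD_PATH from the front until a candidate file exists. A miss walks the whole array. Repeat this for 10,000+ files during boot (common in large Rails monoliths), with 50+ load paths, and you’re burning CPU cycles on redundant File.exist? calls. Most files resolve early, but misses and late matches hurt. Benchmarks show this alone adds 100-500ms to boots in big apps. Multiplied by 1350 workers, that’s roughly 2 to 11 minutes of aggregate worker time per build.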
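To see why late matches and misses hurt, the sketch below counts existence probes under the same simplified model. It is an assumption, not MRI's C code: each load path entry is tried with .rb, then .so. It builds 50 hypothetical gem directories in a temporary folder and passes them as an explicit array, so the real $LOAD_PATH is never touched.

```ruby
require "tmpdir"
require "fileutils"

# Count File.exist? probes under a simplified model of MRI's lookup: for an
# extensionless feature, each load path entry is tried with .rb, then .so.
def count_probes(feature, load_path)
  probes = 0
  load_path.each do |dir|
    [".rb", ".so"].each do |ext|
      probes += 1
      return probes if File.exist?(File.join(dir, feature + ext))
    end
  end
  probes # a miss probes every entry with every extension
end

hit_probes, miss_probes = Dir.mktmpdir do |root|
  # 50 hypothetical gem lib directories; only the last holds late_gem.rb.
  load_path = Array.new(50) { |i| File.join(root, "gem#{i}", "lib") }
  FileUtils.mkdir_p(load_path)
  File.write(File.join(load_path.last, "late_gem.rb"), "")

  [count_probes("late_gem", load_path), count_probes("missing_gem", load_path)]
end

puts "late hit: #{hit_probes} probes, miss: #{miss_probes} probes"
```

Under this model, a match in the last entry costs 99 probes and a miss costs 100 (two per entry). Either way, the cost grows linearly with $LOAD_PATH length.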

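Why does Ruby do this? Historical reasons: $LOAD_PATH is a plain Array that any code can mutate at runtime (for example with unshift), and files can appear on or vanish from disk between requires. A persistent index would therefore need invalidation rules the language has never imposed. Changing $LOAD_PATH to a Hash would break compatibility, and MRI developers prioritize stability over this optimization.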
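Runtime mutation is what makes naive caching unsafe. In the sketch below, the same feature name exists in two directories, and a single unshift changes which file wins. A cache keyed only on the feature name would keep returning the stale answer. The directory layout and the resolve helper are hypothetical. The code uses an explicit array in place of $LOAD_PATH.

```ruby
require "tmpdir"

# First-match lookup over an explicit array, mirroring require's precedence.
def resolve(feature, load_path)
  load_path.each do |dir|
    path = File.join(dir, "#{feature}.rb")
    return path if File.exist?(path)
  end
  nil
end

before, after = Dir.mktmpdir do |root|
  vendor_dir = File.join(root, "vendor")
  gem_dir = File.join(root, "gem")
  [vendor_dir, gem_dir].each do |dir|
    Dir.mkdir(dir)
    # Hypothetical layout: the same feature name exists in both directories.
    File.write(File.join(dir, "config_loader.rb"), "")
  end

  load_path = [gem_dir]
  first = resolve("config_loader", load_path)
  load_path.unshift(vendor_dir) # the kind of runtime mutation code applies to $LOAD_PATH
  second = resolve("config_loader", load_path)
  [first, second].map { |path| File.basename(File.dirname(path)) }
end

puts "before unshift: #{before}, after unshift: #{after}"
```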

Bootsnap’s Targeted Fix

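Enter Bootsnap, a Shopify gem that has shipped in the default Gemfile of new Rails apps since Rails 5.2 and is widely used beyond Rails. It doesn’t rewrite Ruby; it observes and caches. For load paths, Bootsnap scans each $LOAD_PATH entry once, records every requirable file it finds, and builds a Hash from feature name to absolute path. The scan results are persisted to disk, so later boots can reuse them instead of rescanning. A require then resolves with an O(1) Hash lookup instead of a chain of File.exist? probes.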
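The core idea, scanning once and then looking up, can be sketched in plain Ruby. LoadPathIndex is a hypothetical class and not Bootsnap's API. It indexes only .rb files and keeps the index in memory, whereas Bootsnap also handles native extensions and persists its scan. It does preserve require's precedence: when two entries contain the same feature, the earlier one wins.

```ruby
require "tmpdir"
require "fileutils"

# Hypothetical sketch of a Bootsnap-style index (not Bootsnap's real API):
# scan each load path entry once and map feature names to absolute paths.
class LoadPathIndex
  def initialize(load_path)
    @index = {}
    load_path.each do |dir|
      Dir.glob("**/*.rb", base: dir).each do |relative|
        # Earlier load path entries win, matching require's precedence.
        @index[relative.delete_suffix(".rb")] ||= File.join(dir, relative)
      end
    end
  end

  # One Hash lookup instead of one File.exist? probe per load path entry.
  def resolve(feature)
    @index[feature.delete_suffix(".rb")]
  end
end

results = Dir.mktmpdir do |root|
  app_lib = File.join(root, "app", "lib")
  gem_lib = File.join(root, "gem", "lib")
  FileUtils.mkdir_p([File.join(app_lib, "billing"), gem_lib])
  File.write(File.join(app_lib, "billing", "invoice.rb"), "")
  File.write(File.join(app_lib, "helpers.rb"), "")
  File.write(File.join(gem_lib, "helpers.rb"), "") # shadowed by app_lib

  index = LoadPathIndex.new([app_lib, gem_lib])
  {
    nested: index.resolve("billing/invoice") == File.join(app_lib, "billing", "invoice.rb"),
    shadowed: index.resolve("helpers") == File.join(app_lib, "helpers.rb"),
    missing: index.resolve("nope").nil?
  }
end

p results
```

The trade-off is one up-front directory walk per entry in exchange for constant-time lookups. That pays off when the same boot requires thousands of features.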

It monkey-patches Kernel#require and friends with a resolver that checks the cache before falling back to Ruby’s normal search. It also observes mutations to $LOAD_PATH and updates the index accordingly. It treats gem directories as stable, while rechecking application directories, whose contents change during development. Result: reported boot speedups of 20-50% in gem-heavy apps, per real-world benchmarks from Shopify and GitHub users.
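A resolver in that spirit fits in a small module. CachedRequire is hypothetical and is not Bootsnap's implementation. It rebuilds its index whenever $LOAD_PATH differs from a saved snapshot, which is a crude stand-in for Bootsnap's change observer. It also indexes only top-level .rb files. It relies on one real property of require: passing an absolute path skips the $LOAD_PATH walk.

```ruby
require "tmpdir"

# Hypothetical sketch (not Bootsnap's implementation): resolve features through
# an index, rebuild it whenever $LOAD_PATH changes, fall back to plain require.
module CachedRequire
  @snapshot = nil
  @index = {}

  def self.resolve(feature)
    rebuild unless @snapshot == $LOAD_PATH
    @index[feature]
  end

  def self.require_feature(feature)
    path = resolve(feature)
    # An absolute path skips the $LOAD_PATH walk; a miss uses the normal search.
    path ? require(path) : require(feature)
  end

  def self.rebuild
    @snapshot = $LOAD_PATH.dup
    @index = {}
    $LOAD_PATH.each do |entry|
      dir = entry.to_s
      next unless File.directory?(dir)
      # Only top-level .rb files, to keep the sketch short.
      Dir.glob("*.rb", base: dir).each do |file|
        @index[file.delete_suffix(".rb")] ||= File.join(dir, file)
      end
    end
  end
end

Dir.mktmpdir do |dir|
  File.write(File.join(dir, "greeting_demo.rb"), "GREETING_DEMO = 'hello'\n")
  $LOAD_PATH.unshift(dir)
  CachedRequire.require_feature("greeting_demo") # $LOAD_PATH changed, so the index is rebuilt
  puts GREETING_DEMO
ensure
  $LOAD_PATH.delete(dir)
end
```

Comparing against a snapshot costs a full array comparison on every lookup. An observer that hooks mutation methods, which is what the text describes Bootsnap doing, avoids that cost.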

Intercom measured gains directly: Bootsnap tweaks dropped setup by seconds. A skeptical note: it’s not universal magic. Small apps see minimal wins, and cold boots (an empty cache) still pay for the full scan. In CI, keep gem paths stable with a frozen bundle (Bundler’s --frozen), and persist Bootsnap’s cache directory between runs so workers don’t all start cold. Custom resolvers are an alternative, but Bootsnap is battle-tested and needs only a line or two of setup.

Broader implications hit finance and ops. CI costs scale with parallelism. At $0.10 per worker-hour for AWS spot instances, each second of setup across 1350 workers is 1350 worker-seconds, or about $0.04 per build, and that cost recurs on every build. Faster setups let you push parallelism harder without bill shock. For crypto and security firms running Rails monoliths (yes, some do, for dashboards), faster CI also shortens audit and vulnerability-scan cycles. Devs iterate faster, shipping secure code sooner.
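The cost figure is simple arithmetic, sketched below. The $0.10 per worker-hour rate is the article's figure. The method name is hypothetical. The model assumes every worker is billed for its setup time at a flat rate.

```ruby
# Rough cost of fixed setup time per build, assuming every worker is billed
# for its setup seconds at a flat hourly rate.
def setup_cost_per_build(workers:, setup_seconds:, dollars_per_worker_hour:)
  worker_hours = workers * setup_seconds / 3600.0
  worker_hours * dollars_per_worker_hour
end

one_second = setup_cost_per_build(workers: 1350, setup_seconds: 1, dollars_per_worker_hour: 0.10)
one_minute = setup_cost_per_build(workers: 1350, setup_seconds: 60, dollars_per_worker_hour: 0.10)
puts format("1 s of setup:  $%.4f per build", one_second)
puts format("60 s of setup: $%.2f per build", one_minute)
```

Multiply by your own daily build count to get a budget figure. The per-build number is small, but it is paid on every run.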

Don’t stop here. Profile your boots with stackprof or ruby-prof:

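```shell
$ gem install stackprof
$ ruby -rstackprof -e "StackProf.run(mode: :wall, out: 'tmp/boot.prof') { require './config/environment' }"
$ stackprof tmp/boot.prof --text --limit 10
```

The second command assumes a Rails layout, where config/environment.rb boots the app, and an existing tmp/ directory. If Kernel#require dominates the report, load path resolution is a likely target. Enable Bootsnap’s compile_cache_iseq: true for bytecode caching too. YJIT in Ruby 3.2+ mainly speeds up the code that runs after boot, not the boot itself, so treat it as a complement to these caches rather than a replacement. Test it: your 1350 workers (or even 10 on a laptop) will thank you.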
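New Rails apps enable Bootsnap with a single require "bootsnap/setup" in config/boot.rb. For explicit control in CI, Bootsnap's README documents a setup call along the lines of the fragment below. The option names come from that README, but check them against the Bootsnap version in your Gemfile.lock. The cache_dir value is an assumption. The README warns that compile_cache_iseq can break coverage reporting, so consider disabling it in coverage jobs.

```ruby
# config/boot.rb (sketch): explicit Bootsnap setup for a CI worker.
require "bootsnap"

Bootsnap.setup(
  cache_dir:          "tmp/cache", # assumed path; persist it between CI runs to avoid cold boots
  development_mode:   false,       # CI, not local development
  load_path_cache:    true,        # the feature-to-path index
  compile_cache_iseq: true,        # cache compiled bytecode (ISeq)
  compile_cache_yaml: true         # cache parsed YAML
)
```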