A developer on Hacker News ditched a $100 monthly Claude AI bill for coding by switching to Zed editor and OpenRouter’s pay-per-use models. This reallocates spend from Anthropic’s premium LLM to flexible, cheaper alternatives without sacrificing much productivity. In 2024, with AI coding tools exploding, this move highlights a key trend: flat subscriptions bleed cash for sporadic heavy users, while token-based pricing aligns costs with output.

Breaking Down the Costs

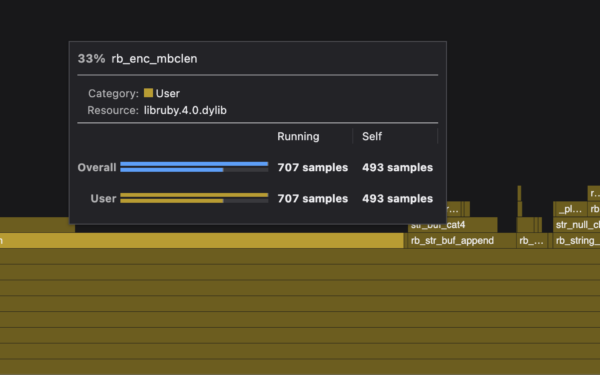

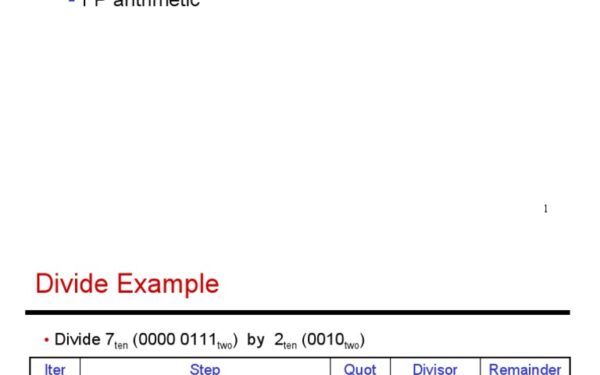

Claude.ai Pro runs $20 per user monthly for priority access to models like Claude 3.5 Sonnet, Anthropic’s coding powerhouse. But heavy coders hit the subscription’s usage limits fast, which pushes them to the pay-per-token API: Claude 3.5 Sonnet costs $3 per million input tokens and $15 per million output tokens. A $100 bill implies roughly 6-7 million output tokens monthly if nearly all of the spend goes to output, which is realistic for daily 2-3 hour sessions generating and debugging code.
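The $100 figure can be checked with a few lines of arithmetic. This is a minimal sketch, assuming the list prices quoted above; the helper names `tokens_for_budget` and `monthly_cost` are hypothetical, and the mixed-usage month in the example is made up for illustration.

```python
# Prices quoted in the text for Claude 3.5 Sonnet, in USD per million tokens.
INPUT_PRICE = 3.0
OUTPUT_PRICE = 15.0


def tokens_for_budget(budget_usd: float, price_per_million: float) -> float:
    """Return how many tokens a budget buys at a flat per-million price."""
    return budget_usd / price_per_million * 1_000_000


def monthly_cost(input_tokens: int, output_tokens: int) -> float:
    """Return the API bill in USD for a month's input and output tokens."""
    return (input_tokens * INPUT_PRICE + output_tokens * OUTPUT_PRICE) / 1_000_000


if __name__ == "__main__":
    # If the whole $100 went to output tokens.
    print(f"{tokens_for_budget(100, OUTPUT_PRICE):,.0f} output tokens")
    # A hypothetical mixed month: 15M input tokens plus 3.5M output tokens.
    print(f"${monthly_cost(15_000_000, 3_500_000):.2f}")
```

In practice input tokens (pasted files, chat history) usually make up a large share of the bill, so the all-output case is a lower bound on total tokens, not a typical month.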

Zed costs nothing. Built in Rust, it leverages multi-core CPUs and GPUs for sub-100ms responsiveness on large codebases, crushing VS Code’s Electron bloat. Benchmarks from 2024 show Zed handling 1M+ line repos at 60fps, with collaborative editing via CRDTs rivaling Cursor or Replit.

OpenRouter routes queries to 100+ models via a unified API. Route to DeepSeek Coder V2 (beats Sonnet on HumanEval at a fraction of the cost: $0.14/$0.28 per million in/out) or Mistral Large 2 ($2/$6). At DeepSeek’s rates, $100 buys roughly 350-700 million tokens monthly, on the order of 50x the Claude volume. Real spend? Under $20 for equivalent usage, per HN thread estimates.
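For a sense of what "unified API" means in practice, here is a hedged sketch of a single request. It assumes OpenRouter's OpenAI-compatible chat completions endpoint and an API key in the `OPENROUTER_API_KEY` environment variable. The model slug is an assumption and should be checked against OpenRouter's current model list; `build_request` is a hypothetical helper, not part of any SDK.

```python
import json
import os
import urllib.request

# OpenRouter exposes an OpenAI-compatible chat completions endpoint.
OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"


def build_request(prompt: str, model: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) a chat completion request for OpenRouter."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


if __name__ == "__main__":
    key = os.environ.get("OPENROUTER_API_KEY")
    req = build_request(
        "Refactor this module to remove duplication.",
        model="deepseek/deepseek-coder",  # assumed slug; verify before use
        api_key=key or "missing-key",
    )
    if key:  # only touch the network when a real key is configured
        with urllib.request.urlopen(req) as resp:
            body = json.load(resp)
        print(body["choices"][0]["message"]["content"])
        print(body.get("usage"))  # token counts, when the response includes them
    else:
        print(req.data.decode("utf-8"))
```

Switching models is then a one-string change to `model`, which is what makes side-by-side comparison cheap.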

Why the Switch Works—and Where It Falters

Zed shines for speed freaks. Its GPU-accelerated rendering and native LSP support make it feel like a game engine for code. Pair with OpenRouter via Zed’s agent mode or extensions like Continue.dev: prompt “refactor this module,” get instant diffs. No more waiting on Claude’s rate limits during crunch time.

OpenRouter’s edge is flexibility. Benchmark your workflow: Sonnet wins on complex reasoning (82% on SWE-Bench), but Qwen 2.5 or Llama 3.1 405B reach 75-80% at 10-20% of the cost. Cache hits can cut the bill for repeated prompts by about 50%. Integrations cover VS Code forks too, but Zed’s lightness amplifies gains.
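One way to frame the benchmark-your-workflow advice is cost per solved task instead of cost per token. The sketch below assumes you retry a failed task until it succeeds and that attempts are independent, so the expected number of attempts is 1 / solve rate. The solve rates echo the figures above; the per-attempt dollar costs and the `Candidate` type are hypothetical placeholders for your own measurements.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    solve_rate: float        # fraction of tasks solved on your own benchmark
    cost_per_attempt: float  # average USD per task attempt


def cost_per_solved_task(c: Candidate) -> float:
    """Expected spend per solved task, assuming independent retries until success."""
    if not 0 < c.solve_rate <= 1:
        raise ValueError("solve_rate must be in (0, 1]")
    return c.cost_per_attempt / c.solve_rate


if __name__ == "__main__":
    candidates = [
        # Hypothetical per-attempt costs; the cheaper model is priced at 15%.
        Candidate("sonnet", solve_rate=0.82, cost_per_attempt=0.10),
        Candidate("cheaper-model", solve_rate=0.75, cost_per_attempt=0.015),
    ]
    for c in sorted(candidates, key=cost_per_solved_task):
        print(f"{c.name}: ${cost_per_solved_task(c):.3f} per solved task")
```

This ignores the developer time lost to failed attempts, which can easily outweigh the token savings on hard tasks.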

Skeptical take: Claude’s safety rails and context window (200k tokens) beat most models you reach through a router for enterprise codebases. Open models hallucinate more on niche stacks like Rust async. Routing adds 200-500ms of round-trip latency. If your $100 bought peace of mind, not just tokens, reconsider.

Yet data backs the shift. Axioms’ 2024 survey: 40% of devs underspend on AI (<$50/month), but 25% overshoot on subs. HN comments echo: one user cut $150 Claude to $12 OpenRouter, shipping 2x features. Zed adopters report 30% faster iteration from reduced cognitive load—no lag-induced context switches.

Broader Implications for Devs and Budgets

This isn’t hype—it’s arithmetic. AI costs dropped 90% yearly; Claude’s premium holds via brand, not value. Reallocate to tools like Zed (free), OpenRouter ($0.10-1/M tokens), and self-hosted like Ollama on a $500 GPU rig (amortized $10/month). Total stack: under $30 for 10x output.
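The amortized figure is worth spelling out, since it depends entirely on how long the hardware lasts. A minimal sketch, assuming straight-line amortization and ignoring electricity; `amortized_monthly` is a hypothetical helper and the $18 OpenRouter spend is a stand-in for "under $20".

```python
def amortized_monthly(hardware_usd: float, lifetime_months: int) -> float:
    """Spread a one-off hardware purchase evenly over its expected lifetime."""
    return hardware_usd / lifetime_months


if __name__ == "__main__":
    # $10/month for a $500 rig implies a 50-month (roughly four-year) lifetime.
    gpu = amortized_monthly(500, 50)
    openrouter = 18.0  # hypothetical monthly pay-per-use spend
    print(f"GPU: ${gpu:.2f}/month, stack total: ${gpu + openrouter:.2f}/month")
```

Halve the assumed lifetime to 25 months and the GPU share doubles to $20, which pushes the stack past the $30 mark.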

Why it matters: Vendor lock-in kills. Anthropic and OpenAI control 70% of the market, but routers democratize access. Firms like Replicate or Together.ai compete on price/performance. Devs regain control: audit spends, A/B models, scale to zero.

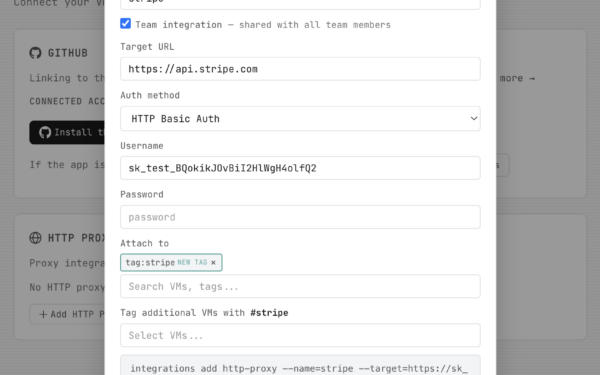

Actionable steps: Track your tokens with OpenRouter dashboard. Test Zed on a side project; install via `curl -f https://zed.dev/install.sh | sh`. Migrate prompts via Continue plugin. If Claude’s magic fades, you’ve saved $1,000 yearly. In a layoffs economy, that’s a month’s runway.
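If you would rather track tokens yourself than rely on a dashboard, the token counts in each response are enough. The sketch below assumes you log the OpenAI-style `usage` object (`prompt_tokens`, `completion_tokens`) from every call; the model keys in `PRICES` are hypothetical labels, and the prices are the per-million figures quoted in this article.

```python
from collections import defaultdict

# USD per million tokens as (input, output), using the figures quoted above.
PRICES = {
    "claude-3.5-sonnet": (3.00, 15.00),
    "deepseek-coder-v2": (0.14, 0.28),
    "mistral-large-2": (2.00, 6.00),
}


def spend_by_model(records):
    """Sum USD spend per model from an iterable of (model, usage) records."""
    totals = defaultdict(float)
    for model, usage in records:
        price_in, price_out = PRICES[model]
        totals[model] += (
            usage["prompt_tokens"] * price_in
            + usage["completion_tokens"] * price_out
        ) / 1_000_000
    return dict(totals)


if __name__ == "__main__":
    # Hypothetical log entries for illustration only.
    log = [
        ("deepseek-coder-v2", {"prompt_tokens": 40_000, "completion_tokens": 6_000}),
        ("claude-3.5-sonnet", {"prompt_tokens": 40_000, "completion_tokens": 6_000}),
    ]
    for model, usd in spend_by_model(log).items():
        print(f"{model}: ${usd:.4f}")
```

Running the same workload through two price rows like this is a quick way to see whether the switch would actually save money for your usage pattern.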

Bottom line: $100 Claude buys diminishing returns. Zed + OpenRouter delivers more code, less burn. Audit your stack now.