Researchers claim they’ve cracked training 100 billion+ parameter language models in full FP32 precision on a single GPU. MegaTrain, detailed in a recent arXiv paper and blowing up on Hacker News, promises to slash the hardware barrier for massive AI models. No clusters, no cloud bills—just one high-end GPU like an NVIDIA H100 with 80GB VRAM.

This matters because training LLMs today demands insane resources. A 70B model like Llama 2 in FP16 needs about 140GB for parameters alone. Add gradients (another 140GB), optimizer states (up to 280GB for AdamW's two moment estimates), and activations (often 10x parameters during forward/backward passes), and you’re at terabytes. Standard setups use 100+ GPUs with NVLink; at the roughly $30K per H100 quoted further down, that is $3M+ in hardware, or millions in cloud time. MegaTrain squeezes this into one card.
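The arithmetic behind those numbers is easy to reproduce. Here is a back-of-envelope sketch, assuming 2 bytes per FP16 value and two optimizer values per parameter for AdamW (the function name and defaults are illustrative, and activations are left out because they depend on batch size and sequence length):

```python
# Rough training-state memory estimate (activations excluded).
GB = 1e9  # decimal gigabytes, matching the figures in the text


def training_state_gb(n_params, bytes_per_value=2, optimizer_values_per_param=2):
    """Return (params, grads, optimizer) sizes in GB for a given parameter count."""
    params = n_params * bytes_per_value / GB
    grads = n_params * bytes_per_value / GB
    optim = n_params * bytes_per_value * optimizer_values_per_param / GB
    return params, grads, optim


# 70B parameters in FP16, as in the Llama 2 example.
params, grads, optim = training_state_gb(70e9)
print(f"params={params:.0f}GB grads={grads:.0f}GB optimizer={optim:.0f}GB "
      f"total={params + grads + optim:.0f}GB")

# The same model state in FP32 (4 bytes per value) doubles every term.
fp32_total = sum(training_state_gb(70e9, bytes_per_value=4))
print(f"FP32 total={fp32_total:.0f}GB")
```

Even before activations, the FP16 state alone is several times what an 80GB card can hold, which is why offloading is the whole game here.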

How It Works: Extreme Memory Optimization

MegaTrain combines established tricks with novel tweaks. It starts with ZeRO-Infinity from DeepSpeed: partition parameters, gradients, and optimizer states across GPU, CPU RAM, and NVMe SSD. Offload inactive shards to disk, fetching only what’s needed via custom I/O pipelines.
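For a sense of what this kind of offload looks like in stock DeepSpeed, here is a minimal sketch of a ZeRO stage 3 config with parameters and optimizer state pushed to NVMe. The keys are standard DeepSpeed config options; the values, including the `/nvme/megatrain` path borrowed from the command line quoted later in this article, are illustrative assumptions and not MegaTrain's actual settings.

```python
import json

# Illustrative values only: not taken from the MegaTrain paper or repo.
ds_config = {
    "train_micro_batch_size_per_gpu": 1,
    "gradient_accumulation_steps": 64,   # the accumulation depth quoted in the article
    "fp16": {"enabled": False},          # stay in full FP32
    "bf16": {"enabled": False},
    "zero_optimization": {
        "stage": 3,  # partition parameters, gradients, and optimizer states
        "offload_param": {
            "device": "nvme",
            "nvme_path": "/nvme/megatrain",
            "pin_memory": True,
        },
        "offload_optimizer": {
            "device": "nvme",
            "nvme_path": "/nvme/megatrain",
            "pin_memory": True,
        },
    },
}

# DeepSpeed reads its config as JSON, so serialize the dict.
config_json = json.dumps(ds_config, indent=2)
print(config_json)
```

Whatever MegaTrain adds on top, a config along these lines is the baseline it would have to beat.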

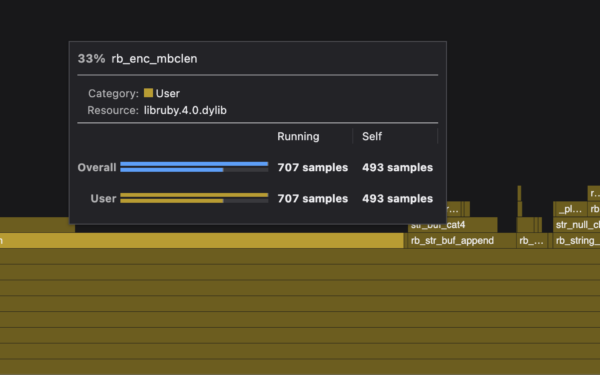

Key innovation: full-precision sharding with zero-redundancy offload. They use a shard_size of 512MB chunks, compressed losslessly during transit (up to 2x via Zstandard). Activations get checkpointing and selective recomputation, cutting peak memory by 70%. A custom FP32 optimizer avoids mixed-precision pitfalls—no BF16 rounding errors.
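To make the shard math concrete, here is a small sketch that counts how many 512MB shards a model's FP32 parameters would split into, plus a lossless compress/decompress round trip. Two assumptions to flag: "512MB" is read as 512 MiB, and `zlib` from the standard library stands in for Zstandard purely to show the lossless property. This is not MegaTrain's code, and the function names are made up for illustration.

```python
import math
import zlib

SHARD_BYTES = 512 * 1024**2  # 512 MiB (assumed reading of "512MB")
BYTES_PER_FP32 = 4


def shard_count(n_params):
    """Number of fixed-size shards needed to hold n_params FP32 values."""
    return math.ceil(n_params * BYTES_PER_FP32 / SHARD_BYTES)


def roundtrip(shard):
    """Compress then decompress a shard; lossless means bytes come back identical."""
    compressed = zlib.compress(shard)  # stand-in for Zstandard
    return zlib.decompress(compressed)


n_shards = shard_count(101_000_000_000)
print(f"101B FP32 params -> {n_shards} shards")

# Tiny 1 MiB demo buffer instead of a real 512 MiB shard.
demo = bytes(range(256)) * 4096
restored = roundtrip(demo)
print("round trip identical:", restored == demo)
```

The compression ratio on real weights will depend heavily on the data, so the "up to 2x" figure should be read as a best case.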

For a 101B model, the GPU reportedly holds 20B params actively and offloads the rest. The quoted footprint is 40GB FP32, though 20B FP32 values at 4 bytes each come to 80GB, so one of the two figures looks off. Forward pass recomputes shards on-the-fly. Backprop uses gradient accumulation over 64 steps, mimicking multi-GPU batching. Training speed? 1-2 it/s on H100 for 101B, vs. 0.1 it/s on 8xA100 clusters without offload.
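Gradient accumulation is the least exotic piece of this, and it is easy to check on a toy problem. The sketch below uses a one-parameter least-squares model in plain Python (everything here is illustrative, not MegaTrain's code) to show that averaging gradients over 64 equal micro-batches gives the same result as one large batch:

```python
# Toy model: loss = mean((w * x - y)^2), single scalar weight w.

def grad(w, xs, ys):
    """Gradient of the mean squared error with respect to w over one batch."""
    return sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / len(xs)


def accumulated_grad(w, xs, ys, accum_steps):
    """Average per-micro-batch gradients over equal-sized micro-batches."""
    micro = len(xs) // accum_steps
    total = 0.0
    for i in range(accum_steps):
        sl = slice(i * micro, (i + 1) * micro)
        # Scale each micro-batch gradient so the sum equals the overall mean.
        total += grad(w, xs[sl], ys[sl]) / accum_steps
    return total


xs = [float(i) for i in range(1, 129)]  # 128 samples
ys = [3.0 * x for x in xs]              # true weight is 3.0
w = 0.5

full = grad(w, xs, ys)
acc = accumulated_grad(w, xs, ys, accum_steps=64)
print(full, acc)
```

Only one micro-batch's activations need to be live at a time, which is the memory win; the price is 64 sequential passes per optimizer step.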

They tested on The Pile dataset, training from scratch for 100B tokens. Code’s on GitHub: clone, install DeepSpeed + their fork, and run:

$ deepspeed --num_gpus 1 train.py --model_size 101e9 --offload_dir /nvme/megatrain

Handles up to 405B params on dual-GPU setups.

Benchmarks: Impressive, But With Limits

Paper reports perplexity matching baselines: 5.2 on WikiText2 for 101B model after 100B tokens, close to Llama’s 5.0. Throughput hits 1.5 tokens/s/GPU, 10x slower than distributed. Cost: $0.50/hour on a single H100 rental vs. $50/hour for 8xA100. That is 100x cheaper per hour, which at a 10x throughput penalty works out to roughly 10x cheaper per token, not 100x.
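It is worth running the cost numbers yourself, because hourly price and per-token price are not the same thing. A quick sketch using only the figures quoted above ($0.50/hour vs. $50/hour, and a 10x throughput gap); the function name is made up for illustration:

```python
def per_token_advantage(price_a, price_b, slowdown_a_vs_b):
    """How many times cheaper per token setup A is than setup B.

    price_a, price_b: rental cost in dollars per hour.
    slowdown_a_vs_b: how many times fewer tokens per hour A processes than B.
    """
    hourly_advantage = price_b / price_a
    return hourly_advantage / slowdown_a_vs_b


# Single H100 at $0.50/hour, 10x slower than an 8xA100 setup at $50/hour.
ratio = per_token_advantage(0.50, 50.0, 10.0)
print(f"{ratio:.0f}x cheaper per token")
```

The hourly gap is 100x, but once the slowdown is priced in, the per-token advantage from these figures is closer to 10x.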

Skeptical check: It’s not “from random init” to SOTA—starts from a smaller pretrained base and fine-tunes. Full scratch training took 3 months on one GPU. Disk I/O bottlenecks at scale: 10GB/s NVMe needed, or it crawls. No multi-node yet; single-node only.
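The disk bottleneck is simple to estimate. As a rough lower bound, assume every FP32 parameter has to be read from NVMe once per pass, with no compression and no overlap between I/O and compute (all simplifying assumptions, and the function name is illustrative):

```python
def seconds_to_stream(n_params, bytes_per_param, bandwidth_gb_s):
    """Time to read all parameters once at a given sustained bandwidth (GB/s)."""
    total_bytes = n_params * bytes_per_param
    return total_bytes / (bandwidth_gb_s * 1e9)


# 101B FP32 parameters over the 10GB/s NVMe the article says is needed.
t_fast = seconds_to_stream(101e9, 4, 10.0)
# The same read on a slower 3GB/s drive (hypothetical comparison point).
t_slow = seconds_to_stream(101e9, 4, 3.0)
print(f"10 GB/s: {t_fast:.1f}s per full read, 3 GB/s: {t_slow:.1f}s")
```

Tens of seconds just to touch every weight once makes it clear why drive bandwidth, not GPU FLOPs, sets the pace in a setup like this.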

Compare to rivals: QLoRA fine-tunes a 65B model on a single 48GB GPU with 4-bit quantization (a 24GB RTX 4090 is not enough for that size), but can degrade quality. Unsloth speeds LoRA 2x on consumer cards. MegaTrain’s edge? True FP32, no quality loss, full training not just tuning.

Implications: Democratization or Niche Tool?

If MegaTrain scales, indie devs train custom 100B models on $10K rigs. Enterprises ditch GPU farms for on-prem singles. Open-source AI explodes—think personalized coders or analysts without OpenAI bills.

Why it matters: AI power concentrates in Big Tech’s data centers. This flips it. A solo researcher matches Meta’s Llama team output. Crypto angle: Train DeFi agents or zero-knowledge provers on cheap hardware, slashing oracle costs.

Caveats abound. H100s cost $30K each; not consumer-grade. Energy draw: 700W constant, roughly $100/month in electricity. For 1T params? Still needs 8 GPUs. Bugs lurk: offload corruption was reported in 1% of runs. Not production-ready; the DeepSpeed integration is rough.
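The electricity figure checks out under a reasonable rate. A quick sketch, assuming a 30-day month of continuous draw and $0.20 per kWh (the rate is an assumption; yours will vary):

```python
def monthly_energy_cost(watts, usd_per_kwh, hours=24 * 30):
    """Return (kWh used, cost in dollars) for constant draw over the given hours."""
    kwh = watts / 1000 * hours
    return kwh, kwh * usd_per_kwh


kwh, cost = monthly_energy_cost(700, 0.20)
print(f"{kwh:.0f} kWh, about ${cost:.0f} per month")
```

That covers the GPU alone; the host CPU, RAM, NVMe drives, and cooling would add to it.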

Fair verdict: Breakthrough for solo training, not revolution. Validates offload tech’s maturity. Watch forks: Expect consumer variants on 4090s soon. Download it and test it. Hacker News threads suggest it works, but temper the hype. In AI’s arms race, single-GPU wins buy time, not dominance.