Yesterday Google open-sourced Scion, an experimental testbed designed to benchmark and compare AI agent orchestration frameworks. Developers can now run standardized evaluations on tools like LangChain, LlamaIndex, Haystack, and Semantic Kernel without building custom setups from scratch. It arrives amid a flood of agentic AI hype, in which more than 50 orchestration libraries compete but lack consistent metrics to separate signal from noise.

Scion tackles a core problem in multi-agent systems: evaluating orchestration. Single LLMs handle simple queries, but complex tasks, like financial analysis or code debugging, demand agents that delegate, share memory, and route subtasks. Frameworks promise this, yet evaluations vary wildly. One might test on synthetic data with loose success criteria; another ignores cost or latency. Scion aims to standardize the tasks, the success criteria, and the cost and latency reporting.

Core Mechanics and Benchmarks

The tool wraps existing leaderboards into a unified CLI. Run

$ scion eval langchain --benchmark agentbench

and it spits out metrics: task success rate, average tool calls, latency in seconds, token costs, and error breakdowns. It pulls from established suites like AgentBench (50+ tasks across web browsing and tool use), Berkeley Function Calling Leaderboard (structured outputs), and ToolACE (multi-tool chains).
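To make those metrics concrete, here is a minimal Python sketch of how per-task results could be rolled up into the same five numbers. It is not Scion's code or output format: the `TaskRun` record, its field names, the `summarize` helper, and the sample values are all hypothetical, chosen only for illustration.

```python
from collections import Counter
from dataclasses import dataclass
from statistics import mean
from typing import Optional


@dataclass
class TaskRun:
    """One benchmark task attempt (hypothetical record shape, not Scion's)."""
    task_id: str
    succeeded: bool
    tool_calls: int
    latency_s: float
    cost_usd: float
    error: Optional[str] = None  # error label on failure, None on success


def summarize(runs: list[TaskRun]) -> dict:
    """Aggregate per-task records into the metrics named in the article."""
    if not runs:
        raise ValueError("no runs to summarize")
    return {
        "success_rate": sum(r.succeeded for r in runs) / len(runs),
        "avg_tool_calls": mean(r.tool_calls for r in runs),
        "avg_latency_s": mean(r.latency_s for r in runs),
        "total_cost_usd": sum(r.cost_usd for r in runs),
        # Count failures by error label to get an error breakdown.
        "errors": dict(Counter(r.error for r in runs if r.error)),
    }


# Made-up sample data, not real benchmark results.
runs = [
    TaskRun("t1", True, 3, 4.0, 0.05),
    TaskRun("t2", False, 5, 8.0, 0.07, error="bad_tool_args"),
    TaskRun("t3", True, 2, 3.0, 0.03),
    TaskRun("t4", False, 6, 9.0, 0.05, error="timeout"),
]
summary = summarize(runs)
print(summary)
```

Reporting the error breakdown alongside the success rate matters because two frameworks with the same pass rate can fail in very different ways.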

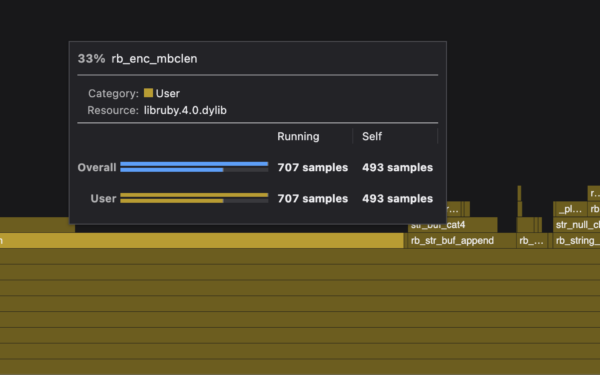

Under the hood, Scion uses Docker for isolation and supports local models via Ollama or cloud APIs such as OpenAI and Anthropic. You define agents as YAML configs, swap frameworks in minutes, and scale evals across hardware. Early tests show LangGraph edging out others on success rates for multi-hop reasoning (82% vs. 76% for baseline LangChain), but at 20% higher latency. Numbers like these cut through vendor claims.
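The LangGraph versus LangChain figures are easier to weigh once the gain and the overhead are put on the same footing. The sketch below is plain arithmetic on the three numbers quoted above; the `tradeoff` function and its output keys are hypothetical names, not part of Scion.

```python
def tradeoff(base_success_pct: float, cand_success_pct: float,
             latency_overhead_pct: float) -> dict:
    """Compare a candidate framework against a baseline.

    Success rates are percentages (e.g. 76 and 82); latency_overhead_pct
    is the candidate's extra latency relative to the baseline (e.g. 20).
    """
    point_gain = cand_success_pct - base_success_pct        # percentage points
    relative_gain = point_gain / base_success_pct * 100     # percent of baseline
    return {
        "point_gain": round(point_gain, 1),
        "relative_gain_pct": round(relative_gain, 1),
        # Extra latency (in percent) paid per percentage point of success.
        "latency_pct_per_point": round(latency_overhead_pct / point_gain, 2),
    }


# Figures quoted in the article: 82% vs. 76% success, 20% higher latency.
result = tradeoff(76, 82, 20)
print(result)
```

On these figures, a 6-point gain is about a 7.9% relative improvement, bought at roughly 3.3% extra latency per point. Whether that trade is worth it depends on the workload.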

It’s Apache 2.0 licensed on GitHub at googlecloudplatform/scion. There is no Google Cloud lock-in; it runs anywhere. The community can add benchmarks or frameworks via plugins, echoing how GLUE standardized NLP evaluation in 2018.

Why This Matters for Builders and Investors

Agent orchestration determines if AI scales to real work. Enterprises waste millions on pilots that fail under scrutiny—Scion exposes that early. A framework acing demos might flop on cost (e.g., $0.15 per task vs. $0.05) or reliability (hallucinated tool calls). Standardized benches accelerate iteration: top performers get refined, weak ones pivot.
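A quick back-of-the-envelope calculation shows why the per-task cost gap matters at scale. The sketch below uses the two example prices from the paragraph above; the volume of 100,000 tasks per month is an assumed figure for illustration, and `monthly_cost` is a hypothetical helper.

```python
from decimal import Decimal


def monthly_cost(cost_per_task: str, tasks_per_month: int) -> Decimal:
    """Project monthly spend; Decimal avoids float rounding on currency."""
    return Decimal(cost_per_task) * tasks_per_month


TASKS_PER_MONTH = 100_000  # assumed workload, not a figure from Scion

expensive = monthly_cost("0.15", TASKS_PER_MONTH)
cheap = monthly_cost("0.05", TASKS_PER_MONTH)

print(f"expensive framework: ${expensive}")
print(f"cheap framework:     ${cheap}")
print(f"monthly difference:  ${expensive - cheap}")
```

A three-fold difference per task stays three-fold at any volume, but the absolute gap grows linearly with usage, which is why it tends to surface only after a pilot moves to production traffic.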

For finance and crypto, where agents audit trades or scan chains, precision counts. Scion’s tool-use metrics flag risks like incorrect API calls, which could cascade into bad trades. Expect forks tailored for DeFi evals or security pentests. Investors should track adoption. If Scion hits 1,000 stars within a few months (plausible, given LangChain’s 80k+), it signals maturing agent infra, ripe for picks like Adept or multi-agent startups.

Broader impact: fragmentation kills ecosystems. Without benches, devs chase trends, not progress. Scion imposes discipline, potentially crowning winners like LangGraph while burying hype machines.

Skeptical Take: Experimental Means Watch Closely

It’s labeled “experimental” for a reason: alpha bugs lurk, and benchmark coverage skews toward open tools (no proprietary ones such as Vertex AI Agent Builder yet). Google authored it, so future integrations might nudge users toward GCP quotas or Gemini models. Early docs gloss over edge cases like long-context agents or adversarial inputs.

Competition looms: Hugging Face’s Evaluate library is eyeing agents, and Microsoft is eyeing benchmarks for AutoGen. Scion leads now, but its neutrality hinges on community governance. Fork it if Google drifts.

Bottom line: Grab Scion if you orchestrate agents. Run evals this week—save months of guesswork. In a field doubling yearly, tools like this separate viable paths from dead ends.