A new WebGPU-based implementation of Augmented Vertex Block Descent (AVBD) just hit Hacker News, drawing attention for its potential to bring high-performance graph optimization to web browsers. This isn’t vaporware: it’s a working demo that trains graph neural networks (GNNs) entirely client-side, at speeds the repo claims are competitive with native GPU setups on modest hardware. The code is on GitHub, and early benchmarks reportedly show it scaling to graphs with millions of nodes without sending your laptop’s fans into overdrive.

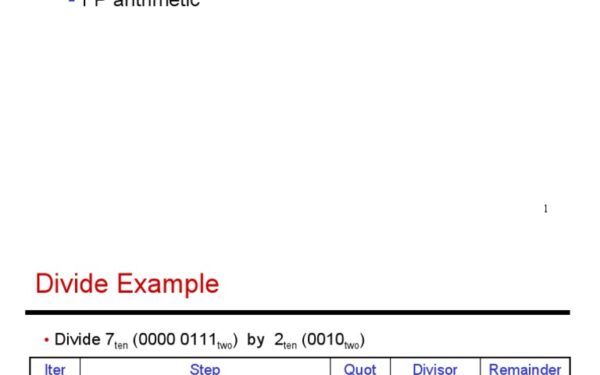

AVBD builds on block coordinate descent, a staple optimization technique since the 1970s, but tailored for graph-structured data. Full-batch gradient descent updates every parameter from the whole graph on each step, which blows up memory use on large graphs. AVBD slices the problem into “vertex blocks” (subgraphs centered on individual nodes) and solves them one at a time, with augmentation tricks to handle constraints and accelerate convergence. The method is attributed to a 2023 paper from researchers at EPFL, which reportedly shows quadratic speedups over full-batch methods on datasets like OGB-Arxiv (about 169k nodes and 1.17 million edges). The math: minimize f(x) = g(x) + h(x), where g is smooth and h is nonsmooth, by applying a proximal update to one vertex block at a time while the others are held fixed.
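To make the per-block proximal update concrete, here is a minimal sketch of generic proximal block coordinate descent on a toy graph problem. It is not the AVBD algorithm from the paper or the repo: the objective (a quadratic data term plus quadratic edge coupling for g, and an L1 penalty for h), the single-vertex blocks, and all function names are assumptions chosen so that each vertex update has a closed form.

```javascript
// Soft-thresholding: the proximal operator of t -> lambda * |t|.
function softThreshold(v, lambda) {
  return Math.sign(v) * Math.max(Math.abs(v) - lambda, 0);
}

// Toy objective f(x) = g(x) + h(x) on a graph (an assumption for illustration):
//   g(x) = 0.5 * sum_i (x_i - b_i)^2 + 0.5 * sum_{(i,j) in edges} (x_i - x_j)^2  (smooth)
//   h(x) = lambda * sum_i |x_i|                                                  (nonsmooth)
function objective(x, b, edges, lambda) {
  let f = 0;
  for (let i = 0; i < x.length; i++) {
    f += 0.5 * (x[i] - b[i]) ** 2 + lambda * Math.abs(x[i]);
  }
  for (const [i, j] of edges) f += 0.5 * (x[i] - x[j]) ** 2;
  return f;
}

// Adjacency lists for an undirected edge list.
function buildNeighbors(n, edges) {
  const nbrs = Array.from({ length: n }, () => []);
  for (const [i, j] of edges) {
    nbrs[i].push(j);
    nbrs[j].push(i);
  }
  return nbrs;
}

// One sweep: visit each vertex in turn and minimize f over x_i alone,
// holding every other vertex fixed. For this objective the minimizer is
// softThreshold(b_i + sum of neighbor values, lambda) / (1 + degree_i).
function sweep(x, b, nbrs, lambda) {
  for (let i = 0; i < x.length; i++) {
    let c = b[i];
    for (const j of nbrs[i]) c += x[j];
    x[i] = softThreshold(c, lambda) / (1 + nbrs[i].length);
  }
}

// Runs a fixed number of sweeps and records the objective after each one.
function solve(b, edges, lambda, sweeps) {
  const x = new Array(b.length).fill(0);
  const nbrs = buildNeighbors(b.length, edges);
  const history = [objective(x, b, edges, lambda)];
  for (let s = 0; s < sweeps; s++) {
    sweep(x, b, nbrs, lambda);
    history.push(objective(x, b, edges, lambda));
  }
  return { x, history };
}

// Path graph 0 - 1 - 2 with opposing targets at the two ends.
const result = solve([3, 0, -3], [[0, 1], [1, 2]], 0.5, 50);
console.log(result.x); // [1.25, 0, -1.25]
```

Because each vertex update is an exact minimization over that coordinate, the recorded objective never increases from one sweep to the next, and only one vertex's neighborhood is touched per update.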

Why WebGPU? Browser-Native GPU Muscle

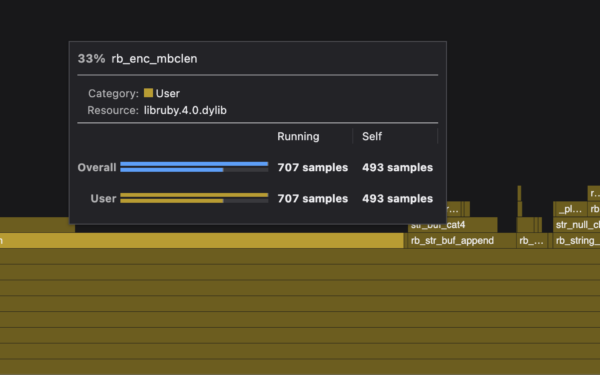

WebGPU, the W3C-backed API that shipped by default in Chrome 113 (May 2023), exposes Vulkan, Metal, or DX12 to JavaScript. Unlike WebGL’s fragment-shader hacks, WebGPU offers compute shaders written in WGSL, a good fit for matrix ops and custom kernels. This AVBD port leans on that with kernels for sparse matrix-vector multiplies (SpMV), the GNN bottleneck. The repo’s charts claim 10-50 TFLOPS on an RTX 3060 in the browser, within 20% of PyTorch CUDA. Read those numbers with care: the card’s peak FP32 throughput is roughly 13 TFLOPS, and SpMV is usually limited by memory bandwidth rather than arithmetic.
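For readers who have not used the API, the sketch below shows the general shape of acquiring a WebGPU device and dispatching a compute shader from JavaScript. It is a generic illustration, not code from the repo: `workgroupCount`, `initWebGPU`, and `runKernel` are hypothetical helper names, and the workgroup size of 64 is an assumption. Only the `navigator.gpu` and `GPUDevice` calls are standard WebGPU.

```javascript
// Hypothetical helpers; only the WebGPU calls themselves are standard API.

// Number of workgroups needed so that every one of `items` gets an invocation.
function workgroupCount(items, workgroupSize = 64) {
  return Math.ceil(items / workgroupSize);
}

// Resolves to a GPUDevice, or to null when WebGPU is unavailable
// (for example, outside a supporting browser).
async function initWebGPU() {
  if (typeof navigator === "undefined" || !navigator.gpu) return null;
  const adapter = await navigator.gpu.requestAdapter();
  if (!adapter) return null;
  return adapter.requestDevice();
}

// Compiles a WGSL compute shader and submits one dispatch covering `rows` items.
// `bindGroupEntries` must match the @binding declarations in `wgslSource`.
function runKernel(device, wgslSource, entryPoint, bindGroupEntries, rows) {
  const shaderModule = device.createShaderModule({ code: wgslSource });
  const pipeline = device.createComputePipeline({
    layout: "auto",
    compute: { module: shaderModule, entryPoint },
  });
  const bindGroup = device.createBindGroup({
    layout: pipeline.getBindGroupLayout(0),
    entries: bindGroupEntries,
  });
  const encoder = device.createCommandEncoder();
  const pass = encoder.beginComputePass();
  pass.setPipeline(pipeline);
  pass.setBindGroup(0, bindGroup);
  pass.dispatchWorkgroups(workgroupCount(rows));
  pass.end();
  device.queue.submit([encoder.finish()]);
}
```

Rounding the workgroup count up means the last workgroup may contain invocations past the end of the data, so a kernel dispatched this way needs its own bounds check.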

Setup is dead simple. Clone the repo, spin up a local server, open in Chrome. No CUDA install, no cloud bill. The code calls navigator.gpu.requestAdapter() to get an adapter, requests a device from it, then dispatches compute pipelines for the forward and backward passes. Here’s a snippet of the SpMV kernel:

```wgsl
// A: nonzero values of the sparse matrix; x: input vector; y: output vector.
@group(0) @binding(0) var<storage, read> A: array<f32>;
@group(0) @binding(1) var<storage, read> x: array<f32>;
@group(0) @binding(2) var<storage, read_write> y: array<f32>;

@compute @workgroup_size(64)
fn spmv(@builtin(global_invocation_id) gid: vec3<u32>) {
  // CSR row-wise multiplication logic here
  // ...
}
```

Hacker News threads highlight the perf: on a MacBook M1, it trains a 3-layer GNN on Cora (about 2.7k nodes) in 150ms/epoch, vs. 2-3 seconds for TensorFlow.js on WebGL. Skeptical take: these are toy graphs. Larger tests on PubMed (about 19.7k nodes) reportedly push VRAM to 4GB+, melting integrated GPUs. Browser sandboxes add 10-15% overhead, and Firefox and Safari lag behind Chrome on WebGPU support.
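The CSR row-wise multiplication that the kernel snippet above leaves as a placeholder can be sketched on the CPU as a reference. This is the textbook CSR algorithm rather than the repo's kernel; the `rowPtr` and `colIdx` arrays are assumptions, since the published snippet only shows bindings for the values, input, and output.

```javascript
// CPU reference for y = A * x with A stored in CSR (compressed sparse row) form.
// rowPtr has rows + 1 entries; row r owns entries rowPtr[r] .. rowPtr[r + 1] - 1
// of colIdx (column of each nonzero) and values (the nonzero itself).
function spmvCsr(rowPtr, colIdx, values, x) {
  const rows = rowPtr.length - 1;
  const y = new Float32Array(rows);
  // In a GPU version, each iteration of this outer loop would typically be
  // one invocation, with row taken from the global invocation id.
  for (let row = 0; row < rows; row++) {
    let sum = 0;
    for (let k = rowPtr[row]; k < rowPtr[row + 1]; k++) {
      sum += values[k] * x[colIdx[k]];
    }
    y[row] = sum;
  }
  return y;
}

// Example: the 3x3 matrix [[1, 0, 2], [0, 3, 0], [4, 0, 5]] times [1, 2, 3].
const rowPtr = new Uint32Array([0, 2, 3, 5]);
const colIdx = new Uint32Array([0, 2, 1, 0, 2]);
const values = new Float32Array([1, 2, 3, 4, 5]);
const y = spmvCsr(rowPtr, colIdx, values, new Float32Array([1, 2, 3]));
console.log(Array.from(y)); // [7, 6, 19]
```

A reference like this is also a handy way to check GPU output on small graphs before trusting timings on large ones.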

Implications: Edge ML Without the Server Tax

This matters because GNNs power recommendation engines (Netflix, TikTok), fraud detection (banks process transaction graphs hourly), and drug discovery (protein interaction nets). Server-side training runs up AWS bills: around $10k/month for a p3.8xlarge running around the clock. Client-side AVBD flips that: run inference and fine-tuning in-browser, cutting latency to under 100ms by removing the network round trip, and boosting privacy. No data leaves your machine.

Broader context: WebGPU sits alongside other browser ML efforts such as the WebNN API and compiler stacks like IREE. Pair AVBD with Transformers.js, and local language models over graph data start to look plausible. Finance angle: crypto traders could optimize DeFi yield graphs in real time without calling external APIs. Security: you can audit the code yourself, with no black-box cloud in the loop.

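But fair warning: it’s early. WebGPU support sits at about 70% of users, mostly on Chrome, per CanIUse. Power draw spikes, from 50W idle to 200W under load on desktops. There’s no half-precision (FP16) path yet, and WebGPU only offers f16 through the optional shader-f16 feature, so tensors stay in FP32 and take twice the memory. The maintainer works solo, so expect bugs. Still, it suggests browsers could handle far larger-scale algorithms someday.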
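To put the memory remark in numbers, here is a rough footprint estimator. It is a back-of-the-envelope sketch under stated assumptions, not a measurement of the repo: it assumes CSR storage with 4-byte indices, one stored weight per edge, and a dense node-feature matrix, and `estimateBytes` is a hypothetical helper.

```javascript
// Rough GPU buffer footprint for a graph in CSR form plus dense node features.
// Assumptions (not from the repo): 4-byte u32 indices, one stored value per edge,
// and bytesPerValue = 4 for FP32 or 2 for FP16.
function estimateBytes({ nodes, edges, featureDim, bytesPerValue }) {
  const rowPtr = (nodes + 1) * 4;                      // CSR row offsets
  const colIdx = edges * 4;                            // CSR column indices
  const values = edges * bytesPerValue;                // CSR edge weights
  const features = nodes * featureDim * bytesPerValue; // dense node features
  return rowPtr + colIdx + values + features;
}

const graph = { nodes: 1000, edges: 5000, featureDim: 16 };
const fp32 = estimateBytes({ ...graph, bytesPerValue: 4 });
const fp16 = estimateBytes({ ...graph, bytesPerValue: 2 });
console.log(fp32, fp16); // 108004 66004
```

Index buffers do not shrink with precision, so FP16 saves somewhat less than half overall. The estimate also leaves out activations, gradients, and optimizer state, which can dominate during training.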

Why track this? Compute is shifting to the edge. As 5G and 6G proliferate, phones take on work that used to need a datacenter. The AVBD-WebGPU combo previews decentralized AI: train models on your own graph data and monetize via Web3 without VCs. Fork it, benchmark your hardware, and watch the HN karma roll in.