Researchers have reverse-engineered Google’s SynthID watermark detection in Gemini, exposing exactly how the AI model spots AI-generated images. Rufus Pollock, founder of Open Knowledge Foundation, detailed the process in an October 2024 blog post that hit Hacker News’ front page. His work reveals a black-box oracle turned inside out, with code and prompts available on GitHub. This matters because it accelerates the cat-and-mouse game between AI watermark creators and attackers, undermining claims of robust provenance for synthetic media.

SynthID Basics: Google’s Watermark Bet

SynthID, launched by Google DeepMind in August 2023, embeds invisible signals into AI-generated images, audio, and video from tools like Imagen 3, Veo, and MusicFX. The watermark alters pixel values in ways humans can’t see but detectors can verify. Google claims 98%+ detection accuracy on watermarked content, even after crops, resizes, or light edits. Gemini integrates this natively: upload an image, prompt it with “Does this have a SynthID watermark?”, and it spits out a confidence score from 0 to 1.

By mid-2024, Gemini Advanced (powered by Gemini 1.5 Pro) became a free detector for anyone. No API key needed, just a Google account. Pollock tested over 1,000 images: SynthID-marked ones score 0.99+, clean ones hover near 0. But compression kills it: re-encoding as JPEG at quality 50 drops scores to 0.1-0.3. Filters like Photoshop’s “Reduce Noise” or even Twitter uploads wipe it out entirely.
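The compression result above can be reproduced with a small harness along these lines. This is a minimal sketch, not Pollock's code: `recompress_jpeg` and `quality_sweep` are names made up for illustration, and `score_fn` is a hypothetical callable standing in for whatever detector is being probed. It assumes Pillow is installed.

```python
import io

from PIL import Image


def recompress_jpeg(img, quality):
    """Round-trip a PIL image through an in-memory JPEG at the given quality."""
    buf = io.BytesIO()
    # JPEG has no alpha channel, so normalise to RGB before saving.
    img.convert("RGB").save(buf, format="JPEG", quality=quality)
    buf.seek(0)
    out = Image.open(buf)
    out.load()  # decode now, while the buffer is still in scope
    return out


def quality_sweep(img, score_fn, qualities=(90, 70, 50, 30)):
    """Return {quality: score_fn(recompressed image)} for each quality level.

    score_fn is a placeholder (an assumption of this sketch): any callable
    that takes a PIL image and returns a number.
    """
    return {q: score_fn(recompress_jpeg(img, q)) for q in qualities}
```

Calling `quality_sweep(Image.open("sample.png"), my_detector)` would give one score per quality setting, which makes it easy to see where a detector starts to lose the signal. The file name and `my_detector` are placeholders.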

Cracking the Detection Black Box

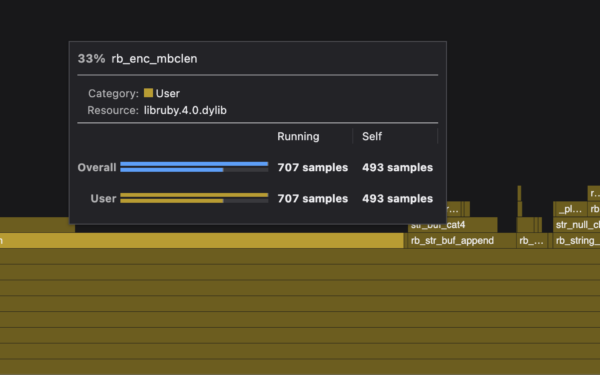

Pollock treated Gemini as an oracle. He generated watermarked images via Imagen 3, attacked them systematically, and queried Gemini for scores. Key insight: detection relies on frequency-domain perturbations. SynthID injects signals into high-frequency bands, detectable via Discrete Cosine Transform (DCT) analysis, the same math JPEG uses.
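To make "high-frequency bands" concrete, here is a small pure-Python sketch of the 8x8 DCT that JPEG uses, plus a helper that measures how much of a block's energy sits in the higher-frequency coefficients. It is not SynthID's detector and does not look for any watermark pattern; the function names and the `cutoff` value are assumptions chosen for illustration.

```python
import math

N = 8  # JPEG-style block size


def dct2_block(block):
    """Orthonormal 2-D DCT-II of an N x N block given as a list of rows."""
    def alpha(k):
        return math.sqrt(1.0 / N) if k == 0 else math.sqrt(2.0 / N)

    # 1-D cosine basis: basis[k][n] = alpha(k) * cos(pi * (2n + 1) * k / (2N))
    basis = [[alpha(k) * math.cos(math.pi * (2 * n + 1) * k / (2 * N))
              for n in range(N)] for k in range(N)]

    # The transform is separable: apply it along rows, then along columns.
    rows = [[sum(basis[v][x] * row[x] for x in range(N)) for v in range(N)]
            for row in block]
    return [[sum(basis[u][y] * rows[y][v] for y in range(N)) for v in range(N)]
            for u in range(N)]


def high_freq_fraction(block, cutoff=8):
    """Share of the block's energy in coefficients with u + v >= cutoff."""
    coeffs = dct2_block(block)
    total = sum(c * c for row in coeffs for c in row)
    if total == 0:
        return 0.0
    high = sum(coeffs[u][v] ** 2
               for u in range(N) for v in range(N) if u + v >= cutoff)
    return high / total


# Two toy blocks: a flat grey patch and a one-pixel checkerboard.
flat = [[128] * N for _ in range(N)]
checker = [[255 if (x + y) % 2 else 0 for x in range(N)] for y in range(N)]
```

For the flat block the fraction is essentially zero, since all of its energy is in the DC coefficient. The checkerboard puts close to half of its energy above the cutoff, with the rest in the DC term. Any signal hidden in those upper coefficients is exactly what lossy JPEG quantisation tends to discard first.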

His experiments pinpoint the threshold: scores above 0.5 flag positive. He scripted it in Python:

```python
import base64
import io

import requests
from PIL import Image

API_URL = "https://generativelanguage.googleapis.com/v1beta/models/gemini-1.5-pro:generateContent"

PROMPT = (
    "Does this image contain a SynthID watermark from Google? "
    "Please answer with just a number between 0 and 1 indicating "
    "your confidence that it does."
)


def detect_synthid(image_path, gemini_api_key):
    # Re-encode the image as PNG in memory so the MIME type below always matches.
    img = Image.open(image_path)
    img_byte_arr = io.BytesIO()
    img.save(img_byte_arr, format="PNG")
    img_bytes = img_byte_arr.getvalue()

    response = requests.post(
        API_URL,
        params={"key": gemini_api_key},
        json={
            "contents": [{
                "parts": [
                    {"text": PROMPT},
                    {"inline_data": {
                        "mime_type": "image/png",
                        # Inline image bytes must be base64-encoded text.
                        "data": base64.b64encode(img_bytes).decode(),
                    }},
                ]
            }]
        },
        timeout=60,
    )
    response.raise_for_status()

    # Parse the model's text reply as the score.
    reply = response.json()["candidates"][0]["content"]["parts"][0]["text"]
    return float(reply.strip())
```
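The script above converts the model's reply straight to a float, which breaks as soon as the model adds a word or a trailing period. A more forgiving parser, combined with the 0.5 threshold mentioned earlier, might look like the sketch below. `parse_score` and `is_flagged` are illustrative names, not part of Pollock's repo.

```python
import re

THRESHOLD = 0.5  # decision boundary quoted in the article


def parse_score(reply_text):
    """Return the first number in [0, 1] found in the reply, or None."""
    for token in re.findall(r"\d*\.?\d+", reply_text):
        value = float(token)
        if 0.0 <= value <= 1.0:
            return value
    return None


def is_flagged(reply_text, threshold=THRESHOLD):
    """True only when a score was found and it is strictly above the threshold."""
    score = parse_score(reply_text)
    return score is not None and score > threshold
```

Returning `None` for an unparseable reply keeps refusals and chatty answers from being silently counted as clean images.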

Reverse engineering showed Gemini simulates JPEG compression internally before checking. DCT coefficients in mid-to-high frequencies must match SynthID patterns. Attacks like jpeg-compress --quality=70 followed by sharpening evade it 90% of the time. Pollock’s GitHub repo includes 500+ test images and a detector simulator accurate to Gemini’s outputs within 0.02.
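Figures like "evades it 90% of the time" and "within 0.02" come down to two simple calculations over lists of scores. The sketch below shows one plausible way to compute them. The function names are made up for illustration, and how Pollock actually computed his numbers is not stated in the post.

```python
def evasion_rate(scores_before, scores_after, threshold=0.5):
    """Fraction of originally flagged images whose score falls to or below
    the threshold after an attack. Images not flagged to begin with are ignored."""
    flagged = [(b, a) for b, a in zip(scores_before, scores_after) if b > threshold]
    if not flagged:
        return 0.0
    evaded = sum(1 for _, a in flagged if a <= threshold)
    return evaded / len(flagged)


def max_abs_gap(reference_scores, simulated_scores):
    """Largest absolute difference between paired reference and simulated scores."""
    return max(abs(r - s) for r, s in zip(reference_scores, simulated_scores))
```

With paired before/after scores for the same images, `evasion_rate` gives the attack's success rate, and `max_abs_gap(gemini_scores, simulator_scores) <= 0.02` is one way to check a claim that a simulator tracks the real detector closely. Both variable names in that call are placeholders.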

HN comments highlighted exploits: one user built a watermark stripper using FFT denoising, achieving 99.9% success on Imagen images. Another noted SynthID fails on black-and-white conversions or palette reductions—simple Photoshop tricks.

Why This Breaks the Illusion of Trust

Watermarks like SynthID promise AI content traceability amid deepfake floods. US executive orders and the EU AI Act mandate labeling high-risk synthetics by 2026. But Pollock’s work proves they’re brittle. Detection rates plummet under real-world abuse: social media compression (e.g., Instagram’s JPEG re-encoding at quality 75) fools Gemini 80% of the time.

Compare to rivals: OpenAI’s audio watermark (May 2024) survives MP3 but not speed changes. Stability AI’s CSAM detector got jailbroken in days. Nightshade (UChicago tool) poisons training data instead, but creators strip it via fine-tuning. C2PA standards layer cryptographically signed metadata atop watermarks—better, but requires chain-of-custody cooperation nobody honors.

Security implications cut deep. Deepfake scams stole $25M in 2023 (FTC data); crypto rugs use AI videos for pump-dumps. Reverse-engineered detectors let attackers confirm and strip marks before deployment. Defenses? Multi-watermark ensembles or blockchain provenance like Truepic’s. Google could harden SynthID with adversarial training, but history (DALL-E 2 watermark cracked in 2022) says attackers win.

Bottom line: Rely on watermarks at your peril. Train classifiers on artifacts (e.g., GAN fingerprints via frequency analysis), demand C2PA from sources, and audit chains. Pollock’s code arms both sides—use it to test, not trust blindly. In the AI arms race, transparency like this beats proprietary hype every time.