NVIDIA just dropped Isaac GR00T N1.7 in early access: a 3-billion-parameter vision-language-action (VLA) model for humanoid robots. It’s open source with commercial licensing and live on Hugging Face and GitHub. The hook? It trains on over 20,000 hours of human egocentric video instead of pricey robot teleoperation data. NVIDIA claims this scales dexterity predictably, and it targets factory tasks like material handling, packaging, and inspection.

Why lead with this? Humanoid robots remain bottlenecked by data. Teleoperating robots costs thousands of dollars per session, and N1.6 needed a few thousand hours of it. GR00T N1.7 flips the approach: the EgoScale dataset packs 20,854 hours of human wrist-cam and egocentric video across 20+ categories, from manufacturing to healthcare. Humans manipulate objects with two hands from a first-person view, mirroring bimanual robot embodiments. NVIDIA says this delivers “manipulation priors” without requiring robot hardware upfront, and it has coined a “dexterity scaling law”: more human data yields better finger-level control for fragile parts or assembly.

Core Architecture and Capabilities

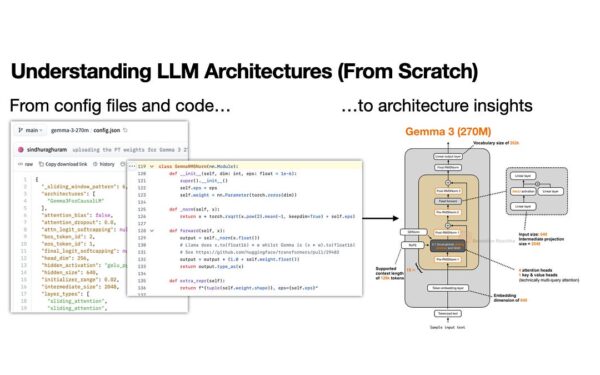

GR00T N1.7 uses an Action Cascade that splits reasoning from execution. System 2, a 2B-parameter Cosmos-Reason2 VLM, ingests RGB images (any resolution), language instructions, and robot state (joints, velocities, end-effector poses). It emits high-level action tokens with task decomposition for multi-step workflows, breaking a job into subtasks it can plan reliably.
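
To make System 2's inputs concrete, here is a minimal Python sketch of one observation step, assuming NumPy arrays. The class names `RobotState` and `Observation`, their fields, and the 7-value end-effector pose layout (xyz plus quaternion) are hypothetical illustrations, not GR00T N1.7's actual input schema; the real data configuration lives in NVIDIA's GitHub repo.

```python
from dataclasses import dataclass

import numpy as np


@dataclass
class RobotState:
    """Hypothetical proprioceptive state; field names are illustrative only."""
    joint_positions: np.ndarray   # shape (n_joints,)
    joint_velocities: np.ndarray  # shape (n_joints,)
    ee_pose: np.ndarray           # shape (7,): xyz + quaternion (assumed layout)

    def as_vector(self) -> np.ndarray:
        """Concatenate state in a fixed order so the model always sees one layout."""
        if self.joint_positions.shape != self.joint_velocities.shape:
            raise ValueError("positions and velocities must cover the same joints")
        if self.ee_pose.shape != (7,):
            raise ValueError("ee_pose must hold 7 values (xyz + quaternion)")
        return np.concatenate([self.joint_positions, self.joint_velocities, self.ee_pose])


@dataclass
class Observation:
    """One hypothetical System 2 input: camera frame, instruction, robot state."""
    rgb: np.ndarray  # (H, W, 3) uint8 frame; any H and W
    instruction: str
    state: RobotState

    def __post_init__(self):
        if self.rgb.ndim != 3 or self.rgb.shape[2] != 3:
            raise ValueError("rgb must be an (H, W, 3) array")


obs = Observation(
    rgb=np.zeros((480, 640, 3), dtype=np.uint8),
    instruction="pick up the box and place it on the conveyor",
    state=RobotState(
        joint_positions=np.zeros(7),
        joint_velocities=np.zeros(7),
        ee_pose=np.array([0.4, 0.0, 0.3, 0.0, 0.0, 0.0, 1.0]),
    ),
)
print(obs.state.as_vector().shape)  # (21,)
```

Validating shapes at construction time catches layout mismatches before they reach the model, where they would otherwise surface as silent bad actions.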

System 1, a 32-layer Diffusion Transformer (DiT), takes those tokens plus live proprioception as conditioning and denoises random noise into precise, continuous motor commands that map directly to the robot's degrees of freedom. NVIDIA tested it on the Unitree G1 for loco-manipulation, the YAM bimanual platform for tabletop tasks, and the AGIBot Genie 1 for dexterous two-hand work. It also supports the LeRobot dataset format, which eases integration with existing data pipelines.
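
The denoising step can be illustrated with a toy, generic DDIM-style sampler in NumPy. This is a sketch under loud assumptions: the horizon, DoF count, step count, and noise schedule are made up, and `predict_noise` is a stand-in oracle that returns the exact noise relative to a fixed linear target, whereas the real 32-layer DiT learns an estimate from data. GR00T N1.7's actual sampler and action parameterization may differ.

```python
import numpy as np

# Made-up sizes for illustration; real action horizons and DoF vary by robot.
HORIZON, DOF, STEPS = 16, 7, 10


def stand_in_target(tokens: np.ndarray, proprio: np.ndarray) -> np.ndarray:
    """Placeholder for what a trained DiT implicitly learns: a fixed linear map
    from the conditioning (action tokens + proprioception) to a clean action chunk."""
    cond = np.concatenate([tokens, proprio])
    weights = np.random.default_rng(0).standard_normal((HORIZON * DOF, cond.size)) * 0.1
    return (weights @ cond).reshape(HORIZON, DOF)


def predict_noise(x_t, alpha_bar_t, tokens, proprio):
    """Oracle noise predictor; a real DiT would only estimate this quantity."""
    x0 = stand_in_target(tokens, proprio)
    return (x_t - np.sqrt(alpha_bar_t) * x0) / np.sqrt(1.0 - alpha_bar_t)


def sample_actions(tokens, proprio, seed=0):
    """Deterministic DDIM-style sampling: start from noise, denoise step by step."""
    rng = np.random.default_rng(seed)
    # Signal level alpha_bar rises from 0.01 (almost pure noise) to 1.0 (clean).
    alpha_bars = np.linspace(0.01, 1.0, STEPS + 1)
    x = rng.standard_normal((HORIZON, DOF))
    for i in range(STEPS):
        a_t, a_next = alpha_bars[i], alpha_bars[i + 1]
        eps = predict_noise(x, a_t, tokens, proprio)
        x0_hat = (x - np.sqrt(1.0 - a_t) * eps) / np.sqrt(a_t)      # current clean estimate
        x = np.sqrt(a_next) * x0_hat + np.sqrt(1.0 - a_next) * eps  # step to less noise
    return x  # (HORIZON, DOF): one row of joint commands per control step


tokens = np.ones(32)    # hypothetical System 2 action tokens, flattened
proprio = np.zeros(21)  # hypothetical proprioceptive state vector
actions = sample_actions(tokens, proprio)
print(actions.shape)  # (16, 7)
```

Because the oracle is exact, the loop recovers the stand-in target from any starting noise. With a learned network each step only approximates the noise, which is why action quality depends on training data and model capacity.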

Skeptical check: videos look smooth in demos, but real factories throw curves such as variable lighting, occlusions, and wear. NVIDIA validates on specific hardware, but what about cross-robot transfer? Human-to-robot gaps persist: our fingers aren’t robotic grippers. Still, commercial licensing green-lights production deployments now, unlike pure research models.

Training Shift and Scaling Evidence

Egocentric video pretraining beats teleoperation on scale. Prior models relied on thousands of hours of robot data; GR00T N1.7 ingests 20k+ hours of human video cheaply. Sensorized data (hand tracking, wrist cams) teaches contact-rich skills such as pinching small parts and handling breakables. NVIDIA plots a scaling law in which dexterity metrics climb linearly with data volume, with no teleop needed.

This matters for economics. Teleop runs $10k+ per robot-month, while human video can be scraped from existing datasets or recorded cheaply. Open weights accelerate iteration: adapt the model to your humanoid and fine-tune it on proprietary data. The GitHub repo includes training scripts, and Hugging Face hosts the model.
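
The $10k-per-robot-month figure becomes a rough data budget once you assume how many usable teleop hours one robot yields per month. The sketch below assumes 160 hours (one 8-hour shift on 20 working days); that throughput and the function name are hypothetical, and because the rate is quoted as "$10k+", the results are lower bounds.

```python
def teleop_cost_usd(target_hours, usd_per_robot_month=10_000, hours_per_robot_month=160):
    """Rough teleoperation budget: robot-months needed times the monthly rate.

    usd_per_robot_month comes from the article ("$10k+"), so results are lower
    bounds; hours_per_robot_month=160 (8 h/day, 20 days) is a hypothetical
    throughput assumption.
    """
    if target_hours < 0 or hours_per_robot_month <= 0:
        raise ValueError("hours must be non-negative and throughput positive")
    robot_months = target_hours / hours_per_robot_month
    return robot_months * usd_per_robot_month


print(teleop_cost_usd(3_000))          # 187500.0: "a few thousand hours"
print(round(teleop_cost_usd(20_854)))  # 1303375: EgoScale-sized corpus via teleop
```

Doubling the assumed throughput halves the budget, so the real number hinges on how efficiently your teleop setup produces usable episodes.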

Implications for Robotics and Markets

Humanoids are headed for factories soon: Figure’s 01, Tesla’s Optimus Gen 2, and Apptronik’s Apollo target 2025 pilots. Dexterity lags locomotion, and GR00T N1.7 aims to close that gap with finger-level control and reasoning. Factories could save on labor: a humanoid costing $50k/year can compete with human wages if uptime hits 80%.
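
The uptime condition is easiest to reason about as cost per productive hour. This sketch takes the article's $50k/year and 80% uptime and assumes round-the-clock scheduling (8,760 hours/year) unless told otherwise; the scheduling assumption and the function name are illustrative. Compare the result with the fully loaded hourly labor cost at your site, which the article does not specify.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 h: assumes round-the-clock scheduling


def cost_per_productive_hour(annual_cost_usd=50_000, uptime=0.80, scheduled_hours=HOURS_PER_YEAR):
    """Annual robot cost spread over the hours it actually works."""
    if not 0 < uptime <= 1:
        raise ValueError("uptime must be in (0, 1]")
    if scheduled_hours <= 0:
        raise ValueError("scheduled_hours must be positive")
    return annual_cost_usd / (scheduled_hours * uptime)


print(round(cost_per_productive_hour(), 2))                       # 7.13 (24/7)
print(round(cost_per_productive_hour(scheduled_hours=2_000), 2))  # 31.25 (one shift)
```

The gap between the two results shows that the economics depend as much on how many shifts the robot runs as on its uptime.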

But skepticism is fair: human data transfers imperfectly, since robots lack our haptics and compliance. The scaling law holds in simulation, yet sim-to-real gaps kill 50% of policies in the real world. NVIDIA’s Isaac ecosystem (Project GR00T, Omniverse) locks in users, but open source invites forks. Watch adoption: if N1.7 doubles success rates on multi-step tasks, it could slash development timelines from years to months.
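
A 2x gain on multi-step tasks is less dramatic at the per-step level than it sounds, because end-to-end success compounds. Assuming independent steps (a simplification), a task with n steps that each succeed with probability p completes with probability p^n. The 5-step, 80% example below is hypothetical, as are the function names.

```python
def chain_success(per_step: float, n_steps: int) -> float:
    """End-to-end success when every step must succeed (steps assumed independent)."""
    return per_step ** n_steps


def per_step_needed(target: float, n_steps: int) -> float:
    """Per-step success rate required to reach a target end-to-end rate."""
    if not 0 < target <= 1:
        raise ValueError("target end-to-end success must be in (0, 1]")
    return target ** (1.0 / n_steps)


base = chain_success(0.80, 5)          # hypothetical 5-step task at 80% per step
needed = per_step_needed(2 * base, 5)  # per-step rate that doubles end-to-end success
print(round(base, 3), round(needed, 3))  # 0.328 0.919
```

Under these assumptions, lifting per-step reliability from 80% to about 92% doubles end-to-end success, and doubling becomes impossible once the baseline exceeds 50%.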

Bottom line: GR00T N1.7 democratizes humanoid smarts. Grab it and test it on your robot; it could help move robotics from labs to production lines. Watch N1.8 for teleop hybrids or larger scales.