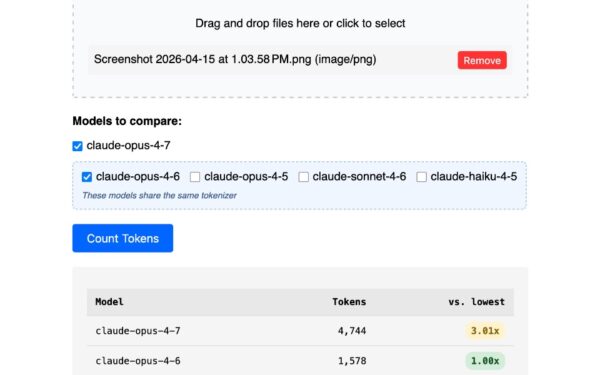

NVIDIA just dropped Nemotron OCR v2, a multilingual OCR model that posts Normalized Edit Distance (NED) scores of 0.035–0.069 on non-English languages, where lower is better. They trained it on 12 million synthetic images across six languages, and it runs at 34.7 pages per second on a single A100 GPU. The dataset and model are public on Hugging Face as nvidia/OCR-Synthetic-Multilingual-v1 and nvidia/nemotron-ocr-v2, and you can try the model in your browser via their demo. The synthetic approach sidesteps the usual OCR data headaches: small benchmarks, costly annotations, noisy web scrapes.
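If NED is new to you, here is a minimal sketch of the metric. It assumes the common definition, Levenshtein distance divided by the length of the longer string, so 0 is a perfect match and 1 is a total miss. NVIDIA's exact normalization may differ, and the function names are illustrative.

```python
def levenshtein(a: str, b: str) -> int:
    """Minimum number of single-character insertions, deletions, or substitutions."""
    if len(a) < len(b):
        a, b = b, a  # keep the inner row as short as possible
    previous = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        current = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            current.append(min(
                previous[j] + 1,         # delete ca
                current[j - 1] + 1,      # insert cb
                previous[j - 1] + cost,  # substitute, or match for free
            ))
        previous = current
    return previous[-1]


def normalized_edit_distance(prediction: str, reference: str) -> float:
    """Edit distance scaled to [0, 1] by the longer string (assumed definition)."""
    longest = max(len(prediction), len(reference))
    if longest == 0:
        return 0.0  # two empty strings match perfectly
    return levenshtein(prediction, reference) / longest
```

Read this way, a score of 0.05 means roughly one character in twenty needs fixing.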

The OCR Data Trap

High-quality OCR demands millions of images with precise bounding boxes, transcriptions, and reading order at word, line, and paragraph levels. Benchmarks like ICDAR or Total-Text top out at tens of thousands of images, mostly English or Chinese. Manual labeling is accurate but slow and expensive at scale: think $0.10–$1 per image, which for a 12-million-image training set works out to $1.2–$12 million.

Web-scraped PDFs promise volume but deliver garbage: text stored as stroke fragments, text baked into images, or botched scans with flaky OCR layers. Filtering helps, but noise lingers. Nemotron OCR v1 nailed English on their SynthDoG benchmark but bombed multilingual tasks, exposing the gap. Architecture tweaks alone won’t fix a lack of data diversity.

Synthetic Data Breaks the Cycle

Synthetic generation renders text onto images programmatically. You control everything: layouts, fonts, colors, backgrounds, distortions. Labels are flawless because the system knows exactly what it drew—bounding boxes, sequences, hierarchies intact. Scale to billions if your compute holds.
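To make the "labels for free" point concrete, here is a toy sketch of the label side of a generator. It is not NVIDIA's pipeline: it assumes a fixed-width glyph grid instead of real font metrics, draws no pixels, and every name and default value in it is hypothetical. The point is only that the code placing the text already knows each box and the reading order.

```python
from dataclasses import dataclass


@dataclass
class Box:
    text: str
    x: int
    y: int
    width: int
    height: int
    order: int  # reading-order index within its level (word or line)


def layout_lines(lines, glyph_w=10, glyph_h=18, margin=20, line_gap=6):
    """Place words on a page and return (word_boxes, line_boxes).

    Assumes every glyph is glyph_w x glyph_h pixels; a real renderer
    would measure each glyph from the chosen font instead.
    """
    word_boxes, line_boxes = [], []
    word_order = 0
    y = margin
    for line_idx, line in enumerate(lines):
        x = margin
        words = line.split()
        for word in words:
            width = len(word) * glyph_w
            word_boxes.append(Box(word, x, y, width, glyph_h, word_order))
            word_order += 1
            x += width + glyph_w  # advance past the word plus one space
        if words:
            line_width = x - glyph_w - margin  # drop the trailing space
            line_boxes.append(
                Box(" ".join(words), margin, y, line_width, glyph_h, line_idx)
            )
        y += glyph_h + line_gap
    return word_boxes, line_boxes
```

Calling `layout_lines(["hello world", "ocr"])` yields three word boxes and two line boxes, each with exact coordinates, with no annotator involved.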

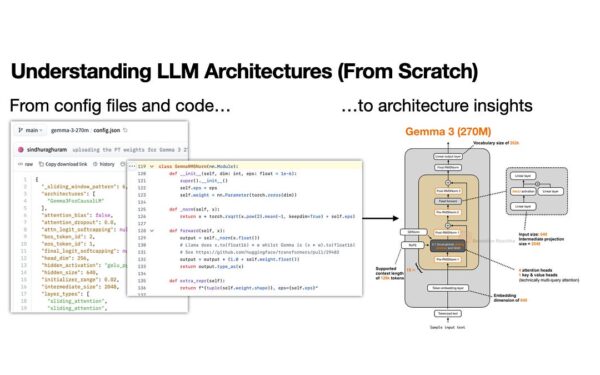

The realism hurdle? Tricky, but NVIDIA’s pipeline randomizes fonts (thousands available per language), layouts (paragraphs, tables, simulated handwriting), augmentations (blur, noise, perspective), and backgrounds (scans, photos). On the model side, a shared detection backbone feeds both the recognizer and the relational model, cutting redundant compute and buying speed.
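The randomization described above can be sketched as sampling a "recipe" per image. This is an illustration only: the category names come from the text, but the font names, parameter names, and value ranges are made up for the example and are not taken from NVIDIA's pipeline.

```python
import random

# Hypothetical option pools; a real pipeline would load thousands of fonts.
FONTS = ["serif-regular", "sans-bold", "mono-light"]
LAYOUTS = ["paragraph", "table", "handwriting"]
BACKGROUNDS = ["scan", "photo"]


def sample_recipe(rng: random.Random) -> dict:
    """Draw one random rendering recipe (all ranges are assumptions)."""
    return {
        "font": rng.choice(FONTS),
        "layout": rng.choice(LAYOUTS),
        "background": rng.choice(BACKGROUNDS),
        "blur_sigma": round(rng.uniform(0.0, 2.0), 2),
        "noise_std": round(rng.uniform(0.0, 0.1), 3),
        "perspective_deg": round(rng.uniform(-8.0, 8.0), 1),
    }


# A seeded generator keeps the dataset reproducible.
rng = random.Random(0)
recipes = [sample_recipe(rng) for _ in range(1000)]
```

Passing in a seeded `random.Random` rather than using the global generator means the same seed regenerates the same dataset, which helps when you need to debug one bad sample.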

This isn’t hype: training on 12 million synthetic images dropped NED on non-English languages from 0.56–0.92 to 0.035–0.069. The pipeline works for any language that has fonts and a text corpus, no scraping required.

Performance and Proof

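Speed: 34.7 pages/second on a single A100 beats many rivals. The architecture reuses detection features across the recognizer and relational model, which matters wherever compute is tight, edge deployment included. Accuracy holds on SynthDoG, their synthetic document benchmark built to mimic real-world chaos.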
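To put the throughput figure in batch terms, here is a back-of-envelope sketch. It assumes the quoted 34.7 pages/second is sustained on one GPU with no I/O or preprocessing stalls, which real jobs rarely manage; the function names are illustrative.

```python
PAGES_PER_SECOND = 34.7  # figure quoted above for a single A100


def pages_per_hour(rate: float = PAGES_PER_SECOND) -> float:
    """Pages processed in one hour at a sustained rate."""
    return rate * 3600


def hours_to_process(pages: int, rate: float = PAGES_PER_SECOND) -> float:
    """Wall-clock hours to process a page count at a sustained rate."""
    return pages / rate / 3600
```

At that rate a single GPU handles roughly 125,000 pages per hour, so a million-page archive takes about 8 hours.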

Skeptical check: a model trained on synthetic data can overfit to rendering artifacts and then fail on messy real-world documents. But the public assets let you test that: run your own PDFs through the demo, or fine-tune. v1’s multilingual flop underscores that data matters more than architecture. Here, data volume and quality align.
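One way to run that check yourself, sketched under assumptions: score a batch of your own hand-verified pages and a batch of synthetic pages, then compare the means. The helper below is hypothetical and expects per-page NED scores you have already computed; it says nothing about what gap counts as acceptable.

```python
from statistics import mean


def generalization_gap(synthetic_ned, real_ned):
    """Mean NED on real pages minus mean NED on synthetic pages.

    A positive result means the model does worse on real documents
    than on synthetic ones.
    """
    if not synthetic_ned or not real_ned:
        raise ValueError("both score lists must be non-empty")
    return mean(real_ned) - mean(synthetic_ned)
```

A gap near zero suggests the synthetic distribution covers your documents reasonably well; a large positive gap is the overfitting signal to look for.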

Why This Shifts the Game

Multilingual OCR unlocks global digitization: contracts, IDs, archives in Hindi, Arabic, Thai. Traditional data paths lock out all but the big players. Synthetic data levels the field: generate datasets overnight on consumer GPUs if you script it right.

Implications run deep. Enterprises can automate document workflows more cheaply; researchers can benchmark without annotation farms. Security angle: better OCR aids forensics and compliance scanning. NVIDIA’s open release invites scrutiny and iteration, so fork it, beat it.

Tradeoff? Upfront pipeline build. But once tuned, it outpaces humans or scrapers. If it generalizes as claimed, expect synthetic data to dominate vision tasks beyond OCR. Verify yourself; the bits are free.