OpenAI launched GPT-5.4-Cyber today, a fine-tuned variant of its upcoming GPT-5.4 model optimized for defensive cybersecurity tasks. The company calls the model “cyber-permissive,” meaning it relaxes the safety restrictions that typically block discussion of exploits, vulnerabilities, and attack techniques. The move responds to rivals such as Anthropic’s Claude models, which have gained traction in security circles for handling sensitive topics.

The timing aligns with OpenAI’s roadmap for more powerful models in the coming months, and with a worsening threat landscape. IBM’s 2023 Cost of a Data Breach report pegs the average breach cost at $4.45 million, up 15% from 2020. Ransomware hit 66% of organizations last year, according to Sophos, and nation-states such as China and Russia are ramping up espionage. Defenders also face AI-augmented attackers who automate phishing and zero-day hunting. OpenAI positions GPT-5.4-Cyber as a counterweight that enables tasks such as malware analysis, threat intelligence synthesis, and incident response automation.

Trusted Access Program Expansion

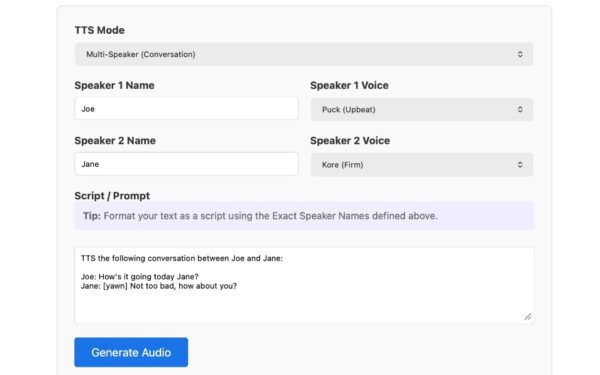

Alongside the model, OpenAI is expanding its Trusted Access program, which it quietly started in February. The initiative grants vetted organizations, including managed security service providers (MSSPs), government agencies, and Fortune 500 security teams, API access under strict controls. Participants sign NDAs, undergo audits, and limit usage to defensive scenarios. Early adopters report 40% faster threat triage, according to leaked benchmarks from a beta tester; the figure is unverified but plausible given GPT-4’s performance on CTF challenges.

Access isn’t free. Tiered pricing starts at $0.10 per 1K tokens for basic queries and scales to enterprise deals worth millions of dollars annually. OpenAI claims that safeguards such as query logging and human oversight prevent abuse. Skeptics point to enforcement gaps, noting that ChatGPT jailbreaks persist despite mitigations.
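For a rough sense of what the entry tier could cost, the sketch below multiplies out the quoted $0.10 per 1K tokens. The workload figures (events per day, tokens per event) are hypothetical placeholders rather than anything OpenAI has published, and real billing may distinguish input from output tokens.

```python
# Back-of-the-envelope cost estimate for the quoted entry-tier price.
# The price comes from the article; every workload number is an assumption.

PRICE_PER_1K_TOKENS_USD = 0.10  # quoted starting price per 1K tokens


def estimate_cost_usd(total_tokens: int,
                      price_per_1k: float = PRICE_PER_1K_TOKENS_USD) -> float:
    """Return the estimated cost in USD, rounded to cents."""
    if total_tokens < 0:
        raise ValueError("total_tokens must be non-negative")
    return round(total_tokens / 1000 * price_per_1k, 2)


def estimate_daily_cost_usd(events_per_day: int, tokens_per_event: int) -> float:
    """Estimate the daily cost of sending every event through the model."""
    return estimate_cost_usd(events_per_day * tokens_per_event)


if __name__ == "__main__":
    # Hypothetical workload: 10,000 events a day at roughly 500 tokens each.
    print(estimate_daily_cost_usd(events_per_day=10_000, tokens_per_event=500))
```

Under those assumed numbers the bill would be about $500 a day, which is why a team might pre-filter events before sending them to the model.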

Risks and Real-World Impact

Does this matter? Absolutely. Cybersecurity remains an arms race in which attackers move faster. Traditional tools such as SIEMs produce alert fatigue: analysts face some 10,000 events a day and investigate fewer than 10% of them. GPT-5.4-Cyber could parse logs, correlate indicators of compromise (IOCs), and draft playbooks in seconds, putting expert-level analysis within reach of under-resourced teams.
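To make “parse logs and correlate IOCs” concrete, here is a minimal sketch of the deterministic part of that job, the kind of matching a model-driven workflow would presumably build on. It is not how GPT-5.4-Cyber works internally. The log format, the function names, and the indicator list are all hypothetical, and the IP addresses come from reserved documentation ranges.

```python
import re
from collections import defaultdict

# Loose patterns for two common indicator types. The IPv4 pattern does not
# validate octet ranges; that is acceptable for a sketch.
IPV4_RE = re.compile(r"\b(?:\d{1,3}\.){3}\d{1,3}\b")
SHA256_RE = re.compile(r"\b[a-fA-F0-9]{64}\b")


def extract_indicators(line: str) -> set[str]:
    """Pull IPv4 addresses and SHA-256 hashes out of one log line."""
    hashes = {h.lower() for h in SHA256_RE.findall(line)}
    return set(IPV4_RE.findall(line)) | hashes


def correlate(log_lines, known_iocs) -> dict[str, list[int]]:
    """Map each known indicator to the 1-based log lines where it appears."""
    known = {ioc.lower() for ioc in known_iocs}
    hits = defaultdict(list)
    for lineno, line in enumerate(log_lines, start=1):
        for indicator in extract_indicators(line) & known:
            hits[indicator].append(lineno)
    return dict(hits)


if __name__ == "__main__":
    logs = [
        "2024-01-01T00:00:01Z deny src=203.0.113.7 dst=10.0.0.5",
        "2024-01-01T00:00:02Z allow src=192.0.2.10 dst=10.0.0.5",
        "2024-01-01T00:00:03Z dns query from 203.0.113.7",
    ]
    print(correlate(logs, {"203.0.113.7"}))
```

A pass like this narrows thousands of events down to the handful that touch known indicators, which is the subset worth an analyst's or a model's attention.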

But skepticism is warranted. “Cyber-permissive” invites dual-use risks. Output from a defender’s query about Metasploit modules could reach public forums through screenshots or exfiltration. OpenAI’s safety record also draws fire: critics point to GPT-4’s role in real-world scams despite its filters. Fine-tuning on “defensive” datasets, likely red-team reports and CVE databases, may not fully align the model’s outputs. Benchmarks show LLMs hallucinate on 20-30% of technical facts; in cybersecurity, an error rate like that is catastrophic.
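One inexpensive guard against hallucinated specifics is to check every identifier a model cites against a source the team already trusts. The sketch below does this for CVE IDs. The function name and the trusted set are hypothetical; in practice the set would be loaded from an authoritative vulnerability feed.

```python
import re

# CVE IDs look like CVE-<year>-<4 or more digits>.
CVE_RE = re.compile(r"\bCVE-\d{4}-\d{4,}\b", re.IGNORECASE)


def unverified_cves(model_output: str, trusted_ids: set[str]) -> list[str]:
    """Return CVE IDs cited in model_output that are absent from trusted_ids."""
    trusted = {cve.upper() for cve in trusted_ids}
    cited = {match.upper() for match in CVE_RE.findall(model_output)}
    return sorted(cited - trusted)


if __name__ == "__main__":
    # Stand-in for a real feed; it holds one well-known ID (Log4Shell).
    trusted = {"CVE-2021-44228"}
    answer = "Patch CVE-2021-44228 first, then review cve-2021-99999."
    print(unverified_cves(answer, trusted))
```

A hit in the trusted set only confirms that the ID exists. It says nothing about whether the model described the vulnerability correctly, so flagged and unflagged claims alike still need human review.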

The implications ripple through the industry. Competitors such as Anthropic (Claude 3.5 Sonnet excels at spotting code vulnerabilities) and Google (Gemini for threat modeling) will counter. Expect a surge in AI security startups: PitchBook tracks more than 150 funded since 2023, which together raised $2B. Regulators are watching closely. The EU AI Act classifies some cyber applications as high-risk and demands a degree of transparency that OpenAI resists.

For finance and crypto, this hits home. Exchanges lose $3.7B to hacks yearly (Chainalysis 2024), and DeFi exploits topped $1.5B. GPT-5.4-Cyber could support smart contract audits or blockchain forensics, but only if Trusted Access opens to Web3 firms. Watch for integrations with tools such as Sliver and frameworks such as MITRE ATT&CK.

Bottom line: OpenAI is betting that AI can flip defense from reactive to proactive. It might, if the controls hold. But in a world where code is king, permissiveness cuts both ways. Teams should test betas rigorously and not bet the farm on black-box magic.