Anthropic’s Claude Mythos Preview promises a breakthrough in AI-driven cybersecurity, with claims that the model uncovers thousands of zero-day vulnerabilities across major operating systems and browsers. Scrutinize the 244-page system card, however, and the evidence crumbles. The document, a bloated 23MB PDF that compresses to 3MB without losing data, allocates just seven pages to why the model is too risky to release fully. The cybersecurity section (pages 47-53) offers zero vulnerability counts, no CVE lists, no CVSS scores, no CWE mappings, and no vendor confirmations. The word “thousands” appears once, and it refers to alignment transcripts, not exploits.
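
The word count is easy to check for yourself. Here is a minimal sketch, assuming the PDF text has already been extracted into one string per page. The extraction step is left out, the function name `count_term_by_page` is hypothetical, and the sample pages are placeholders, not quotes from the system card.

```python
import re


def count_term_by_page(pages, term):
    """Return {page_number: hits} for whole-word, case-insensitive matches."""
    pattern = re.compile(rf"\b{re.escape(term)}\b", re.IGNORECASE)
    hits = {}
    for number, text in enumerate(pages, start=1):
        found = len(pattern.findall(text))
        if found:
            hits[number] = found
    return hits


# Placeholder text standing in for extracted pages (NOT real system card text).
sample_pages = [
    "Overview of the evaluation methodology.",
    "We reviewed thousands of transcripts for alignment issues.",
    "Cybersecurity evaluations and mitigations.",
]

print(count_term_by_page(sample_pages, "thousands"))
```

Reporting hits per page, rather than a single total, shows where a term lands and not just how often it appears.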

This gap between headlines and substance matters because AI safety hinges on verifiable claims. Anthropic teases massive threat detection but provides no baseline comparisons, independent reproductions, or false-positive rates. Security pros rely on metrics like CVSS distributions and disclosure timelines to assess real impact. Without them, it’s hype, not intelligence. Fuzzers, tools that bombard software with malformed inputs to provoke crashes, go unmentioned, despite being the gold standard for zero-day hunting. Established efforts like Google’s OSS-Fuzz have logged millions of crashes since 2016, leading to over 2,000 CVEs. Anthropic’s card skips such rigor.
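
For readers who have not used one, the core loop of a mutation fuzzer fits in a few lines. The sketch below is a toy under stated assumptions: `parse_record` is a hypothetical target with a deliberately planted length bug, and the mutation strategy is the simplest possible. Production fuzzers such as those behind OSS-Fuzz add coverage feedback, corpus management, and sanitizers.

```python
import random


def parse_record(data: bytes) -> bytes:
    """Hypothetical toy target: the first byte declares the payload length.

    Planted bug: the declared length is trusted without checking that the
    buffer really holds that many bytes.
    """
    if not data:
        return b""
    declared = data[0]
    # Reading the last declared byte raises IndexError when the input lies.
    _last = data[declared]
    return data[1:declared + 1]


def mutate(seed: bytes, rng: random.Random) -> bytes:
    """Overwrite one randomly chosen byte of a non-empty seed."""
    buf = bytearray(seed)
    buf[rng.randrange(len(buf))] = rng.randrange(256)
    return bytes(buf)


def fuzz(target, seed: bytes, iterations: int, rng_seed: int = 0):
    """Feed mutated inputs to target and collect the ones that raise."""
    rng = random.Random(rng_seed)
    crashes = []
    for _ in range(iterations):
        candidate = mutate(seed, rng)
        try:
            target(candidate)
        except Exception as exc:
            crashes.append((candidate, type(exc).__name__))
    return crashes


seed_input = bytes([3]) + b"abc"  # well-formed: length 3, payload "abc"
found = fuzz(parse_record, seed_input, iterations=2000)
print(f"{len(found)} crashing inputs out of 2000")
```

Every entry in `found` is a concrete input that reproduces the failure. That is the kind of artifact a vulnerability claim can be checked against.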

Demo: Patched Bugs in a Stripped Environment

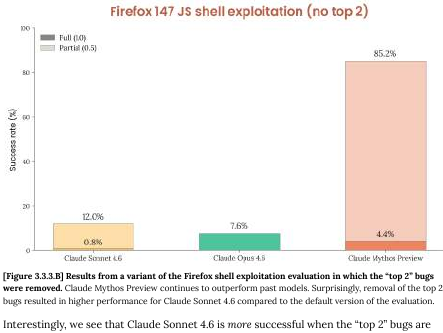

The flagship demo fares worse. Claude Mythos “weaponizes” two bugs discovered by a different model. The target? Patched software running in a test setup with browser sandboxes and other defense-in-depth mitigations disabled. Real-world browsers like Chrome employ site isolation and renderer hardening, blocking 99% of exploits before they chain, per Google’s 2023 security report. Anthropic stripped these out, recast what would have been a failure as a “success,” and called it a step change. No Glasswing partner, supposedly collaborating on disclosures, has publicly confirmed a single finding.

Why does this erode trust? Demos run with mitigations switched off overstate capability. Recall Heartbleed (CVE-2014-0160), which went unnoticed in OpenSSL for about two years before its disclosure in 2014 and was then confirmed, patched, and reproduced in public. True advances, like Microsoft’s AutoTriage or DeepMind’s 2022 work on Chromium fuzzing, publish raw data and reproductions. Anthropic’s approach echoes early AI hype: impressive videos, scant code.

Funding Facade and Regulatory Risks

The “$100 million defensive initiative” reveals more sleight of hand: only $4 million is cash, while the headline $100 million is API credits for testers, which essentially subsidizes use of Anthropic’s own product. Their 90-day public report remains MIA, leaving claims unchecked. Glasswing, pitched as a restraint consortium, smells like regulatory capture: AI firms self-regulate via friendly partners, dodging scrutiny while competitors scramble.

Broaden the lens: AI labs race for AGI amid U.S.-China tensions, with $100 billion+ invested since 2023 per Stanford’s AI Index. Overstated hacking prowess justifies delays, funding, and rules favoring incumbents. Open-source models like Llama 3 already fuzz basic binaries effectively; Claude’s edge, if real, demands proof. Absent it, Anthropic risks “boy who cried wolf” status, desensitizing stakeholders to genuine risks, such as AI assisting nation-state operations on the scale of 2024’s Salt Typhoon intrusions into U.S. telcos.

Implications cut deep. Investors pour billions into AI security startups (e.g., $500M for SentinelOne in 2024). Hype diverts capital from proven tools: endpoint detection ($50B market) outperforms unproven AI oracles. Regulators, eyeing EU AI Act enforcement, need facts, not brochures. Anthropic could rebuild trust by releasing CVE tables, fuzz corpora, and third-party audits. Until then, Mythos Preview signals collapsing verification standards in AI safety. Proceed with skepticism—security demands evidence, not previews.
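
The CVE table asked for above need not be elaborate. As a rough sketch of what it could support, the snippet below buckets vendor-confirmed findings by CVSS v3.x severity rating and computes a false-positive rate. The `Finding` fields, the IDs, and the scores are made-up placeholders, and treating "rejected by the vendor" as a false positive is a simplifying assumption.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Finding:
    identifier: str         # CVE ID once assigned; placeholder IDs below
    cvss_score: float       # CVSS v3.x base score, 0.0 to 10.0
    vendor_confirmed: bool  # False means the vendor triaged and rejected it


def severity(score: float) -> str:
    """Map a CVSS v3.x base score to its qualitative severity rating."""
    if score == 0.0:
        return "None"
    if score < 4.0:
        return "Low"
    if score < 7.0:
        return "Medium"
    if score < 9.0:
        return "High"
    return "Critical"


def summarize(findings):
    """Return (severity distribution of confirmed findings, false-positive rate)."""
    confirmed = [f for f in findings if f.vendor_confirmed]
    distribution = Counter(severity(f.cvss_score) for f in confirmed)
    rejected = len(findings) - len(confirmed)
    false_positive_rate = rejected / len(findings) if findings else 0.0
    return dict(distribution), false_positive_rate


# Placeholder rows for illustration only; these are not real disclosures.
sample = [
    Finding("PLACEHOLDER-0001", 9.8, True),
    Finding("PLACEHOLDER-0002", 7.5, True),
    Finding("PLACEHOLDER-0003", 5.3, False),
    Finding("PLACEHOLDER-0004", 3.1, True),
]

print(summarize(sample))
```

A few dozen rows in this shape, with vendor confirmations attached, would let outsiders test a "thousands of zero-days" claim.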