AI language models now generate proofs of lemmas that experts consider routine but involved. ChatGPT 5.2 Pro, released in December 2025, handles these reliably enough for research use, though errors persist. This marks a leap from early failures: in July 2020, GPT-3 via AI Dungeon refused basic arithmetic. By April 2022, it produced its first correct proof of Fermat’s Little Theorem. The author, a mathematician tracking this progress, initially doubted near-term research utility. Recent models like o3-mini-high, assessed in February 2025, crossed a “genuine usefulness” threshold despite frequent mistakes.

Timeline of Progress

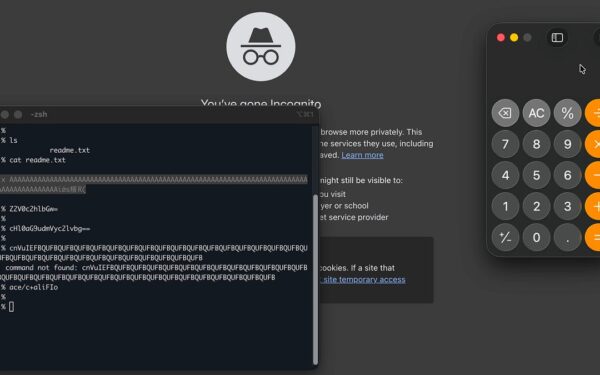

Progress accelerated with reasoning-focused models. The author used OpenAI’s Codex agent for scientific computing tasks previously deemed impractical. Publicly, they balanced praise with caution, highlighting “slop” papers on arXiv—incorrect math hard to spot amid AI-generated volume. Mathematics demands confusion, persistence, and error correction, not just truth-stating. LLMs bash against this edifice, imprinting partial intuitions, but human verification remains essential.

Early hype ignored these realities. Benchmarks overstate capabilities; real math involves navigating vast, interconnected structures. The Library of Babel analogy fits: an infinite repository holds every proof, but discerning valid ones requires human insight. AI floods the library faster, amplifying noise.

Revised Expectations and a Bet

Predictions shifted. In early 2024, the author forecasted autonomous AI math rivaling top humans by 2040, with minor conjecture proofs by 2026 and major ones soon after. Serious hurdles seemed likely before 2030. By March 2025, they bet Tamay Besiroglu, cofounder of Mechanize, that AI tools would not autonomously produce papers matching the best few from 2025—at comparable cost to human experts—within a set timeframe. Details on the bet’s horizon remain unspecified, but it underscores skepticism.

Model improvements outpaced expectations. o3-mini-high’s February 2025 debut enabled lemma proofs; ChatGPT 5.2 Pro scaled to expert routine work. Codex handles compute-heavy tasks. Yet errors abound, demanding scrutiny.

Implications for Research

This matters because math underpins cryptography, finance, and security—fields where flaws cascade. AI-generated proofs risk embedding errors in protocols or models. ArXiv’s slop already pollutes the record; undetected mistakes could mislead for years. Humans must verify, but scale strains reviewers.

Economically, AI cuts research costs. Routine lemmas, once hours for experts, now minutes—flawed or not. Top conjectures like Riemann Hypothesis equivalents stay distant; they demand novel insight, not pattern-matching. AI excels at recombination, falters on breakthroughs.

Why care? Reliable math secures blockchains, prices derivatives, detects intrusions. Hype blinds to verification costs. The author pushes present focus: use AI as a tool, not oracle. Autonomous superhuman math by 2030? Possible, but bet against it until proven. Track costs, error rates, and conjecture solves. For now, cling to the edifice yourself—AI imprints foreheads, but you interpret the bruise.