Cloudflare runs its internal AI engineering stack on the products it sells, including AI Gateway and Workers AI. In the last 30 days, 3,683 employees (93% of its R&D org and 60% of the company) used these tools. Together they generated 47.95 million AI requests, 20.18 million of them routed through AI Gateway. AI Gateway processed 241.37 billion tokens, and Workers AI processed 51.83 billion.

Developers merged code faster than ever. The four-week rolling average of merge requests rose from 5,600 per week to over 8,700, an increase of roughly 55%. One week in March hit 10,952, nearly double the Q4 baseline. Across 295 teams, the numbers suggest that AI tools boosted velocity, with no obvious downsides reported so far.
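The velocity figures are easy to sanity-check. The sketch below assumes the Q4 baseline is the same 5,600 per week quoted for the rolling average, which the text implies but does not state outright.

```python
# Merge-request figures quoted above (per week).
baseline_per_week = 5_600   # assumed to be the Q4 baseline
current_per_week = 8_700    # "over 8,700", so this is a lower bound
peak_week = 10_952          # the single best week in March

# Relative growth of the four-week rolling average.
growth = current_per_week / baseline_per_week - 1

# How the peak week compares with the baseline.
peak_ratio = peak_week / baseline_per_week

print(f"rolling average growth: {growth:.1%}")       # 55.4%
print(f"peak week vs baseline:  {peak_ratio:.2f}x")  # 1.96x
```

A ratio of 1.96 matches the "nearly double" description, and the rolling average is up by a little more than half.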

The Build Process

Eleven months ago, Cloudflare formed the iMARS tiger team to integrate AI deeply into its engineering work. The team started with MCP servers for agentic workflows, then expanded to access layers and tooling. The Dev Productivity team now owns the stack, alongside CI/CD and builds.

They dogfooded their stack from day one. No separate internal infra—everything uses customer-facing products. This forced reliability improvements that benefit paying users. During Agents Week, they scaled Workflows 10x and launched Sandbox SDK to general availability.

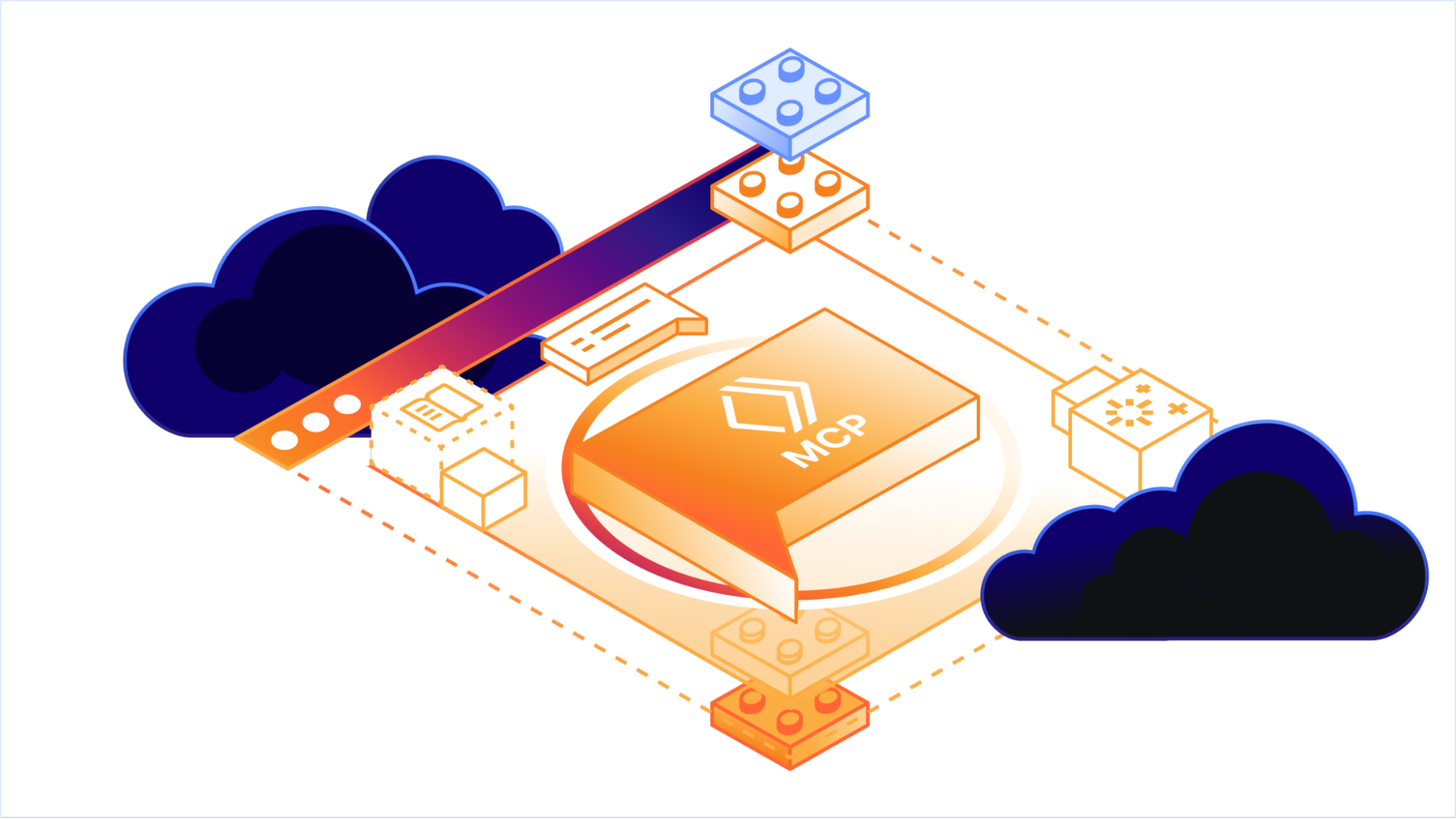

Layer by Layer

Engineers access tools like OpenCode and Windsurf via Zero Trust with Cloudflare Access. AI Gateway handles LLM routing, cost tracking, bring-your-own-key (BYOK), and zero data retention.
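To make the routing layer concrete, here is a minimal sketch of how a client might address a model provider through AI Gateway rather than calling the provider directly. It assumes the provider-specific URL pattern `https://gateway.ai.cloudflare.com/v1/{account_id}/{gateway_id}/{provider}/...`. The account ID, gateway ID, model name, and key below are hypothetical placeholders, and the request is only constructed, never sent. This is not Cloudflare's internal configuration.

```python
import json
import urllib.request

# Base URL for AI Gateway (assumed pattern; check the current docs before relying on it).
GATEWAY_BASE = "https://gateway.ai.cloudflare.com/v1"


def build_gateway_request(account_id, gateway_id, provider, path, payload, provider_key):
    """Build (but do not send) a POST request that goes through the gateway."""
    url = f"{GATEWAY_BASE}/{account_id}/{gateway_id}/{provider}/{path.lstrip('/')}"
    headers = {
        "Content-Type": "application/json",
        # In this sketch the provider key travels with the request. With BYOK
        # the key would be stored at the gateway instead (assumption, not shown).
        "Authorization": f"Bearer {provider_key}",
    }
    body = json.dumps(payload).encode("utf-8")
    return urllib.request.Request(url, data=body, headers=headers, method="POST")


# Hypothetical placeholder values, for illustration only.
request = build_gateway_request(
    account_id="ACCOUNT_ID",
    gateway_id="GATEWAY_ID",
    provider="openai",
    path="chat/completions",
    payload={
        "model": "example-model",
        "messages": [{"role": "user", "content": "Summarize this diff."}],
    },
    provider_key="PROVIDER_API_KEY",
)

print(request.get_method(), request.full_url)
```

Because every call shares one base URL, cost tracking and retention policy can be enforced at a single point instead of in each tool.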

Inference runs on Workers AI with open-weight models. The MCP Server Portal runs on Workers and uses Access to provide a single OAuth sign-in. AI Code Reviewer integrates with CI via Workers and AI Gateway.

For safety, agent code executes in sandboxes using Dynamic Workers. Stateful agents leverage the Agents SDK with McpAgent and Durable Objects. Workflows manage multi-step processes. A 16,000+ entity knowledge graph in Backstage (open-source) provides context.

With this setup, code is cloned, built, and tested in isolated environments. There are no custom servers; everything scales on Cloudflare’s edge network.

Implications for Users

Dogfooding at this scale validates the platform. Cloudflare processed hundreds of billions of tokens internally without building shadow infrastructure, which is strong evidence that Workers AI and AI Gateway can handle production loads. Users get battle-tested features: zero-retention routing reduces data risk, and BYOK fits compliance needs.

But skepticism is warranted. The merge request spike sounds great, yet correlation isn’t causation. Did AI reduce bugs or just speed up sloppy commits? Cloudflare doesn’t share code quality metrics here, so watch for regressions. Also, 241 billion tokens imply real costs: even at an illustrative $0.10 per million tokens, that’s roughly $24,000 a month, offset in Cloudflare’s case by its margins as the provider.
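The token bill is worth working out explicitly. The sketch below uses the 241.37 billion tokens reported for AI Gateway and a flat $0.10 per million tokens. That price is an illustrative assumption, not a real quote, and actual pricing varies by model and by input versus output tokens.

```python
def monthly_token_cost(tokens: float, usd_per_million: float) -> float:
    """Cost in USD for a token volume at a flat per-million-token price."""
    return tokens / 1_000_000 * usd_per_million


GATEWAY_TOKENS = 241.37e9    # tokens through AI Gateway in 30 days (from the article)
ASSUMED_PRICE = 0.10         # USD per million tokens; illustrative assumption

cost = monthly_token_cost(GATEWAY_TOKENS, ASSUMED_PRICE)
print(f"${cost:,.0f} per month")  # $24,137 per month
```

At that rate the volume costs about $24,000 a month, so tens of thousands of dollars. The figure scales linearly, so a $1.00 per million price would put it near $240,000.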

For developers elsewhere, this blueprint matters. Can it be replicated on Vercel, Fly.io, or AWS? Possibly, but Cloudflare’s edge inference cuts latency, which is vital for real-time agents. Open-weight models on Workers AI also sidestep proprietary LLM lock-in.

Security angle: Zero Trust and sandboxes limit blast radius from rogue agents. In crypto/DeFi, where exploits cost millions, this isolation appeals. Finance teams could adapt for compliant analysis pipelines.

Bottom line: Cloudflare eats its own cooking at enterprise scale. That accelerates its roadmap, because improvements driven by internal pain points ship weekly. If you’re building AI agents, study this stack. It works, but measure your own velocity gains rigorously.