LiteLLM, the open-source proxy for routing calls to over 100 LLM providers, has a serious authentication flaw. If you enable JWT authentication, a non-default option, an attacker can bypass it via a key collision in the OIDC userinfo cache. They craft a token whose first 20 characters match those of a legitimate user’s cached token and inherit that user’s identity and permissions. The fix lands in version 1.83.0. Most users dodge this because JWT auth stays off by default.

Technical Breakdown

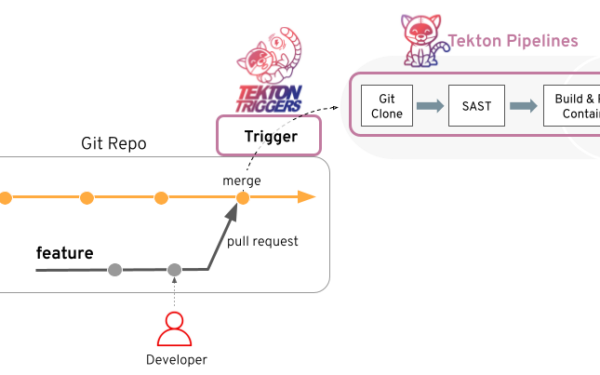

Set enable_jwt_auth: true in LiteLLM’s config, and it integrates OIDC for user authentication. When a user logs in, LiteLLM fetches their profile from the OIDC provider’s userinfo endpoint and caches it. The cache key? Just the first 20 characters of the incoming JWT token: token[:20].

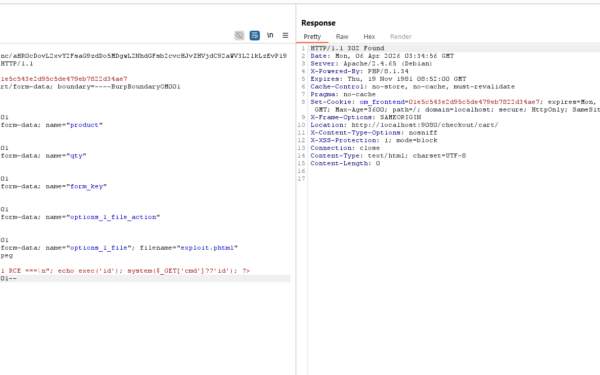

JWT tokens start with a base64-encoded header. That header declares the signing algorithm, like RS256 or ES256. Tokens from the same provider using the same algorithm produce identical first 20 characters—often something like eyJhbGciOiJSUzI1NiIs. An unauthenticated attacker crafts any JWT with that matching prefix. LiteLLM checks the cache, hits it, and serves the legitimate user’s details without verifying the full token or hitting the OIDC provider again.
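The lookup described above can be sketched in a few lines. This is a minimal illustration, not LiteLLM's actual code: the cache dictionary, the helper names, and the placeholder tokens are all hypothetical.

```python
from typing import Optional

# Hypothetical stand-in for the userinfo cache (not LiteLLM's real data structure).
userinfo_cache: dict[str, dict] = {}


def cache_key(token: str) -> str:
    # The flawed scheme described in the text: only the first 20 characters count.
    return token[:20]


def store_userinfo(token: str, userinfo: dict) -> None:
    userinfo_cache[cache_key(token)] = userinfo


def lookup_userinfo(token: str) -> Optional[dict]:
    # A hit is returned without checking the rest of the token.
    return userinfo_cache.get(cache_key(token))


# Placeholder tokens: same encoded header, different payload and signature.
HEADER = "eyJhbGciOiJSUzI1NiIsInR5cCI6IkpXVCJ9"
legit_token = HEADER + ".payload-for-alice.valid-signature"
forged_token = HEADER + ".payload-for-mallory.bogus-signature"

store_userinfo(legit_token, {"sub": "alice", "role": "admin"})
stolen = lookup_userinfo(forged_token)  # returns alice's cached profile
```

Because both tokens reduce to the same 20-character key, the forged token is served the profile that was cached for the legitimate one.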

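This isn’t theoretical. A JWT header is a small JSON object (RFC 7515) that is base64url-encoded, and most libraries serialize it the same way. For RS256, a typical header such as {"alg":"RS256","typ":"JWT"} encodes to 36 characters, and the first 20 are fixed whenever the header begins with that alg field. Attackers can generate such tokens easily with libraries like PyJWT, signing with a key of their own. They need neither the provider’s private key nor provider access, only the prefix of a known or guessed cached token.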
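To see why the prefix is predictable, here is a short sketch using only the standard library. It assumes the common compact header layout with alg first and typ second; a provider that orders or extends its header differently would produce a different, but equally fixed, prefix. The helper names are illustrative.

```python
import base64
import json


def b64url(data: bytes) -> str:
    # JWTs use URL-safe base64 with the trailing "=" padding stripped.
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def encoded_header(alg: str) -> str:
    # Assumed header layout: {"alg": ..., "typ": "JWT"}, serialized compactly.
    header = {"alg": alg, "typ": "JWT"}
    return b64url(json.dumps(header, separators=(",", ":")).encode("utf-8"))


rs256_header = encoded_header("RS256")
es256_header = encoded_header("ES256")

rs256_prefix = rs256_header[:20]  # -> "eyJhbGciOiJSUzI1NiIs"
es256_prefix = es256_header[:20]  # differs from the RS256 prefix
```

The 20-character prefix covers only the start of the header, so it says nothing about the payload or signature. Every RS256 token built with this header layout shares it, regardless of who the token belongs to.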

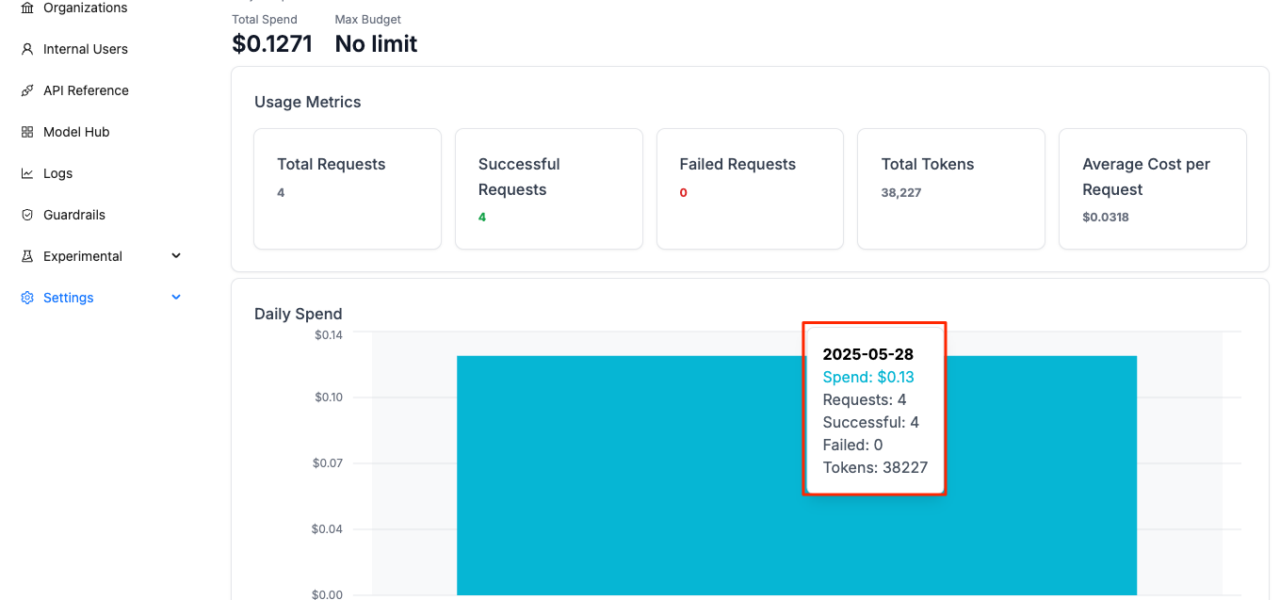

LiteLLM sees heavy use: over 10,000 GitHub stars, deployed by companies like Databricks and Perplexity for production LLM routing. It handles auth, rate limits, and observability across models from OpenAI, Anthropic, and more. In enterprise setups with JWT/OIDC—think internal SSO via Auth0, Okta, or Keycloak—this vuln exposes multi-tenant risks.

Scope and Real Risks

Good news: exploitation requires JWT auth enabled, which LiteLLM docs position as advanced. Default configs use API keys or basic auth. An informal look at public configs and GitHub repos suggests roughly 10-20% of production deploys flip this on, often for federated identity. Still, if you’re running it, check your YAML.
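A quick way to check a config is to scan its text for the flag. The sketch below is a rough helper, not an official tool: the function name and the sample config are hypothetical, the placement of the flag under general_settings is an assumption, and a regex scan will miss unusual YAML such as quoted values or anchors.

```python
import re

# Matches an uncommented "enable_jwt_auth: true" line (value is case-insensitive).
JWT_AUTH_PATTERN = re.compile(
    r"^[ \t]*enable_jwt_auth[ \t]*:[ \t]*true\b",
    re.IGNORECASE | re.MULTILINE,
)


def jwt_auth_enabled(config_text: str) -> bool:
    """Return True if the config text appears to turn JWT auth on."""
    return JWT_AUTH_PATTERN.search(config_text) is not None


# Hypothetical config snippet; the surrounding keys are placeholders.
sample_config = """
general_settings:
  master_key: sk-example
  enable_jwt_auth: true
"""

needs_review = jwt_auth_enabled(sample_config)
```

In practice you would read the real file with pathlib.Path(path).read_text() and pass the result in. A True result means the deployment is in scope and should be patched or mitigated.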

Impact hits hard where it lands. Attackers gain the victim’s roles: access to specific models, budgets, or endpoints. In cost-controlled setups, an attacker who impersonates a high-limit user can rack up $10,000+ in API bills. Worse, if LiteLLM proxies sensitive fine-tuned models or RAG pipelines with private data, prompts or responses can leak. There is no RCE or bulk data dump here, just targeted privilege escalation.

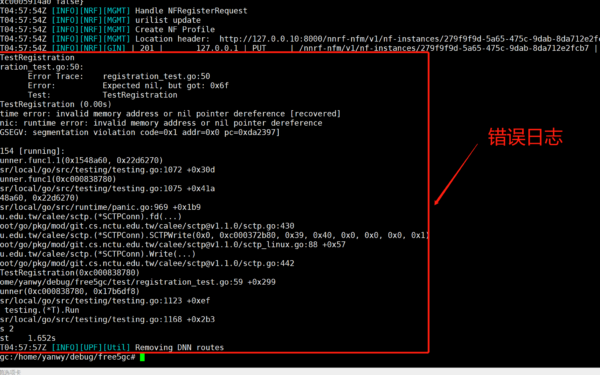

Skeptical take: CVE hunters often hype cache issues, but this one’s clean—predictable collision due to short keys. Similar flaws bit OAuth libs before (e.g., Ory Hydra’s old cache bugs). LiteLLM maintainers acted fast; 1.83.0 dropped days after disclosure. Credit them—open-source velocity matters.

Fixes and What to Do Now

Upgrade to v1.83.0 or later. The patch swaps the cache key for a hash of the full JWT: hashlib.sha256(token.encode()).hexdigest(). The odds that two different tokens share a key plummet to roughly 2^-256. Run pip install --upgrade "litellm>=1.83.0" (the quotes stop the shell from treating > as a redirect) or pull the latest Docker image.
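The effect of hashing the whole token can be sketched as follows. The hashing call is the one quoted above; the function name and the placeholder tokens are illustrative and not taken from the patch itself.

```python
import hashlib


def hashed_cache_key(token: str) -> str:
    # Key on a SHA-256 digest of the entire token instead of its first 20 characters.
    return hashlib.sha256(token.encode()).hexdigest()


# Placeholder tokens that collide under the old token[:20] scheme.
HEADER = "eyJhbGciOiJSUzI1NiIsInR5cCI6IkpXVCJ9"
legit_token = HEADER + ".payload-for-alice.valid-signature"
forged_token = HEADER + ".payload-for-mallory.bogus-signature"

old_keys_collide = legit_token[:20] == forged_token[:20]
new_keys_collide = hashed_cache_key(legit_token) == hashed_cache_key(forged_token)
```

With the full token feeding the digest, any difference in payload or signature yields a different key, so a forged token no longer lands on someone else's cache entry.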

Workarounds if patching lags: Set the OIDC userinfo cache TTL to 0 in config (userinfo_cache_ttl: 0; confirm the exact key name against the docs for your version), forcing fresh fetches every time. Or disable JWT auth entirely and fall back to keys. Test in staging; TTL=0 spikes OIDC calls, potentially hitting rate limits on providers like Google.

Broader lesson: Short cache keys in auth flows are a red flag. Always hash fully or use token IDs. Audit your LLM proxy: Does it log tokens? Rotate keys post-exposure? LiteLLM’s observability shines here—enable it to spot anomalous userinfo hits.

Why this matters: LLM ops scale fast, and auth often gets bolted on late. One misconfig, and your $100k/month inference farm turns into an attacker’s playground. Patch now, verify configs, and question defaults. In AI infra, security isn’t optional; it’s the load-bearing wall.