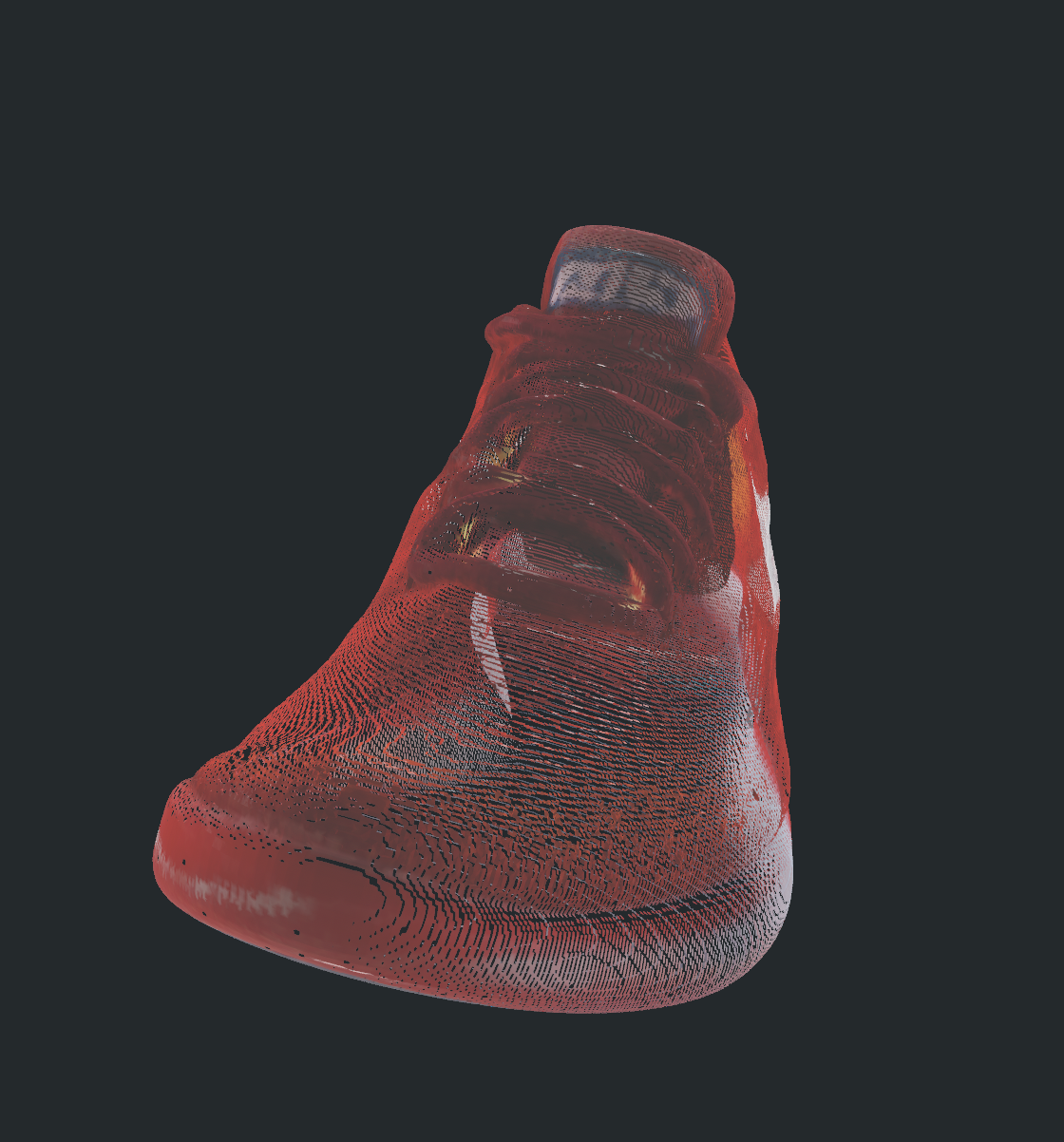

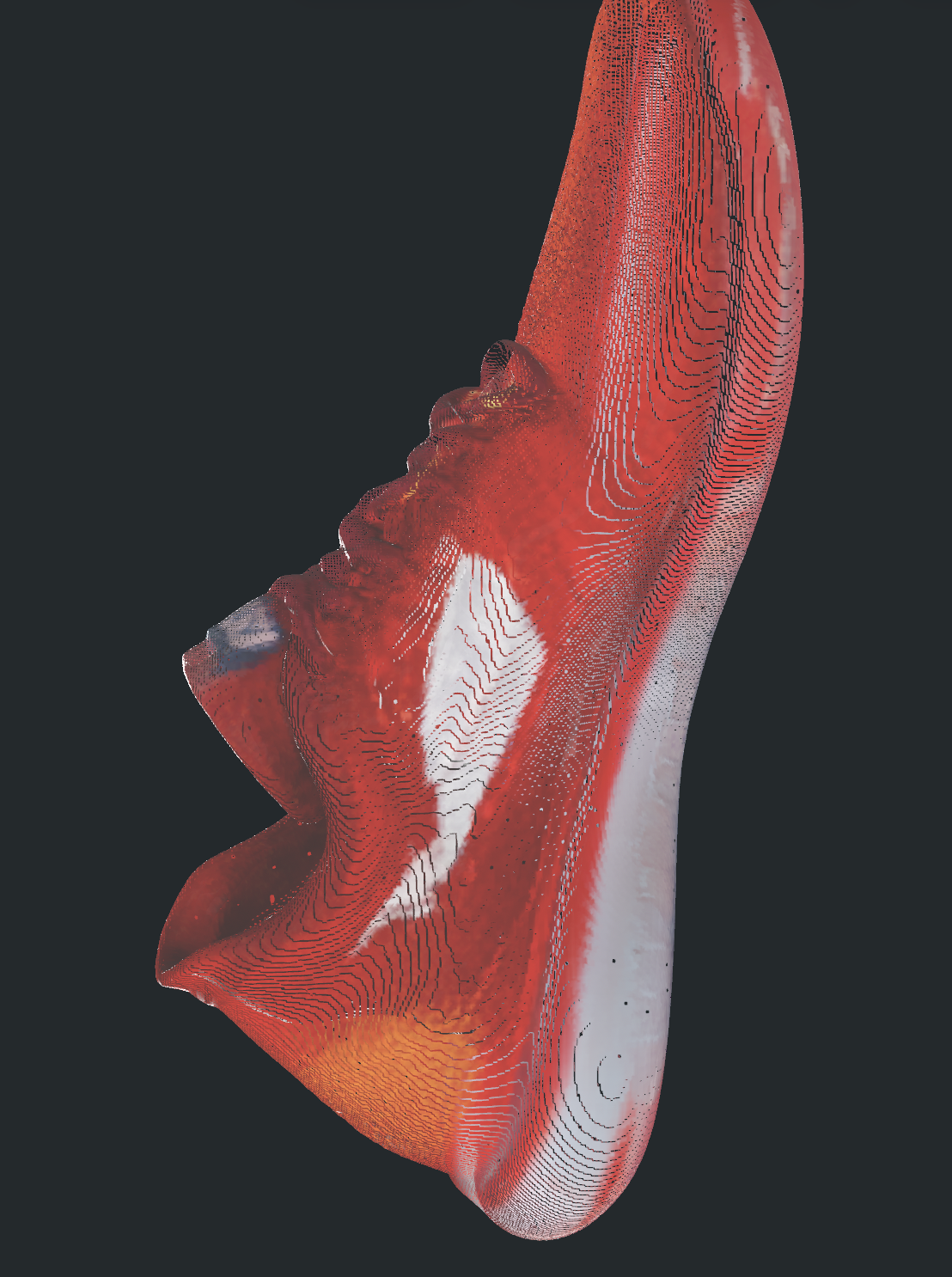

Shivam Kumar ported Microsoft’s TRELLIS.2, a 4-billion-parameter model for generating 3D meshes from single images, to run natively on Apple Silicon. This eliminates the need for NVIDIA CUDA hardware, cloud services, or specialized dependencies. On an M4 Pro with 24GB unified memory, it produces meshes with around 400,000 vertices in 3.5 minutes—slower than the seconds it takes on an H100 GPU, but fully offline and local.

TRELLIS.2 comes from Microsoft Research, building on sparse transformer architectures for efficient 3D reconstruction. Released in late 2024, it handles complex geometry from casual photos, outperforming earlier models like InstantMesh or SV3D in detail and speed on high-end GPUs. The catch: it demands CUDA, flash-attention for sparse ops, nvdiffrast for rasterization, and custom sparse convolutions—all NVIDIA-locked. Macs with M-series chips couldn’t touch it without heroic engineering.

The Port: Pure PyTorch Swaps

Kumar swapped out the CUDA roadblocks with vanilla PyTorch equivalents. He implemented a gather-scatter mechanism for sparse 3D convolutions, used Scaled Dot-Product Attention (SDPA) for sparse transformers, and rewrote mesh extraction in pure Python, ditching CUDA hashmaps. These changes span just a few hundred lines across nine files in the trellis-mac repo.

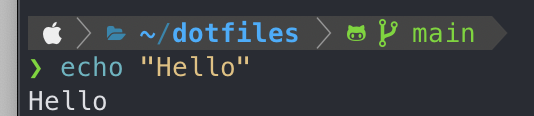

Installation is straightforward for PyTorch users on macOS. Clone the repo, install dependencies via pip—including torch with MPS support—and download the 4B model weights (about 8GB quantized). Run inference with a single image:

python generate.py --input_image path/to/photo.jpg --output_dir ./outputs

This leverages Apple’s Metal Performance Shaders (MPS) backend in PyTorch 2.5+, which maps tensor ops to the GPU efficiently. No Docker hacks or remote servers required. Early tests show mesh quality holds up visually, though subtle artifacts might appear from PyTorch approximations versus optimized CUDA kernels.

Performance Breakdown

On the M4 Pro (14-core CPU, 20-core GPU, 24GB RAM), full pipeline—from image encoding to mesh export—clocks 3.5 minutes for a 400K-vertex output. Peak memory usage hovers at 20GB, fitting snugly in higher-end MacBooks or minis. Compare to H100: under 30 seconds with 80GB HBM3, thanks to tensor cores and custom kernels.

Lower-spec machines struggle. An M3 Air with 16GB tops out at smaller meshes or crashes on VRAM limits. Inference scales linearly with batch size, but single-image use dominates. Quantization to 4-bit further shaves time to ~2.5 minutes without visible quality drop, per repo benchmarks.

Skepticism check: Ports like this can introduce numerical instability. TRELLIS.2’s sparse ops are finicky; PyTorch’s SDPA might lag in sparse efficiency, explaining the 100x slowdown. No public ablation studies yet—run your own A/B tests on the repo.

Why This Matters

Image-to-3D tech unlocks product viz, AR/VR assets, and custom printing from phone snaps. Before, you needed AWS/GCP bills ($2-5/hour for A100s) or waited for queues. Now, anyone with a 2024 MacBook Pro iterates privately, no data exfil to OpenAI or Replicate.

Privacy wins big: Generate sensitive prototypes—say, hardware designs or crypto wallet mockups—without uploading to black-box APIs. Security angle: Offline ML dodges supply-chain risks in cloud models. Cost? Amortizes at $0 after hardware buy-in; a 24GB M4 Mac runs $2,500, pays off in months for pros.

Broader ripple: Accelerates open-source 3D on consumer silicon. Expect forks for M-series diffusion models or Llama fine-tunes. Limits persist—no multi-view support yet, and topology can glitch on occluded scenes. Still, this cracks the NVIDIA moat, proving PyTorch MPS maturity for 4B-scale beasts. Grab the repo; test it yourself before hype swells.