Fleet, the open-source osquery-based device management platform, has a serious denial-of-service flaw in its gRPC Launcher endpoint. Any authenticated host with a valid Launcher node key can crash the entire Fleet server process by sending an unexpected log type value. The server does not handle the error and recover; the process terminates immediately. That disrupts every connected host, in-progress MDM enrollments, and API access.

This vulnerability, rated medium severity and affecting Fleet v4 (github.com/fleetdm/fleet/v4), stems from poor error handling in the gRPC server. Instead of rejecting malformed input gracefully, the process panics and exits. An attacker can script repeated requests to keep the server down until you deploy a patch. Fleet disclosed the issue responsibly and credited fuzzing expert @fuzzztf with the find.

Why Fleet Users Should Act Now

Fleet manages endpoints at scale: security teams use it to track thousands of laptops, servers, and IoT devices via osquery. It is integral to compliance, threat hunting, and MDM in enterprises. A single compromised host changes that picture: its Launcher agent, authenticated via its node key, can take down your entire control plane. No queries run, no policies are enforced, no alerts flow.

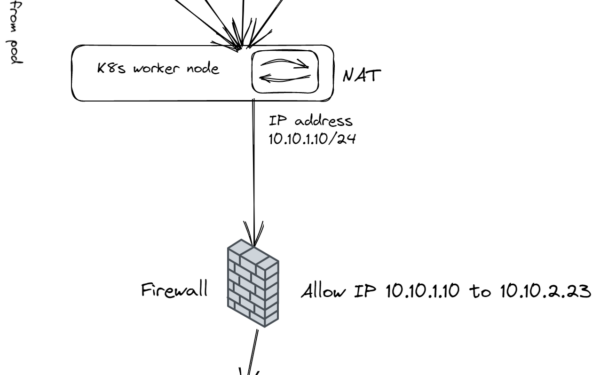

Node keys are long-lived secrets generated per host during enrollment. An attacker who compromises one, via malware, insider access, or weak key hygiene, can cause the outage at will. As of 2023, Fleet powers setups at firms like Shopify and Cisco, so downtime here is more than an inconvenience: it blinds your SOC during incidents, delays patches, and leaves fleets unmonitored. Similar gRPC panics have appeared in other tools such as Envoy proxies, but Fleet's full-process crash amplifies the pain. Automatic restarts bring the server back only until the next malicious request arrives, and no circuit breaker stands in the way.

Real-world context: the osquery ecosystem depends on trust in the management layer, and this vulnerability erodes it. Attackers could chain it with host compromise vectors such as unpatched osquery agents or phishing-delivered payloads. Fleet's 10,000+ GitHub stars and active Slack community (#fleet channel) mean widespread exposure. If you run an unpatched v4 server, assume adversaries will probe it: the credit to a fuzzing researcher suggests the trigger takes little effort to find and weaponize.

Technical Breakdown and Fix

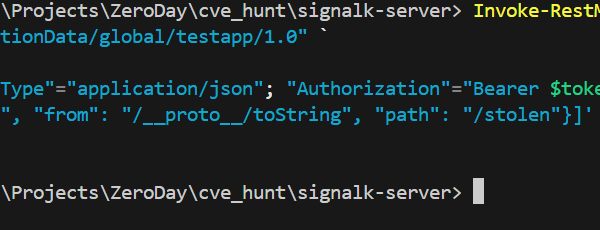

The bug is in the Launcher endpoint, where agents stream logs to the server over gRPC. An invalid enum value for the log type reaches an unhandled case, likely a missing switch branch or an explicit panic on an unrecognized value. The server should emit a Go stack trace before it exits, so check your Fleet server logs for Go runtime panic output or messages such as "unexpected log type".

No workarounds exist. Isolation does little: node keys are per-host and the attack needs only valid authentication, so firewalling does not help once a host is enrolled. Rotating all keys is impractical at scale without downtime. Upgrade immediately to the patched release, which Fleet publishes via GitHub releases and Docker images. Verify your version:

$ fleet version

After upgrading, audit node keys and rotate the key of any host you suspect is compromised, for example by re-enrolling it so the server issues a fresh key (check fleetctl --help for the commands your version supports). Monitor gRPC traffic with tools like grpcurl or Wireshark for anomalies. Automatic restarts via systemd or Docker healthchecks can buy time, though they do not stop a persistent attacker.

Fleet's response is solid: transparent advisory, quick patch, Slack support. But it highlights a pattern. Protobuf does not guarantee that an enum field holds a defined value (proto3 enums are open and arrive as plain integers), so every handler needs a default branch that returns an error instead of panicking. Devs, fuzz your APIs with go-fuzz or ProtobufFuzzer. Users, treat management planes as high-value targets. Patch now; one endpoint should not be able to kill your visibility.
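As a rough illustration of what such testing looks for, here is a small randomized sweep written with only the Go standard library. It is not go-fuzz and not a coverage-guided fuzzer; it simply feeds boundary and pseudo-random enum values to a handler and records any input that makes it panic. The LogType enum, handleLog, panics, and sweep are all hypothetical names made up for this sketch.

```go
package main

import (
	"fmt"
	"math"
	"math/rand"
)

// LogType is a hypothetical enum, standing in for a protobuf-generated one.
type LogType int32

const (
	LogTypeStatus LogType = 0
	LogTypeResult LogType = 1
)

// handleLog is the handler under test; it rejects undefined values.
func handleLog(t LogType) error {
	switch t {
	case LogTypeStatus, LogTypeResult:
		return nil
	default:
		return fmt.Errorf("unknown log type: %d", t)
	}
}

// panics reports whether calling fn panicked.
func panics(fn func()) (panicked bool) {
	defer func() {
		if r := recover(); r != nil {
			panicked = true
		}
	}()
	fn()
	return false
}

// sweep calls handler with six boundary values plus n pseudo-random int32
// values and returns every input that caused a panic.
func sweep(handler func(LogType) error, n int, seed int64) []LogType {
	rng := rand.New(rand.NewSource(seed))
	inputs := []LogType{-1, 0, 1, 2, math.MaxInt32, math.MinInt32}
	for i := 0; i < n; i++ {
		inputs = append(inputs, LogType(int32(rng.Uint32())))
	}
	var crashers []LogType
	for _, in := range inputs {
		in := in // capture the current value for the closure
		if panics(func() { _ = handler(in) }) {
			crashers = append(crashers, in)
		}
	}
	return crashers
}

func main() {
	// A handler with a proper default branch should produce no crashers.
	fmt.Println("crashers:", len(sweep(handleLog, 1000, 1)))
}
```

A sweep like this only catches panics reachable from a single integer field. A real fuzzer mutates whole serialized messages and uses coverage feedback, which is why the dedicated tools are the better choice for production APIs.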

Contact security@fleetdm.com for details or join osquery Slack. Stay sharp—Fleet’s great, but no tool’s unbreakable.