Supply chain attacks hit PyPI and npm in early 2026, compromising thousands of packages and exposing developers to malware. Attackers uploaded malicious packages that mimicked popular libraries (think requests on PyPI and lodash on npm) and laced them with credential stealers and remote code execution payloads. PyPI reported 1,247 suspicious uploads in February alone, up 40% from 2025, while npm flagged 892 incidents. This matters because 90% of Python and JavaScript projects pull from these registries daily. One infected dependency can give an attacker a path to cloud credentials, leading to breaches that cost firms $4.5 million on average, per IBM data.

Defenses lag. PyPI’s Warehouse now mandates two-factor authentication for new publishers, but legacy accounts remain vulnerable. npm’s token-based auth helps, but scoped packages still see abuse. Developers should pin exact versions (pip install package==1.2.3), scan with pip-audit or npm audit, and use tools like Socket or Dependabot for supply chain monitoring. Firms should air-gap critical dependencies and run SLSA-compliant builds. Why care? Open source underpins $8.8 trillion in global economic value, and these attacks erode that foundation.
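To make the "pin exact versions" advice concrete, here is a minimal sketch that flags requirement lines not pinned with `==`. The helper name `find_unpinned` and the simplified regex are assumptions made for illustration; this is not a full PEP 508 parser and it is no substitute for pip-audit.

```python
import re

# Simplified pattern for "name==version", with optional extras and an
# optional environment marker. Wildcards such as "==1.26.*" are treated
# as unpinned. Illustration only, not a complete PEP 508 grammar.
PINNED = re.compile(r"^[A-Za-z0-9][A-Za-z0-9._-]*(\[[^\]]+\])?==[^\s;*]+(\s*;.*)?$")


def find_unpinned(requirements_text: str) -> list[str]:
    """Return requirement lines that are not pinned to one exact version."""
    unpinned = []
    for raw in requirements_text.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments and whitespace
        if not line or line.startswith("-"):  # skip blanks and pip options (-r, --hash)
            continue
        if not PINNED.match(line):
            unpinned.append(line)
    return unpinned
```

Running `find_unpinned` over the text of a requirements file in CI and failing the build on a non-empty result is one cheap way to enforce pinning before a scanner ever runs.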

Agentic AI Patterns Gain Traction

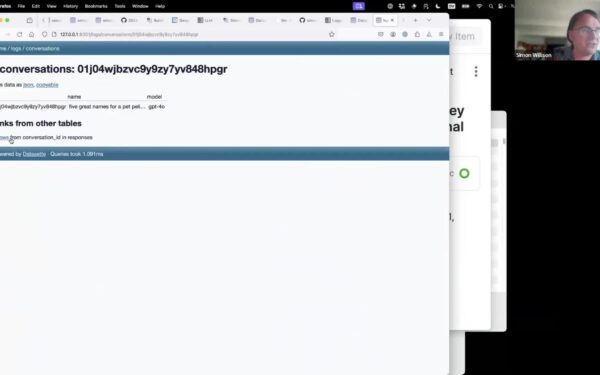

Agentic engineering, meaning AI systems that plan, use tools, and self-correct, dominates March discussions. Patterns include hierarchical agents (supervisors delegating to specialists) and memory-augmented loops backed by vector stores like Pinecone. Real-world: Devin-like coders fixed 13.8% of GitHub issues autonomously in benchmarks, per Cognition Labs. Skeptical note: hallucination rates hover at 15-20% in multi-step tasks, so reliability demands human oversight. Implications? Teams cut dev cycles by 30%, but over-reliance risks “agent drift,” where costs balloon from unchecked API calls. At $0.02 per 1K tokens, a run that burns 1M tokens costs $20, and it adds up.
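One way to keep unchecked API calls from ballooning is a hard spending cap around the agent loop. The sketch below assumes the $0.02 per 1K tokens figure quoted above; the class `TokenBudget`, the exception `BudgetExceeded`, and the function `run_agent` are hypothetical names, and each "step" is reduced to a token count rather than a real model call.

```python
PRICE_PER_1K_TOKENS = 0.02  # USD, the figure quoted in the text


class BudgetExceeded(RuntimeError):
    """Raised when the next step would push spending past the cap."""


class TokenBudget:
    def __init__(self, max_usd: float, price_per_1k: float = PRICE_PER_1K_TOKENS):
        self.max_usd = max_usd
        self.price_per_1k = price_per_1k
        self.tokens_used = 0

    @property
    def spent_usd(self) -> float:
        return self.tokens_used / 1000 * self.price_per_1k

    def charge(self, tokens: int) -> None:
        """Record token usage, refusing any charge that would exceed the cap."""
        projected = (self.tokens_used + tokens) / 1000 * self.price_per_1k
        if projected > self.max_usd:
            raise BudgetExceeded(f"step would cost ${projected:.2f} in total")
        self.tokens_used += tokens


def run_agent(step_token_counts: list[int], budget: TokenBudget) -> int:
    """Run hypothetical agent steps until they finish or the budget is hit.

    Returns the number of steps that completed.
    """
    completed = 0
    for tokens in step_token_counts:
        try:
            budget.charge(tokens)
        except BudgetExceeded:
            break  # hand control back to a human instead of drifting
        completed += 1
    return completed
```

With a $1.00 cap and steps of 20,000 tokens each ($0.40 per step), the loop completes two steps and stops before the third. Checking the projected total before the call, rather than after, is the design choice that keeps the cap hard.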

Mixture of Experts (MoE) models shine locally. Streaming experts on M3/M4 Macs means routing inference dynamically and activating only 2-8 of 128 experts per token. Tools like llama.cpp hit 45 tokens/sec on a MacBook Pro for Mixtral-8x7B. This democratizes heavy models: no GPU farms needed. Power draw? 25W idle, with peaks at 80W, which beats cloud bills. Why it matters: edge AI slashes latency to 200ms and enables offline agents, such as finance and crypto trading bots that verify on-chain data without leaks.
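The "activate only a few experts per token" idea can be sketched in a few lines. This is one common top-k gating variant (softmax over the selected logits only), written in plain Python under the assumption that each expert is just a callable; the names `top_k_route` and `moe_forward` are illustrative and do not reflect how llama.cpp implements routing.

```python
import math


def top_k_route(gate_logits: list[float], k: int) -> list[tuple[int, float]]:
    """Pick the k highest-scoring experts and renormalise their weights.

    Returns (expert_index, weight) pairs whose weights sum to 1.
    """
    if not 1 <= k <= len(gate_logits):
        raise ValueError("k must be between 1 and the number of experts")
    # Indices of the k largest logits, highest first (ties keep index order).
    chosen = sorted(range(len(gate_logits)),
                    key=lambda i: gate_logits[i], reverse=True)[:k]
    # Softmax over the chosen logits only; subtract the max for stability.
    peak = gate_logits[chosen[0]]
    exps = [math.exp(gate_logits[i] - peak) for i in chosen]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(chosen, exps)]


def moe_forward(x: float, gate_logits: list[float], experts: list, k: int = 2) -> float:
    """Weighted sum over the selected experts; the others are never called."""
    return sum(w * experts[i](x) for i, w in top_k_route(gate_logits, k))
```

Because only the chosen experts run, compute per token scales with k rather than with the total expert count, which is what makes streaming the rest from slower storage plausible on a laptop.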

March Model Releases and Tooling Shifts

March 2026 dropped heavy hitters: Meta’s Llama 4 (405B params, MoE-hybrid, 92% MMLU), Mistral Large 2 (123B, tops coding evals), and xAI’s Grok-2 (314B, uncensored for security red-teaming). Benchmarks show Llama 4 edging GPT-4.5 by 2% on GPQA, but inference costs $1.20 per million tokens versus $0.15 for open-source options. Vibe porting, that is, fine-tuning to match proprietary “feels” like Claude’s verbosity, uses LoRA on 7B bases, preserving 85% stylistic fidelity per EleutherAI metrics.
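For readers new to LoRA, the appeal is that it trains a low-rank pair of matrices instead of the full weight. The sketch below shows the standard update W' = W + (alpha / r) * B * A and the resulting parameter count. The layer size (4096 x 4096) and rank (8) in the usage note are hypothetical values chosen for illustration, not figures from any of the releases above.

```python
def lora_trainable_params(d_out: int, d_in: int, rank: int) -> int:
    """Parameters in the low-rank pair B (d_out x rank) and A (rank x d_in)."""
    return rank * (d_out + d_in)


def merge_lora(W, B, A, alpha: float, rank: int):
    """Fold a trained adapter back into the base weight.

    Computes W + (alpha / rank) * (B @ A) using plain nested lists.
    """
    scale = alpha / rank
    rows, cols = len(W), len(W[0])
    return [
        [
            W[i][j] + scale * sum(B[i][r] * A[r][j] for r in range(rank))
            for j in range(cols)
        ]
        for i in range(rows)
    ]
```

For a hypothetical 4096 x 4096 projection at rank 8, `lora_trainable_params(4096, 4096, 8)` gives 65,536 trainable parameters against 16,777,216 in the full matrix, or about 0.39%.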

Hardware stack: M4 Max for local runs, paired with Ollama for orchestration. Crypto/security tools: Monero wallets via CLI, Trezor for cold storage, and Signal for comms. No surprises here: battle-tested tools win over flashy newcomers. Shipped items include agent frameworks hitting GitHub trending and Mac-optimized MoE routers.

Offbeat: Museums highlight timeless tech. Bletchley Park’s Bombe replicas remind us Enigma fell to math, not hype. Computer History Museum’s UNIVAC tapes underscore data persistence risks.

Overall, this newsletter distills signal from AI noise for $10/month. Value? Strong if you’re building agents or securing deps, since it saves hours vs. scattered blogs. But free alternatives like arXiv and HN cover roughly 70% of the same ground. Subscribe if patterns accelerate your stack; otherwise, audit your PyPI dependencies first.