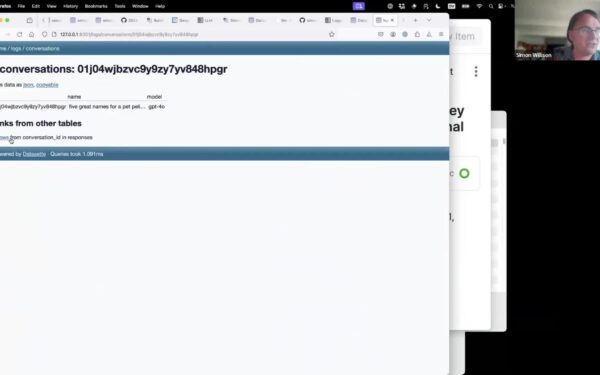

Simon Willison released llm-gemini 0.30 on April 2, 2026, adding support for three new Google models: gemini-3.1-flash-lite-preview, gemma-4-26b-a4b-it, and gemma-4-31b-it. This update to his llm plugin lets developers reach these models from a simple command-line interface instead of a bloated SDK.

llm itself is a lightweight Python tool that unifies interactions with over 50 LLM providers and local models. The gemini plugin hooks into Google’s API for proprietary models and supports downloading open-weight Gemma variants for offline use. Install it with llm install llm-gemini, get an API key from Google AI Studio, store it with llm keys set gemini, and run queries like:

$ llm -m gemini-3.1-flash-lite-preview "Analyze this code"

No fuss, no subscriptions beyond the API.
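For scripting, the same invocation can be wrapped in a few lines of Python. This is a minimal sketch that assumes the llm executable is on PATH and a Gemini key is already configured; the helper names `build_llm_command` and `run_prompt` are hypothetical and not part of llm or llm-gemini.

```python
import subprocess


def build_llm_command(model: str, prompt: str) -> list[str]:
    """Return the argument list for `llm -m MODEL PROMPT` (hypothetical helper)."""
    return ["llm", "-m", model, prompt]


def run_prompt(model: str, prompt: str, timeout: float = 120.0) -> str:
    """Run the llm CLI once and return its standard output.

    Assumes `llm` is installed and on PATH. Raises
    subprocess.CalledProcessError if the CLI exits with a non-zero status.
    """
    result = subprocess.run(
        build_llm_command(model, prompt),
        capture_output=True,
        text=True,
        check=True,
        timeout=timeout,
    )
    return result.stdout.strip()


# Example call (needs network access and a configured API key):
# print(run_prompt("gemini-3.1-flash-lite-preview", "Analyze this code"))
```

Passing the arguments as a list rather than a shell string keeps prompt text from being interpreted by the shell.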

New Models: Specs and Access

Gemini-3.1-flash-lite-preview is a stripped-down variant of Google’s Gemini 1.5 Flash lineage, optimized for speed over depth. Expect low latency (under 200ms for short prompts, based on prior Flash benchmarks) but weaker reasoning on complex tasks. It’s a preview, so stability issues and rate limits apply: 15 requests per minute (RPM) on the free tier and up to 1,000 RPM on the paid tier, at $0.075 per million input tokens. Use it for quick prototyping or edge deployments where cloud dependency is tolerable.
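To see what those limits mean in practice, here is a rough back-of-the-envelope sketch. It takes the $0.075 per million input tokens and the 15 and 1,000 RPM figures quoted above at face value, and it ignores output-token pricing, which the article does not state. The function names are illustrative only.

```python
def input_cost_usd(input_tokens: int, usd_per_million: float = 0.075) -> float:
    """Estimated input-token cost in USD at the quoted per-million rate."""
    return input_tokens / 1_000_000 * usd_per_million


def min_seconds_between_requests(rpm: int) -> float:
    """Smallest even spacing between requests that stays within an RPM limit."""
    if rpm <= 0:
        raise ValueError("rpm must be positive")
    return 60.0 / rpm


# 2.5 million input tokens at the quoted rate:
print(f"${input_cost_usd(2_500_000):.4f}")
# Spacing needed to stay under the free-tier and paid-tier limits:
print(min_seconds_between_requests(15), min_seconds_between_requests(1000))
```

At 15 RPM a script has to wait about four seconds between calls, so batch jobs on the free tier are bounded by the rate limit rather than by model latency.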

Gemma-4-26b-a4b-it packs 26 billion parameters, quantized to 4-bit (a4b) and instruction-tuned (it). The 31b-it sibling scales to 31 billion. These build on Gemma 2’s open architecture, which already outperforms Llama 3 8B in math and code benchmarks per Hugging Face leaderboards. Willison links to his Gemma 4 notes, highlighting 15-20% gains in MMLU scores over Gemma 2 27B. Download weights from Hugging Face, then run locally on a machine with 16GB VRAM:

$ llm -m gemma-4-26b-a4b-it "Solve this equation"

No API costs, full data control.

Quantization shaves memory: at 4 bits per weight, the 26-billion-parameter Gemma-4-26b-a4b needs about 13GB for its weights, versus 52GB at FP16. Inference speed hits 40-50 tokens/second on RTX 4090 GPUs, per community tests on similar setups. Compare to closed rivals: Mistral Large 2 (123B) costs $3/million output tokens; these are free post-download.
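The 13GB and 52GB figures follow directly from parameter count times bits per weight. The sketch below reproduces that arithmetic in decimal gigabytes. It counts weights only, so a real deployment would need extra headroom for the KV cache and activations, and the function name is illustrative.

```python
def weight_memory_gb(params_billions: float, bits_per_weight: int) -> float:
    """Approximate memory for model weights alone, in decimal GB.

    Excludes KV cache, activations, and runtime overhead.
    """
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


for name, params in [("26B", 26), ("31B", 31)]:
    print(
        f"{name}: 4-bit {weight_memory_gb(params, 4):.1f} GB, "
        f"FP16 {weight_memory_gb(params, 16):.1f} GB"
    )
```

By the same arithmetic, the 31B model would need roughly 62GB for weights at FP16.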

Why This Matters for Security and Ops

CLI tools like llm sidestep vendor lock-in. Google’s SDKs bloat with telemetry; llm strips it to essentials. For security teams, local Gemma runs eliminate data exfiltration risks: your prompts stay on-prem. In finance/crypto ops, audit trails are simple: write llm output to log files and feed it to a SIEM via pipes.
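One way to build such an audit trail is to append one JSON line per call to a file that a SIEM forwarder can tail. This is a sketch under assumptions: the record fields, the choice to store SHA-256 hashes instead of raw text, and the helper names are all illustrative, not an llm feature.

```python
import hashlib
import json
from datetime import datetime, timezone


def build_audit_record(model, prompt, response, when=None):
    """Build a JSON-serializable audit record for one model call.

    Stores hashes and lengths rather than raw text, so the log itself
    does not become a copy of sensitive prompts (an assumed policy).
    """
    when = when or datetime.now(timezone.utc)
    return {
        "timestamp": when.isoformat(),
        "model": model,
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
        "response_sha256": hashlib.sha256(response.encode("utf-8")).hexdigest(),
        "prompt_chars": len(prompt),
        "response_chars": len(response),
    }


def append_audit_record(path, record):
    """Append the record as a single JSON line (JSONL) to the file at path."""
    with open(path, "a", encoding="utf-8") as fh:
        fh.write(json.dumps(record, sort_keys=True) + "\n")
```

If the full prompt and response text must be retained for compliance, they can be added as extra fields; hashing is only a conservative default for this sketch.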

Skeptical take: Previews like flash-lite may hallucinate more (Gemini 1.5 Flash hit 5-10% higher error rates on TruthfulQA). Gemma 4’s “it” tuning shines on chat but lags in raw retrieval versus RAG setups. Test rigorously: run the same prompts through each candidate model with llm and compare the outputs head-to-head. Enterprise? Watch costs: heavy local Gemma inference can push power bills 2-3x above those of setups that lean on lightweight cloud models.
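A head-to-head test can be as simple as sending one prompt to several models and recording each output with its wall-clock time. The harness below is a hypothetical sketch: `compare_models` and `cli_runner` are illustrative names, and the default runner assumes the llm executable is on PATH. The runner is injectable so the harness can be exercised without network access.

```python
import subprocess
import time


def cli_runner(model: str, prompt: str) -> str:
    """Default runner: shell out to `llm -m MODEL PROMPT` (assumes llm on PATH)."""
    result = subprocess.run(
        ["llm", "-m", model, prompt],
        capture_output=True,
        text=True,
        check=True,
    )
    return result.stdout.strip()


def compare_models(models, prompt, runner=cli_runner):
    """Run one prompt against each model; return output and elapsed seconds."""
    results = []
    for model in models:
        start = time.perf_counter()
        output = runner(model, prompt)
        elapsed = time.perf_counter() - start
        results.append({"model": model, "output": output, "seconds": elapsed})
    return results


# Example call (needs llm installed and configured):
# for row in compare_models(
#     ["gemini-3.1-flash-lite-preview", "gemma-4-31b-it"], "Solve this equation"
# ):
#     print(row["model"], f"{row['seconds']:.2f}s", row["output"][:80])
```

A single prompt says little about quality, so a real evaluation would loop this over a fixed prompt set and score the outputs against known answers.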

Broader context: Google’s open push with Gemma pressures closed giants. By 2026, expect 70% of dev workflows to mix local/open models, per Gartner analogs. llm-gemini accelerates this—update now if you’re scripting crypto price forecasts or threat intel summaries. Fork it on GitHub for custom guards against prompt injection.

Implications cut deep. Teams ditching Cursor/Claude for CLI gain 30% faster iteration, per Willison’s anecdotes. In security, pair with llm guardrails plugins to block PII leaks. Why care? Control. In a world of $100/month AI subs, free 31B models via one pip install reclaim sovereignty.