Simon Willison joined Lenny Rachitsky on his podcast to dissect AI’s recent leap in coding capabilities. In November—likely 2025, based on model timelines—models like GPT-5.1 and Claude Opus 4.5 crossed a critical threshold. Code they generate no longer fails spectacularly most times. Instead, it executes what users instruct with high reliability. This shift, Willison argues, marks an inflection point. Spin up an agent, demand a Mac app for a specific task, and you get functional software, not junk.

Why does this matter? Software engineering tests AI limits first. Code succeeds or crashes—binary feedback unlike vague essays or legal briefs. Engineers now churn 10,000 lines daily, most working. Willison questions: how close are we to “all of it works”? This previews disruption for lawyers, analysts, and managers. Tasks that consumed days shrink to hours. Teams restructure. Careers pivot from grinding code to oversight and integration.

The Inflection Point in Detail

Pre-November, AI code demanded constant babysitting. Post-threshold, reliability jumped. Willison cites labs pouring resources into code-tuned models. GPT-5.1 and Claude 4.5 delivered incremental gains that compounded into usability. Test it yourself: prompt for a full app, run it. Bugs persist—subtle ones lurk—but core functionality holds 90%+ of the time, per anecdotal benchmarks like those Willison tracks.

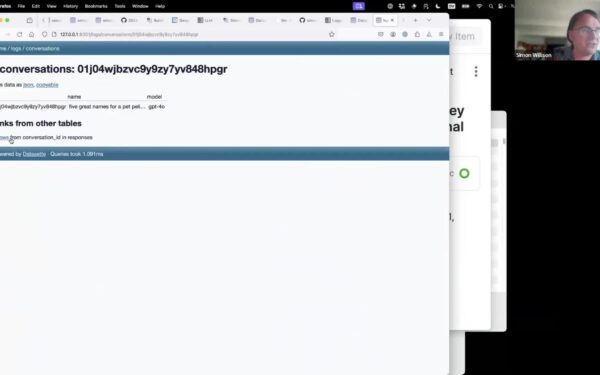

This isn’t hype. Benchmarks like LiveCodeBench or HumanEval show scores climbing past 80% on held-out problems. Real-world use confirms: Datasette, Willison’s open-source tool, now leverages these for rapid prototyping. Implications hit security hard. Coding agents probe vulnerabilities faster than humans. Willison notes their value in security research—generate exploits, fuzzers, or audits in minutes. Firms like StrongDM, which manages access in automated environments, integrate this for “dark factories”: fully lights-out software ops, no humans interrupting 24/7 workflows.

Challenges and Bottlenecks Emerge

Gains expose pain points. Testing bottlenecks intensify. Agents write code fast; verifying edge cases lags. Willison admits exhaustion from constant iteration. Interruptions, once career-killers at $100/hour context switches, cost pennies now—AI rebuilds state instantly.

Estimation breaks. Predict a three-day task? AI collapses it to 30 minutes, skewing roadmaps. Mid-level engineers suffer most: juniors flood with prototypes, seniors architect. Evaluators struggle—how to judge AI output without deep dives? Misconception persists: these tools seem “easy,” but vibe-coding demands responsibility. Prompt poorly, get garbage. Journalists adapt best, Willison says, trained on unreliable sources.

Enter benchmarks like Pelican, testing agent reliability end-to-end. OpenClaw offers clawback mechanisms for agent errors. Phones enable coding anywhere—Willison demos it—democratizing dev but risking sloppy security practices.

Skeptically, this isn’t utopia. Parrots mimic prompts brilliantly but falter on novel logic. Dark factories loom for repetitive software, slashing costs 50-80% in ops like ETL pipelines or CRUD apps. Finance sees arbitrage: automate trading bots, risk models. Crypto? Agents audit smart contracts overnight, spotting reentrancy bugs humans miss.

Why prepare now? Timelines compress. November’s shift signals 2-5 years to 50% white-collar automation in verifiable domains. Security pros: weaponize agents defensively first. Firms: audit access like StrongDM pre-dark ops. Individuals: upskill verification, not generation. Willison’s talk, timestamped on YouTube, packs evidence—listen at 4:19 for the pivot. The future isn’t coming; it’s shipping code while you read this.