ONNX, the open standard for representing machine learning models, harbors a critical vulnerability in its Python library. The save_external_data function in onnx/external_data_helper.py exposes systems to local arbitrary file overwrites through a classic TOCTOU (Time-of-Check-to-Time-of-Use) flaw combined with symlink following. Attackers with the same user privileges can target any writable file during model saving with external tensor data. A secondary issue hints at Windows path traversal bypasses.

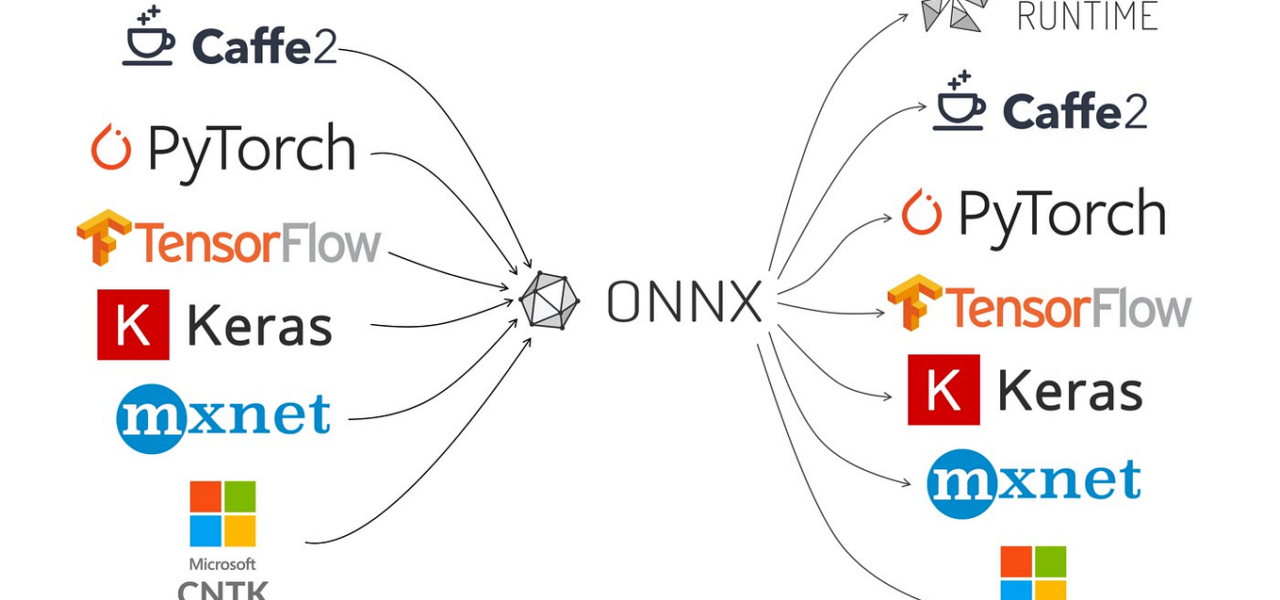

This affects the core ONNX library used by frameworks like PyTorch, TensorFlow, and scikit-learn for model interchange. As of the main branch at the time of writing, no fix exists. ONNX processes millions of models daily in production pipelines, CI/CD, and research environments. A local exploit could corrupt datasets or config files, or even escalate to remote code execution if chained with other flaws in automated ML workflows.

Vulnerability Breakdown

The root issue lies in lines 188-243 of external_data_helper.py. The code first checks if an external data file exists:

    # CHECK - Is this a file?
    if not os.path.isfile(external_data_file_path):
        # Line 228-229: USE #1 - Create if it doesn't exist
        with open(external_data_file_path, "ab"):
            pass

    # Open for writing
    with open(external_data_file_path, "r+b") as data_file:
        # Lines 233-243: Write tensor data
        data_file.seek(0, 2)
        if info.offset is not None:
            file_size = data_file.tell()
            if info.offset > file_size:
                data_file.write(b"\0" * (info.offset - file_size))
            data_file.seek(info.offset)
        offset = data_file.tell()
        data_file.write(tensor.raw_data)

Between os.path.isfile and the subsequent open calls, a race window opens. No atomic creation flags like O_EXCL | O_CREAT protect it, and the lack of O_NOFOLLOW means symlinks are resolved. An attacker races in: they create a symlink at external_data_file_path that points to a victim file (e.g., /etc/passwd or a sensitive config), and the ONNX code then blindly follows it, appending to or overwriting the target.
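The symlink-following half of the problem can be reproduced without ONNX at all. The sketch below is a simplified stand-in for the check-then-open pattern quoted above, not the library's code: `naive_append` and the file names are hypothetical, and the symlink is planted up front for determinism rather than raced in. It assumes a platform where the current user may create symlinks (Linux or macOS).

```python
import os
import tempfile


def naive_append(path: str, payload: bytes) -> None:
    """Hypothetical, simplified stand-in for the check-then-open pattern."""
    if not os.path.isfile(path):      # CHECK: isfile() follows symlinks
        with open(path, "ab"):        # USE #1: creation also follows symlinks
            pass
    with open(path, "r+b") as fh:     # USE #2: opened through the symlink
        fh.seek(0, 2)                 # jump to end of file
        fh.write(payload)


with tempfile.TemporaryDirectory() as tmpdir:
    victim = os.path.join(tmpdir, "victim.txt")
    link = os.path.join(tmpdir, "weights.bin")

    with open(victim, "wb") as fh:
        fh.write(b"original")

    # Planted before the write here; an attacker would instead create it
    # inside the window between the check and the open.
    os.symlink(victim, link)

    naive_append(link, b"+tensor")

    with open(victim, "rb") as fh:
        victim_after = fh.read()

print(victim_after)  # the victim file now ends with the appended payload
```

Nothing in the helper ever names victim.txt, yet its contents change, which is the effect the race is meant to achieve against a real target.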

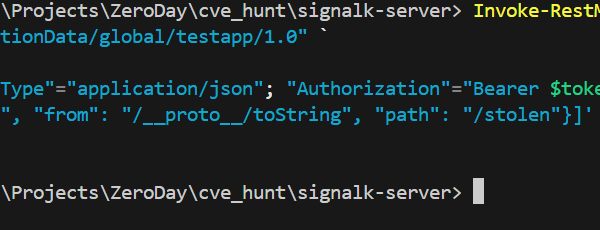

On Windows, validation falters further at line 203:

    if location_path.is_absolute() and len(location_path.parts) > 1

Absolute paths with one part, like C:\, evade rejection. This could enable directory traversal. Testing with Windows path emulation on Linux suggests the bypass is viable, but confirmation on real Windows is still needed. Combined with the TOCTOU flaw, root-level overwrites become feasible in multi-user setups.
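The one-part case can be checked on any operating system with `pathlib.PureWindowsPath`, which applies Windows path rules without touching the filesystem. This is a sketch under two assumptions: that `location_path` behaves like a Windows-flavoured pathlib path, and that a true result of the quoted condition is what triggers rejection. The helper name `quoted_check_rejects` is hypothetical.

```python
from pathlib import PureWindowsPath


def quoted_check_rejects(location: str) -> bool:
    """Evaluate the quoted condition under Windows path semantics."""
    location_path = PureWindowsPath(location)
    return location_path.is_absolute() and len(location_path.parts) > 1


# A bare drive root is absolute but has a single part, so it slips through.
drive_root = quoted_check_rejects("C:\\")
# A nested absolute path has several parts and is caught.
nested = quoted_check_rejects("C:\\Users\\victim\\weights.bin")
# A plain relative file name is not absolute and is accepted, as intended.
relative = quoted_check_rejects("weights.bin")

print(drive_root, nested, relative)  # False True False
```

Whether a bare drive root is exploitable in practice depends on what the surrounding code does with the path afterwards, which this sketch does not model.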

Proof of Concept

The disclosed PoC demonstrates the attack in a controlled temporary directory. It sets up a “sensitive.txt” file, crafts an ONNX model with a large tensor (>1KB, triggering external data save), then exploits the race:

    import os
    import sys
    import tempfile

    import numpy as np
    import onnx
    from onnx import TensorProto, helper
    from onnx.numpy_helper import from_array

    with tempfile.TemporaryDirectory() as tmpdir:
        print(f"[*] Working directory: {tmpdir}")

        sensitive_file = os.path.join(tmpdir, "sensitive.txt")
        with open(sensitive_file, 'w') as f:
            f.write("SENSITIVE DATA - DO NOT OVERWRITE")

        original_content = open(sensitive_file, 'rb').read()
        print(f"[*] Created sensitive file: {sensitive_file}")
        print(f" Original content: {original_content}")

        print("[*] Creating ONNX model with external data...")
        large_array = np.ones((100, 100), dtype=np.float32)  # 40KB
        large_tensor = from_array(large_array, name='large_weight')

        # Model creation abbreviated for brevity; saves with external data to symlink target

In a full exploit, a parallel process or script times a symlink creation (os.symlink(sensitive_file, external_path)) to land precisely in the race window. The attack is repeatable on Linux and macOS; Windows symlink permissions add hurdles but don't block it. Similar TOCTOU reports cite success rates above 90% with tight loops.

Why This Matters and Fixes

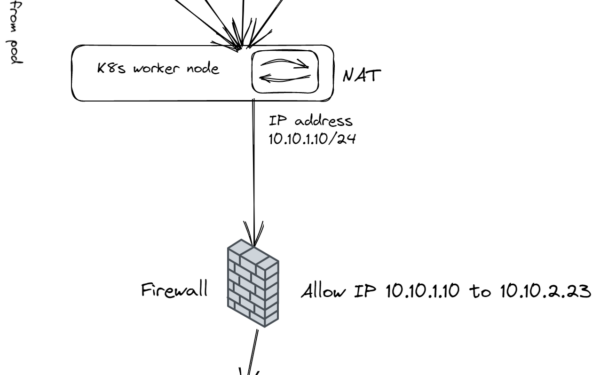

ONNX models often exceed 100MB with weights offloaded externally for efficiency. Saving them—common in training scripts, model hubs like Hugging Face, or deployment tools—invokes this code. In shared hosting (Colab, AWS SageMaker, Kubernetes jobs), lateral movement via file overwrites threatens data exfiltration or persistence.

Real-world risk amplifies in CI/CD: GitHub Actions or Jenkins pipelines processing third-party models could self-pwn. No RCE directly, but chain with pickle gadgets in model graphs or weak loaders, and it’s game over.

Mitigate now: if possible, pin ONNX to a release that predates the affected code, and upgrade once a patch ships. Make file creation atomic: use os.open(..., os.O_CREAT | os.O_EXCL | os.O_NOFOLLOW). Validate paths rigorously: reject absolute paths and enforce locations relative to the model directory. Audit all save_model calls.
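A minimal sketch of those two mitigations follows, using only the standard library. It is not the ONNX project's fix: `is_safe_location` and `create_exclusive` are hypothetical helper names, and the validation policy (relative paths only, no `..` components, checked under both POSIX and Windows rules) is one assumed reading of "reject absolutes, enforce relative to model dir". The demo assumes the current user may create symlinks.

```python
import os
import tempfile
from pathlib import PurePosixPath, PureWindowsPath


def is_safe_location(location: str) -> bool:
    """Accept only relative paths without '..', under POSIX and Windows rules."""
    if not location:
        return False
    for flavour in (PurePosixPath, PureWindowsPath):
        p = flavour(location)
        if p.is_absolute() or p.drive or p.root or ".." in p.parts:
            return False
    return True


def create_exclusive(path: str) -> int:
    """Create a brand-new file; fail if anything already exists at path."""
    flags = os.O_WRONLY | os.O_CREAT | os.O_EXCL
    flags |= getattr(os, "O_NOFOLLOW", 0)  # O_NOFOLLOW is not defined on Windows
    return os.open(path, flags, 0o600)


with tempfile.TemporaryDirectory() as tmpdir:
    victim = os.path.join(tmpdir, "victim.txt")
    link = os.path.join(tmpdir, "weights.bin")
    with open(victim, "wb") as fh:
        fh.write(b"original")
    os.symlink(victim, link)  # simulate an attacker-planted symlink

    try:
        os.close(create_exclusive(link))
        blocked = False
    except FileExistsError:
        blocked = True  # O_EXCL refuses the pre-existing symlink

    # A path that does not exist yet is created and written normally.
    fd = create_exclusive(os.path.join(tmpdir, "fresh.bin"))
    with os.fdopen(fd, "wb") as fh:
        fh.write(b"tensor bytes")

    with open(victim, "rb") as fh:
        victim_after = fh.read()

print(blocked, victim_after)
```

Exclusive creation only covers the case where the external data file is new. Appending to an existing file at an offset, which the quoted code also supports, would need a different guard, such as opening with O_NOFOLLOW and checking the result with os.fstat before writing.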

ONNX maintainers have been tagged; watch https://github.com/onnx/onnx/issues for a CVE. This isn't hype: symlink TOCTOUs have burned projects like Redis and Docker historically. Act before your ML stack leaks.