Kedro, the open-source Python framework for building data pipelines, contained a path traversal vulnerability in its versioned dataset loading. Attackers can exploit unsanitized version strings to read arbitrary files outside the intended directory. QuantumBlack, the maintainers, patched it in the recently released version 1.3.0. If you run Kedro pipelines where users can influence version tags, upgrade immediately to avoid data leaks or poisoning.

The flaw sits in the _get_versioned_path() function in kedro/io/core.py, which joins user-supplied version strings into filesystem paths without checking for traversal sequences such as ../. Kedro treats each version as a subdirectory under a dataset’s root, so a malicious version like ../../etc/passwd escapes that directory, and the pipeline loads a sensitive file instead of the expected data.
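
To make the failure mode concrete, here is a minimal sketch of the unsafe pattern. It is not Kedro’s actual source: it assumes Kedro’s documented versioned layout of filepath/version/filename, and the helper names naive_versioned_path and escapes_root are hypothetical.

```python
import posixpath
from pathlib import PurePosixPath


def naive_versioned_path(filepath: str, version: str) -> PurePosixPath:
    # Hypothetical stand-in for the unsafe pattern: the version string is
    # joined in as a subdirectory with no validation at all.
    fp = PurePosixPath(filepath)
    return fp / version / fp.name


def escapes_root(filepath: str, version: str) -> bool:
    # Collapse ".." segments lexically, then check whether the result still
    # sits under the dataset's own directory (the filepath itself).
    root = posixpath.normpath(filepath)
    candidate = posixpath.normpath(str(naive_versioned_path(filepath, version)))
    return not (candidate == root or candidate.startswith(root + "/"))


if __name__ == "__main__":
    ds = "data/01_raw/example.csv"
    for v in ["2024-05-01T12.30.45.123Z", "../../etc/passwd", "/etc/passwd"]:
        print(repr(v), "->", naive_versioned_path(ds, v), "escapes:", escapes_root(ds, v))
```

Under this assumed layout the dataset’s filename is still appended after the version, and an absolute version string discards the dataset root entirely, because pathlib’s / operator restarts at any absolute segment.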

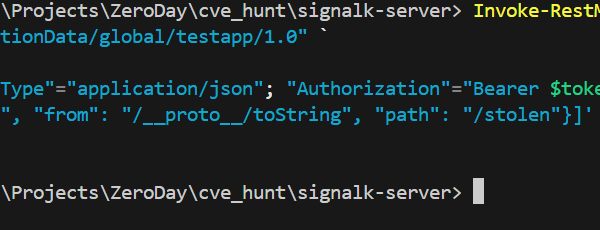

Exploitation reaches through three main vectors. First, a catalog.load(..., version="malicious") call in Python code. Second, DataCatalog.from_config(..., load_versions={"dataset": "malicious"}). Third, the CLI:

$ kedro run --load-versions=dataset:../../secrets.yaml

In shared environments, such as multi-tenant Jupyter hubs or CI/CD pipelines, an attacker who controls a version tag can read configs, API keys, or other tenants’ datasets.
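
The CLI vector ends up in the same place as the other two: a mapping from dataset name to version string. The sketch below is hypothetical (parse_load_versions is not Kedro’s CLI code, and comma-separated entries are an assumption), but it shows that splitting a dataset:version argument on its first colon passes a traversal string through untouched.

```python
from __future__ import annotations


def parse_load_versions(arg: str) -> dict[str, str]:
    # Hypothetical parser for a "dataset:version[,dataset:version...]" string.
    # Splitting on the FIRST colon only keeps the version intact, traversal and all.
    result: dict[str, str] = {}
    for item in arg.split(","):
        item = item.strip()
        if not item:
            continue
        name, sep, version = item.partition(":")
        if not sep or not name or not version:
            raise ValueError(f"expected dataset:version, got {item!r}")
        result[name] = version
    return result


if __name__ == "__main__":
    lv = parse_load_versions("dataset:../../secrets.yaml")
    print(lv)  # {'dataset': '../../secrets.yaml'}
    # The same mapping could then be handed to
    # DataCatalog.from_config(..., load_versions=lv), i.e. the second vector.
```

Nothing in the parsing step rejects the payload, which is why validation has to happen on the version value itself.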

Real-World Risks

Why does this matter? Data science pipelines process large volumes of sensitive information: financial records, health data, proprietary models. Kedro users, often at banks, pharma firms, or consultancies like McKinsey’s QuantumBlack, run these pipelines in production. A traversal exploit can poison a model with fake data or exfiltrate secrets during a routine kedro run. In one hypothetical scenario, a collaborator submits a poisoned version tag via GitHub Actions, loading /etc/shadow into a Pandas DataFrame for “analysis.”

Kedro boasts over 10,000 GitHub stars and powers workflows at companies handling petabytes. But data teams lag on dependency updates: pipenv locks and conda environments gather dust. Snyk flagged this as high severity (CVSS likely 7.5+), yet without broad scanning, vulnerable versions persist. Similar issues have plagued DVC (another pipeline tool) in the past, underscoring sloppy path handling in Python’s data ecosystem.

Test it yourself on a vulnerable version (pre-1.3.0). Set up a versioned dataset at local://example with a versions directory. Then:

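```python
from kedro.io import DataCatalog

# Catalog config defining a versioned "example" dataset (elided here)
catalog = DataCatalog.from_config(...)

# The version string climbs out of the dataset's versions directory
df = catalog.load("example", version="../../proc/version")  # Escapes to /proc/version

print(df)  # Prints kernel version, not dataset
```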

This reads host files directly. In Dockerized runs, it can also reach host files whenever host directories are mounted into the container as volumes.

Fix It Now

Upgrade to Kedro 1.3.0 or later:

$ pip install "kedro>=1.3.0"

The patch sanitizes versions by stripping .., path separators, and absolute paths, confining loads to the dataset root.
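
Because stale lock files and conda environments are part of the problem, it can help to confirm which Kedro an environment actually has installed. This stdlib-only sketch (release_tuple and kedro_is_patched are hypothetical names) reads the installed version via importlib.metadata and compares its numeric release against 1.3.0.

```python
from __future__ import annotations

import re
from importlib.metadata import PackageNotFoundError, version

PATCHED = (1, 3, 0)  # first fixed release, per this article


def release_tuple(ver: str) -> tuple[int, int, int]:
    # Pull the leading MAJOR.MINOR.PATCH digits. Suffixes such as "rc1" are
    # ignored (a simplification; packaging.version.Version handles full PEP 440).
    m = re.match(r"(\d+)(?:\.(\d+))?(?:\.(\d+))?", ver)
    if m is None:
        raise ValueError(f"unrecognized version string: {ver!r}")
    return tuple(int(g or 0) for g in m.groups())


def kedro_is_patched() -> bool | None:
    # None means Kedro is not installed in this environment.
    try:
        installed = version("kedro")
    except PackageNotFoundError:
        return None
    return release_tuple(installed) >= PATCHED


if __name__ == "__main__":
    print("kedro patched:", kedro_is_patched())
```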

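No upgrade? Work around it by validating inputs. Reject any version containing .., a path separator, or whitespace, for example with re.search(r'\.\.|[\\/]|\s', version). Use re.search rather than re.match, which only checks the start of the string, and don’t reject single dots, since Kedro’s default timestamp versions such as 2024-05-01T12.30.45.123Z contain them. Wrap catalog loads in a sanitizer function. For the CLI, parse --load-versions arguments server-side if runs are automated.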
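
A minimal sketch of such a sanitizer, under stated assumptions: it prefers an allowlist for Kedro’s default timestamp format and otherwise falls back to a denylist (.., either slash, whitespace) searched anywhere in the string. validate_version, safe_load, and validate_load_versions are hypothetical names; the wrapper only assumes the catalog exposes a load(name, version=...) method like the catalog.load call above.

```python
from __future__ import annotations

import re
from typing import Any, Protocol

# Kedro's default timestamp version format, e.g. "2024-05-01T12.30.45.123Z".
TIMESTAMP_VERSION = re.compile(r"\d{4}-\d{2}-\d{2}T\d{2}\.\d{2}\.\d{2}\.\d{3}Z")
# Denylist for custom tags: "..", either slash, or any whitespace anywhere.
UNSAFE = re.compile(r"\.\.|[\\/]|\s")


def validate_version(version: str | None, strict: bool = True) -> str | None:
    # None means "latest version" and is passed through unchanged.
    if version is None:
        return None
    if strict and not TIMESTAMP_VERSION.fullmatch(version):
        raise ValueError(f"version {version!r} is not a timestamp version")
    if UNSAFE.search(version):
        raise ValueError(f"version {version!r} contains path characters")
    return version


class SupportsLoad(Protocol):
    # Anything with a catalog-style load(name, version=...) method.
    def load(self, name: str, version: str | None = None) -> Any: ...


def safe_load(catalog: SupportsLoad, name: str, version: str | None = None,
              strict: bool = True) -> Any:
    # Hypothetical wrapper: validate first, then defer to the real catalog.
    return catalog.load(name, version=validate_version(version, strict))


def validate_load_versions(load_versions: dict[str, str],
                           strict: bool = True) -> dict[str, str | None]:
    # Check a whole mapping before it reaches DataCatalog.from_config(...).
    return {name: validate_version(v, strict) for name, v in load_versions.items()}
```

Strict mode is the safer default; strict=False exists only for teams that use custom version tags and can tolerate a denylist.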

Fair credit: QuantumBlack disclosed transparently via GitHub Advisory (GHSA-m4c5-4v5h-3f2q), and the patch landed fast, days after discovery. But skepticism remains: Kedro’s CLI parsing invites abuse in scripts. Data tools need battle-tested utilities like pathlib.Path().resolve().relative_to(base) from day one. Scan your dependencies with pip-audit or Snyk; this vulnerability shows how “safe” frameworks falter on basics.
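
The resolve-then-relative_to idiom can be sketched as a small containment check. confined_path is a hypothetical helper, not part of Kedro: it resolves both the base and the candidate, then lets Path.relative_to raise ValueError if the candidate lands outside the base.

```python
from __future__ import annotations

from pathlib import Path


def confined_path(base: str | Path, *parts: str) -> Path:
    # Resolve symlinks and ".." segments, then require the result to stay
    # inside the resolved base directory.
    root = Path(base).resolve()
    candidate = root.joinpath(*parts).resolve()
    candidate.relative_to(root)  # raises ValueError when candidate escapes root
    return candidate


if __name__ == "__main__":
    base = "/srv/data/example.csv"
    print(confined_path(base, "2024-05-01T12.30.45.123Z", "example.csv"))
    try:
        confined_path(base, "../../etc/passwd", "example.csv")
    except ValueError as exc:
        print("rejected:", exc)
```

Unlike a purely lexical check, resolve() also follows symlinks, so a link inside the versions directory that points elsewhere is caught too. On Python 3.9+, Path.is_relative_to offers a boolean alternative to catching ValueError.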

Bottom line: patch today. In finance or crypto pipelines, where Kedro shines for reproducible flows, this blocks attackers from turning version tags into backdoors. Your data integrity depends on it.