Datasette-llm-usage just hit version 0.2a0. Developers tracking LLM token consumption in SQLite now get cleaner separation of duties: this plugin strips out allowance checks and pricing estimates, pushing those to the new datasette-llm-accountant. It leans on datasette-llm for model configs, tightening the Datasette ecosystem’s LLM stack.

Why does this matter? LLMs burn cash: OpenAI’s GPT-4o charged $5 per million input tokens and $15 per million output tokens as of mid-2024. Untracked usage lets costs spiral for apps calling APIs from providers like Anthropic or xAI (Grok). Logging tokens per query in a SQLite table lets you audit spend precisely, query trends, and cap runaway bills. This refactor makes the plugin leaner: usage logging stays here, budgeting lives elsewhere.

Core Changes: Modular and Focused

The big cut: allowances and estimated pricing features vanished. No more built-in thresholds or cost projections. Use datasette-llm-accountant for that—pair them to enforce limits and forecast bills based on provider rates. This avoids bloat in a plugin meant for raw metering.

New dependency: datasette-llm handles model setup. Pull in provider details (API keys, models like “gpt-4o-mini”) from there. Issue #3 on GitHub drove this; it standardizes configs across Datasette’s LLM tools. Install both plugins with datasette install datasette-llm datasette-llm-usage, which runs pip inside Datasette’s own environment. A plain pip install datasette-llm-usage also works if Datasette lives in the active environment.

Result? Your setup looks like this in datasette.json:

    {
      "plugins": {
        "datasette-llm": {
          "openai-api-key": "sk-..."
        },
        "datasette-llm-usage": {
          "log_prompts": true
        }
      }
    }

Enhanced Logging: Prompts, Responses, and Tools

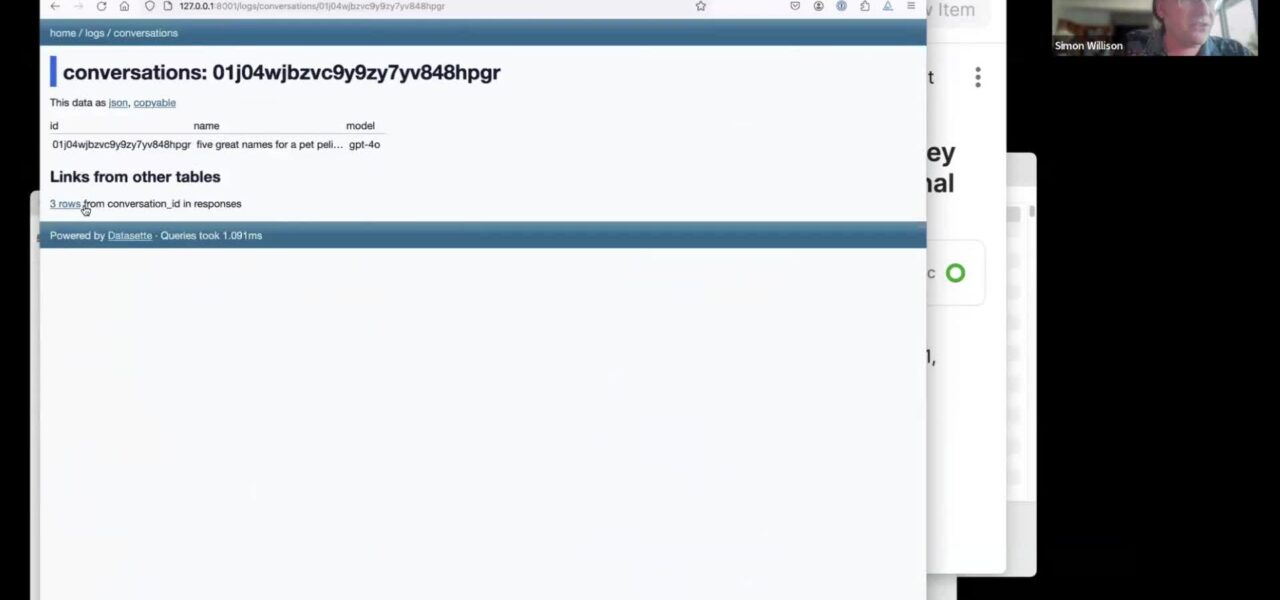

Flip datasette-llm-usage.log_prompts to true, and it dumps full prompts, responses, and tool calls into a new llm_usage_prompt_log table in Datasette’s internal database. Schema includes columns like id, timestamp, model, prompt, response, input_tokens, output_tokens, and tools_used.

Query it directly:

    SELECT
      model,
      SUM(input_tokens) AS total_input,
      SUM(output_tokens) AS total_output,
      COUNT(*) AS calls
    FROM llm_usage_prompt_log
    WHERE timestamp > datetime('now', '-7 days')
    GROUP BY model;

This beats vendor dashboards. Spot token hogs, debug verbose prompts, or analyze tool usage patterns. But watch privacy: full logs store sensitive data. Encrypt your SQLite file or purge regularly. SQLite isn’t Fort Knox.
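
The weekly rollup can also be run from Python with the standard sqlite3 module. What follows is a minimal sketch, not the plugin's own code: the column names come from the schema described above, while the column types, the in-memory database, the timestamp format, and the sample rows are all assumptions made for illustration.

```python
import sqlite3

# Assumed schema: column names are from the article, the types are guesses.
SCHEMA = """
CREATE TABLE llm_usage_prompt_log (
    id INTEGER PRIMARY KEY,
    timestamp TEXT,          -- assumed 'YYYY-MM-DD HH:MM:SS' in UTC
    model TEXT,
    prompt TEXT,
    response TEXT,
    input_tokens INTEGER,
    output_tokens INTEGER,
    tools_used TEXT
)
"""

WEEKLY_SQL = """
SELECT model,
       SUM(input_tokens) AS total_input,
       SUM(output_tokens) AS total_output,
       COUNT(*) AS calls
FROM llm_usage_prompt_log
WHERE timestamp > datetime('now', '-7 days')
GROUP BY model
ORDER BY model
"""


def weekly_usage(conn):
    """Return {model: (total_input, total_output, calls)} for the last 7 days."""
    return {row[0]: tuple(row[1:]) for row in conn.execute(WEEKLY_SQL)}


# Demo against an in-memory database with made-up rows.
conn = sqlite3.connect(":memory:")
conn.execute(SCHEMA)
conn.executemany(
    "INSERT INTO llm_usage_prompt_log "
    "(timestamp, model, prompt, response, input_tokens, output_tokens, tools_used) "
    "VALUES (datetime('now', ?), ?, ?, ?, ?, ?, ?)",
    [
        ("-1 days", "gpt-4o-mini", "hi", "hello", 120, 40, None),
        ("-2 days", "gpt-4o-mini", "sum this", "ok", 900, 210, None),
        ("-30 days", "gpt-4o", "old", "old", 5000, 800, None),  # outside the window
    ],
)
usage = weekly_usage(conn)
print(usage)
```

Against a real deployment you would open the file that holds Datasette's internal database instead of `:memory:`, and check the actual column types before relying on the date comparison.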

Base usage still lands in the llm_usage table, with columns path, model, input_tokens, output_tokens, and created. SQL aggregates grouped on created give daily or weekly burn totals.
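
A daily burn report over llm_usage could look like the sketch below. The column names are the ones listed above; the column types, the date format in created, and the sample rows are assumptions, and the table is rebuilt in memory so the example runs on its own.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
# Assumed types; only the column names are taken from the article.
conn.execute(
    "CREATE TABLE llm_usage ("
    "path TEXT, model TEXT, input_tokens INTEGER, output_tokens INTEGER, created TEXT)"
)
conn.executemany(
    "INSERT INTO llm_usage VALUES (?, ?, ?, ?, ?)",
    [
        ("/search", "gpt-4o-mini", 100, 30, "2025-01-01 09:00:00"),
        ("/search", "gpt-4o-mini", 200, 60, "2025-01-01 17:30:00"),
        ("/chat", "gpt-4o", 1000, 400, "2025-01-02 08:15:00"),
    ],
)

# date(created) truncates the timestamp to a calendar day for grouping.
DAILY_SQL = """
SELECT date(created) AS day,
       SUM(input_tokens) AS total_input,
       SUM(output_tokens) AS total_output
FROM llm_usage
GROUP BY day
ORDER BY day
"""

daily = conn.execute(DAILY_SQL).fetchall()
for day, total_input, total_output in daily:
    print(day, total_input, total_output)
```

Swapping `date(created)` for `strftime('%Y-%W', created)` gives weekly buckets instead.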

UI Overhaul and Permissions

The /-/llm-usage-simple-prompt page got a redesign. Test prompts against configured models and see live token counts and responses. The page is now gated behind the llm-usage-simple-prompt permission; grant it through Datasette’s actor and permission system to lock the page down in production.

Visit /-/llm-usage for usage tables and charts. No frills, pure data: graphs of tokens over time, top models, per-endpoint breakdowns.

Implications for Builders

Datasette, Simon Willison’s SQLite-to-web powerhouse, excels at prototyping. This plugin slots into LLM-augmented apps—think faceted search boosted by GPT, or agentic tools calling APIs. Version 0.2a0 matures the stack: datasette-llm for inference, -usage for metering, -accountant for budgets.

Skeptical take: an alpha release (0.2a0) means bugs lurk. SQLite itself copes with millions of rows; the usual bottleneck is concurrent writes, since only one writer proceeds at a time, and WAL mode eases that by letting readers continue during a write. For high-traffic production, consider exporting to Postgres or BigQuery. Still, for side projects or low-volume SaaS, it’s free, local, and close to zero-setup.
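
Switching a SQLite file to write-ahead logging takes a single pragma, and the setting persists in the file once applied. A minimal sketch follows; the usage.db filename and the enable_wal helper are hypothetical, and a temporary directory stands in for wherever your database actually lives.

```python
import os
import sqlite3
import tempfile


def enable_wal(path):
    """Switch the database at `path` to WAL mode and return the resulting mode."""
    conn = sqlite3.connect(path)
    try:
        # The pragma returns the journal mode now in effect.
        return conn.execute("PRAGMA journal_mode=WAL").fetchone()[0]
    finally:
        conn.close()


# Demo on a throwaway file; WAL needs a file on disk, not ':memory:'.
with tempfile.TemporaryDirectory() as tmp:
    db_path = os.path.join(tmp, "usage.db")  # hypothetical filename
    mode = enable_wal(db_path)

print(mode)
```

WAL improves read concurrency while a write is in progress; it does not allow multiple simultaneous writers.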

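Costs add up fast. Take a chat app with 1,000 daily users, each averaging 2,000 input and 1,000 output tokens in one session per day. At the GPT-4o rates quoted earlier ($5 and $15 per million tokens), that’s about $0.025 per user per day, or $25 a day in total. Unmonitored, it scales to roughly $750 a month unnoticed. This plugin logs it transparently.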
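
The arithmetic is easy to check in a few lines. This sketch uses the GPT-4o rates quoted earlier ($5 per million input tokens, $15 per million output tokens); the function name, the one-session-per-user-per-day assumption, and the 30-day month are illustrative choices, not anything the plugin provides.

```python
def session_cost_usd(input_tokens, output_tokens, input_price_per_m, output_price_per_m):
    """Cost of one session in USD, given prices per million tokens."""
    return (
        input_tokens * input_price_per_m + output_tokens * output_price_per_m
    ) / 1_000_000


# Assumed workload: 1,000 users, one session each per day.
users = 1_000
per_user = session_cost_usd(2_000, 1_000, input_price_per_m=5.0, output_price_per_m=15.0)
per_day = per_user * users
per_month = per_day * 30  # assuming a 30-day month

print(f"per user/day: ${per_user:.3f}")
print(f"per day:      ${per_day:.2f}")
print(f"per month:    ${per_month:.2f}")
```

Plugging in a cheaper model's rates shows how strongly the monthly figure depends on which model each endpoint calls, which is the case for logging usage per model.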

Security angle: Logging prompts risks PII leaks if your DB’s exposed. Datasette’s fine-grained auth helps, but audit access. In crypto/DeFi apps, where LLMs parse trades or risks, precise tracking prevents exploits via overages.
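
One way to limit how long sensitive prompts sit on disk is a scheduled purge. The sketch below assumes the llm_usage_prompt_log table stores timestamp as 'YYYY-MM-DD HH:MM:SS' text; the purge_old_prompts helper, the 30-day retention window, and the trimmed-down demo table are hypothetical.

```python
import sqlite3


def purge_old_prompts(conn, days=30):
    """Delete prompt-log rows older than `days` days; return how many were removed."""
    cur = conn.execute(
        "DELETE FROM llm_usage_prompt_log WHERE timestamp < datetime('now', ?)",
        (f"-{int(days)} days",),
    )
    conn.commit()
    return cur.rowcount


# Demo with a reduced table: only the columns the purge touches.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE llm_usage_prompt_log (id INTEGER PRIMARY KEY, timestamp TEXT, prompt TEXT)"
)
conn.executemany(
    "INSERT INTO llm_usage_prompt_log (timestamp, prompt) VALUES (datetime('now', ?), ?)",
    [("-90 days", "old prompt with PII"), ("-1 days", "recent prompt")],
)

deleted = purge_old_prompts(conn, days=30)
remaining = conn.execute("SELECT COUNT(*) FROM llm_usage_prompt_log").fetchone()[0]
print(deleted, remaining)
```

Deleted rows can linger in the file's free pages until a VACUUM runs, so a purge on its own is not a secure erase.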

Grab it from GitHub or PyPI. Start Datasette with datasette myproject.db; plugins installed into the same environment load automatically, and --plugins-dir=plugins is only needed for one-off plugin files kept in a local directory. Test a prompt, query the logs. You’ll see exactly where tokens vanish and why your API bill spiked.