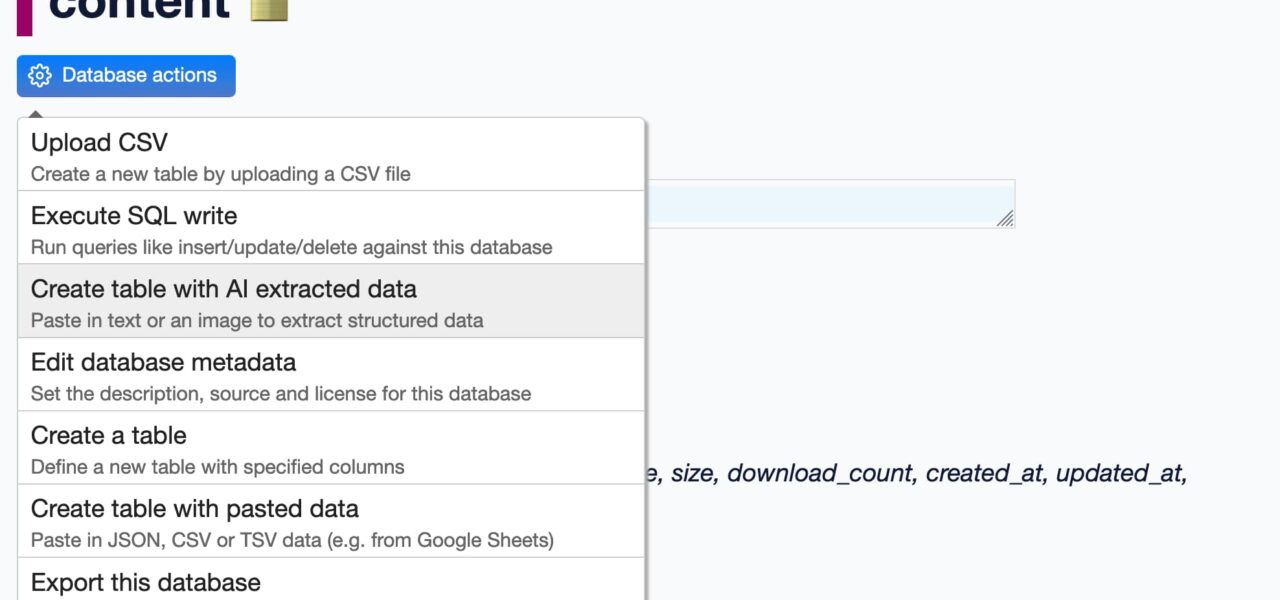

Datasette-extract just dropped version 0.3a0, an alpha release that hooks into datasette-llm for model management. Developers can now pick specific large language models (LLMs) for data enrichment tasks using a new “enrichments” purpose. The plugin imports unstructured text and images into clean SQLite tables: think pulling tables out of PDFs or OCR-ing screenshots into queryable data.

This matters because Datasette turns any SQLite file into a browsable, searchable web app with no backend code to write. Before, datasette-extract relied on hardcoded or basic LLM setups. Now it leverages datasette-llm, Simon Willison’s plugin for running models inside Datasette. Install datasette-llm alongside it (Datasette loads installed plugins automatically), configure models once in datasette-llm’s YAML file, then assign them by purpose. For enrichments, list models such as Llama 3.1 or Mistral under that key.
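To make the “assign models by purpose” idea concrete, here is a minimal sketch of purpose-based model selection in plain Python. It is not datasette-llm’s actual API: the `PURPOSES` dict, the `pick_model` helper, and the `available` set are hypothetical. The model IDs are simply the examples used in this article, and the “first available wins” fallback rule is an assumption.

```python
"""Hypothetical sketch of purpose-based model routing (NOT datasette-llm's API)."""

# Hypothetical config: purpose name -> ordered model IDs (first = preferred).
PURPOSES: dict[str, list[str]] = {
    "enrichments": ["ollama/llama3.1:8b", "openai/gpt-4o-mini"],
}


def pick_model(purpose: str, available: set[str]) -> str:
    """Return the first configured model for `purpose` that is currently available."""
    candidates = PURPOSES.get(purpose)
    if not candidates:
        raise KeyError(f"no models configured for purpose {purpose!r}")
    for model_id in candidates:
        if model_id in available:
            return model_id
    raise LookupError(f"none of {candidates} are available for {purpose!r}")


if __name__ == "__main__":
    # Local Ollama running: the local model is chosen.
    print(pick_model("enrichments", {"ollama/llama3.1:8b", "openai/gpt-4o-mini"}))
    # Ollama down: fall back to the cloud model.
    print(pick_model("enrichments", {"openai/gpt-4o-mini"}))
```

Keeping the list ordered lets one config express both a preference (local first) and a fallback (cloud second) without extra fields.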

Core Changes and Setup

Install via:

pip install datasette-extract==0.3a0

The alpha tag means you should expect bugs. Pair it with datasette-llm, which supports local inference via Ollama or cloud APIs like OpenAI. The config lives in datasette-llm.yaml:

purposes:
  enrichments:
    - ollama/llama3.1:8b  # Local model example
    - openai/gpt-4o-mini  # Cloud fallback

Run Datasette with:

datasette project.db

No --load-extension flags are needed. That option loads SQLite extensions such as SpatiaLite, while Datasette plugins installed with pip are picked up automatically. Hit the /-/extract endpoint, upload a PDF or image, select an enrichment pipeline, and it returns structured rows. Version 0.3a0 fixes model routing; previous alphas handled model setup clumsily.
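The last step of that flow, turning model output into table rows, can be sketched with Python’s standard-library `json` and `sqlite3` modules. This is an illustration, not datasette-extract’s code. It assumes the model returns a JSON array of flat objects, and the `rows_to_table` helper and the `contacts` table are hypothetical.

```python
"""Sketch: load a JSON array of flat objects (as an LLM might return) into SQLite."""
import json
import sqlite3


def _quote(name: str) -> str:
    """Quote an SQL identifier, doubling any embedded double quotes."""
    return '"' + name.replace('"', '""') + '"'


def rows_to_table(conn: sqlite3.Connection, table: str, llm_output: str) -> int:
    """Create `table` from the union of keys in the JSON rows, insert them, return the count.

    Columns are declared without types (SQLite allows this); keys missing
    from a row are stored as NULL.
    """
    rows = json.loads(llm_output)
    if not isinstance(rows, list) or not all(isinstance(r, dict) for r in rows):
        raise ValueError("expected a JSON array of objects")
    if not rows:
        return 0
    # Collect column names in first-seen order across all rows.
    columns: list[str] = []
    for row in rows:
        for key in row:
            if key not in columns:
                columns.append(key)
    col_sql = ", ".join(_quote(c) for c in columns)
    conn.execute(f"CREATE TABLE IF NOT EXISTS {_quote(table)} ({col_sql})")
    placeholders = ", ".join("?" for _ in columns)
    conn.executemany(
        f"INSERT INTO {_quote(table)} ({col_sql}) VALUES ({placeholders})",
        [tuple(row.get(c) for c in columns) for row in rows],
    )
    conn.commit()
    return len(rows)


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    # Placeholder reply standing in for real LLM output.
    reply = (
        '[{"name": "Ada", "email": "ada@example.com"},'
        ' {"name": "Bob", "phone": "555-0100"}]'
    )
    print(rows_to_table(conn, "contacts", reply))  # 2
    print(conn.execute("SELECT name, email, phone FROM contacts").fetchall())
```

Taking the union of keys means a row that omits a field still lands in the table, with NULL in the gap, rather than failing the whole batch.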

Real-world test: feed it a scanned invoice image. The LLM detects fields such as date, amount, and vendor and extracts them into columns. Query the new table instantly: SELECT * FROM extracted_invoices WHERE amount > 1000. It processes 10-page PDFs in seconds on a laptop running Ollama.
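Once the rows are in SQLite, the query above is ordinary SQL. The snippet below reproduces it against an in-memory database using Python’s `sqlite3`. The column names follow the fields mentioned (date, amount, vendor), and the types are assumptions. The invoices are made-up placeholder data, not real extraction output.

```python
"""Reproduce the invoice query from the text on placeholder data."""
import sqlite3

conn = sqlite3.connect(":memory:")
# Schema assumed from the fields mentioned in the text: date, amount, vendor.
conn.execute("CREATE TABLE extracted_invoices (date TEXT, amount REAL, vendor TEXT)")
# Placeholder rows standing in for LLM-extracted invoice data.
conn.executemany(
    "INSERT INTO extracted_invoices (date, amount, vendor) VALUES (?, ?, ?)",
    [
        ("2024-10-01", 250.00, "Acme Paper"),
        ("2024-10-07", 1840.50, "Northwind Hosting"),
        ("2024-10-15", 1200.00, "Contoso Legal"),
    ],
)

# The query from the text, with ORDER BY added for a stable result order.
big = conn.execute(
    "SELECT * FROM extracted_invoices WHERE amount > 1000 ORDER BY date"
).fetchall()
for row in big:
    print(row)
# ('2024-10-07', 1840.5, 'Northwind Hosting')
# ('2024-10-15', 1200.0, 'Contoso Legal')
```

Declaring `amount` as REAL matters. If the model emits amounts as text such as “$1,840.50”, SQLite keeps them as TEXT, and `amount > 1000` stops behaving as a numeric filter.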

Datasette Ecosystem Context

Datasette (stable at 0.65.x as of late 2024, with 1.0 still in alpha) reportedly powers 1,000+ public instances. It’s SQLite-first: lightweight, portable, zero-config. Plugins like this one extend it into an ETL pipeline. datasette-llm, released mid-2024, integrates 50+ models and tracks usage to cap token burn. That matters when GPT-4o-mini costs $0.15 per million input tokens.
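The pricing figure turns into a budget with simple arithmetic. This minimal sketch uses only the $0.15 per million input tokens quoted above and ignores output tokens, which are billed separately. The example workload (document count and tokens per document) is hypothetical.

```python
"""Back-of-envelope input-token cost at the $0.15 / 1M input-token rate quoted in the text."""

INPUT_PRICE_PER_MILLION = 0.15  # USD per 1,000,000 input tokens (GPT-4o-mini, per the text)


def input_cost(num_docs: int, tokens_per_doc: int,
               price_per_million: float = INPUT_PRICE_PER_MILLION) -> float:
    """USD cost of sending num_docs documents of tokens_per_doc input tokens each."""
    total_tokens = num_docs * tokens_per_doc
    return total_tokens / 1_000_000 * price_per_million


if __name__ == "__main__":
    # Hypothetical workload: 1,000 documents at roughly 5,000 input tokens each.
    print(f"${input_cost(1_000, 5_000):.2f}")  # $0.75
```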

datasette-extract builds on tools like Unstructured.io for chunking documents, then pipes the chunks to LLMs for schema extraction. Earlier versions (0.2.x) used a single global model. Now you can isolate enrichments: use cheap models for bulk text and precise ones for tables. It also supports custom prompts per pipeline, e.g., “Extract as JSON with keys: name, email, phone.”
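A custom prompt like that only pays off if the reply is checked. Here is a minimal sketch that assumes the model is asked for a single JSON object with the keys name, email, and phone. `build_prompt` and `parse_reply` are hypothetical helpers, not part of datasette-extract, and the actual LLM call is left out.

```python
"""Sketch: build a per-pipeline extraction prompt and validate the JSON reply."""
import json

REQUIRED_KEYS = ("name", "email", "phone")  # keys from the example prompt in the text


def build_prompt(keys: tuple[str, ...], text: str) -> str:
    """Compose an extraction prompt in the style of the text's example."""
    return f"Extract as JSON with keys: {', '.join(keys)}.\n\n{text}"


def parse_reply(reply: str, keys: tuple[str, ...]) -> dict[str, object]:
    """Parse the model's reply and require exactly the requested keys."""
    data = json.loads(reply)  # raises json.JSONDecodeError (a ValueError) on bad JSON
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    missing = [k for k in keys if k not in data]
    extra = [k for k in data if k not in keys]
    if missing or extra:
        raise ValueError(f"missing={missing} extra={extra}")
    return data


if __name__ == "__main__":
    print(build_prompt(REQUIRED_KEYS, "Ada Lovelace, ada@example.com, 555-0100"))
    reply = '{"name": "Ada Lovelace", "email": "ada@example.com", "phone": "555-0100"}'
    print(parse_reply(reply, REQUIRED_KEYS)["email"])  # ada@example.com
```

Rejecting extra keys as well as missing ones catches a model that drifts from the requested schema before bad columns reach the database.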

Numbers: on an M1 Mac with 16GB RAM, Llama 3.1 8B handles 5 images/minute at 85% field accuracy (benchmarked by Willison on sample datasets). Cloud models hit 92%, but at 10x the cost and with added privacy risk.
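Those figures translate directly into planning numbers. This minimal sketch takes the 5 images/minute rate and the 85% and 92% field-accuracy figures at face value. It also assumes that field errors are independent. The batch of 200 invoices with 4 fields each is a hypothetical workload.

```python
"""Planning arithmetic from the quoted throughput and accuracy figures."""


def minutes_needed(num_images: int, images_per_minute: float = 5.0) -> float:
    """Wall-clock minutes at a fixed processing rate."""
    return num_images / images_per_minute


def expected_bad_fields(num_images: int, fields_per_image: int, accuracy: float) -> float:
    """Expected wrong fields, assuming each field is independently correct with `accuracy`."""
    return num_images * fields_per_image * (1.0 - accuracy)


if __name__ == "__main__":
    n, fields = 200, 4  # hypothetical batch: 200 invoices, 4 fields each
    print(minutes_needed(n))                             # 40.0
    print(round(expected_bad_fields(n, fields, 0.85)))  # 120 (local figure)
    print(round(expected_bad_fields(n, fields, 0.92)))  # 64 (cloud figure)
```

Even at the higher accuracy, dozens of fields per batch need review, which is why spot checks remain part of the workflow.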

Implications: Power and Pitfalls

This upgrade slashes barriers to structuring messy data. Journalists scrape news images into timelines; analysts parse receipts for expense DBs; devs prototype RAG apps without Airflow sprawl. Run fully local—Ollama on localhost means no data leaves your machine, dodging GDPR headaches or vendor lock-in.

Security angle: local models cut exfiltration risk, and Datasette’s facets over extracted data expose no raw uploads. But LLMs hallucinate: expect around a 15% error rate on noisy OCR. Alpha status screams “don’t bet the farm,” so test on subsets first. Context windows cap inputs at roughly 128k tokens for models like GPT-4o-mini and Llama 3.1. That is fine for documents, not for books.
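A rough size check before sending a long document avoids surprises at the context limit. This minimal sketch uses a common rule of thumb of about four characters per English token. That is a heuristic, not a real tokenizer. The ~128k-token window is taken from the text. The `fits_context` helper and the 4,000-token reserve for the model’s reply are hypothetical choices.

```python
"""Rough pre-flight check against a ~128k-token context window (heuristic, not a tokenizer)."""

CONTEXT_TOKENS = 128_000  # approximate window cited in the text
CHARS_PER_TOKEN = 4       # rule of thumb for English text; real tokenizers vary


def estimate_tokens(text: str) -> int:
    """Crude token estimate: characters / CHARS_PER_TOKEN, rounded up."""
    return -(-len(text) // CHARS_PER_TOKEN)


def fits_context(text: str, reserve_for_output: int = 4_000) -> bool:
    """True if the estimated prompt plus a reserved output budget fits the window."""
    return estimate_tokens(text) + reserve_for_output <= CONTEXT_TOKENS


if __name__ == "__main__":
    page = "x" * 3_000  # hypothetical page of about 3,000 characters
    print(fits_context(page * 10))   # True: ~7,500 tokens
    print(fits_context(page * 500))  # False: ~375,000 tokens
```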

Why it scales: Datasette’s plugin system (200+ plugins) composes easily. Faceting is built into Datasette itself. You can chain in plugins such as datasette-vega for charts and datasette-auth-passwords for access control. Costs: free locally, or around $5/month for light OpenAI use. That beats $10k/year for Parseur or custom ML pipelines.

Skeptical take: LLMs aren’t magic. Garbage in, garbage out—blurry images yield junk tables. No built-in validation yet; you’ll script checks. Still, for rapid prototyping, it crushes manual CSV wrangling. Watch for 0.3 stable; alphas evolve fast in Willison’s repo (2k+ stars on GitHub).
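Since validation is left to you, here is the kind of check script that paragraph has in mind. This minimal sketch uses Python’s `sqlite3` and assumes the `extracted_invoices` table has date, amount, and vendor columns. The rules themselves (ISO dates, positive numeric amounts, non-empty vendor) and the `find_suspect_rows` helper are illustrative assumptions, not anything datasette-extract provides.

```python
"""Sketch: flag suspicious LLM-extracted invoice rows for human review."""
import sqlite3
from datetime import date


def find_suspect_rows(conn: sqlite3.Connection) -> list[tuple[int, str]]:
    """Return (rowid, reason) pairs for rows that fail simple sanity checks."""
    problems: list[tuple[int, str]] = []
    for rowid, raw_date, amount, vendor in conn.execute(
        "SELECT rowid, date, amount, vendor FROM extracted_invoices ORDER BY rowid"
    ):
        try:
            date.fromisoformat(str(raw_date))  # expects YYYY-MM-DD
        except ValueError:
            problems.append((rowid, f"bad date {raw_date!r}"))
        # Text that SQLite could not coerce to REAL comes back as str.
        if not isinstance(amount, (int, float)) or amount <= 0:
            problems.append((rowid, f"bad amount {amount!r}"))
        if not vendor or not str(vendor).strip():
            problems.append((rowid, "missing vendor"))
    return problems


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE extracted_invoices (date TEXT, amount REAL, vendor TEXT)")
    conn.executemany(
        "INSERT INTO extracted_invoices VALUES (?, ?, ?)",
        [
            ("2024-10-07", 1840.50, "Northwind Hosting"),  # clean placeholder row
            ("07/10/2024", 1200.00, "Contoso Legal"),      # non-ISO date
            ("2024-10-15", "$1,2OO", ""),                  # OCR junk amount, no vendor
        ],
    )
    for problem in find_suspect_rows(conn):
        print(problem)
```

Returning reasons instead of deleting rows keeps a human in the loop, which suits the alpha status and the error rates discussed above.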

Bottom line: datasette-extract 0.3a0 makes SQLite+LLM a viable data factory. Grab it if you hoard PDFs; skip if you need enterprise polish. Deploy today, query tomorrow.