Fast-jwt, a high-performance JWT library for Node.js, has a cache collision vulnerability that developers trigger themselves. If you enable caching and supply a custom cacheKeyBuilder function that generates the same key for different tokens, the library mixes up claims: verifying one valid token can return another token’s identity and permissions. The result is user impersonation, privilege escalation, or cross-tenant data leaks.

This affects only specific setups: caching must be on (cache: true) and you must use a flawed custom cacheKeyBuilder. Default caching uses a secure hash of the full token, so stock configurations stay safe. No caching? You’re immune. The advisory flags this as user error, not a core library flaw, but it exposes sloppy optimization attempts.

How the Bug Works

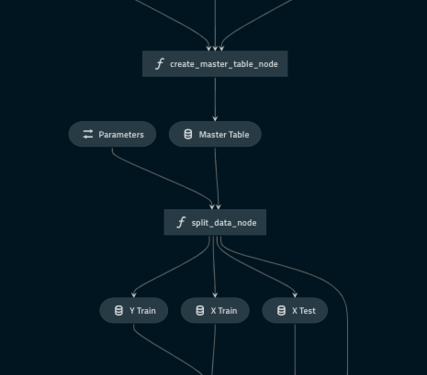

Fast-jwt caches verification results to cut CPU overhead in high-throughput apps, such as APIs handling millions of requests per hour. The cacheKeyBuilder derives a cache key from the token. The default builder hashes the entire token string, so every distinct token gets its own key. Custom builders often extract claims like aud (audience) or iss (issuer) for “efficiency,” but those keys collide whenever tokens share the same values.
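To make the difference concrete, here is a minimal sketch using only Node’s built-in crypto module. The helpers makeToken and decodePayload, the demo secret, and the sample claims are hypothetical stand-ins, not fast-jwt APIs. fullTokenKey approximates the idea of hashing the whole token (fast-jwt’s own default may use a different hash algorithm), while audienceKey mimics a claim-based builder.

```javascript
const { createHash, createHmac } = require('node:crypto')

// Hypothetical helper: sign a payload as an HS256-style JWT string.
function makeToken(payload, secret = 'demo-secret') {
  const enc = (obj) => Buffer.from(JSON.stringify(obj)).toString('base64url')
  const body = `${enc({ alg: 'HS256', typ: 'JWT' })}.${enc(payload)}`
  const sig = createHmac('sha256', secret).update(body).digest().toString('base64url')
  return `${body}.${sig}`
}

// Hypothetical helper: read the payload WITHOUT checking the signature,
// which is what a claim-based cacheKeyBuilder typically does.
function decodePayload(token) {
  return JSON.parse(Buffer.from(token.split('.')[1], 'base64url').toString('utf8'))
}

// Full-token hash: any change to header, payload, or signature changes the key.
const fullTokenKey = (token) => createHash('sha256').update(token).digest('hex')

// Claim-based key: only the audience feeds the key.
const audienceKey = (token) => `aud=${decodePayload(token).aud}`

const alice = makeToken({ sub: 'alice', aud: 'my-app', role: 'admin' })
const bob = makeToken({ sub: 'bob', aud: 'my-app', role: 'viewer' })

console.log(fullTokenKey(alice) === fullTokenKey(bob)) // false
console.log(audienceKey(alice) === audienceKey(bob)) // true
```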

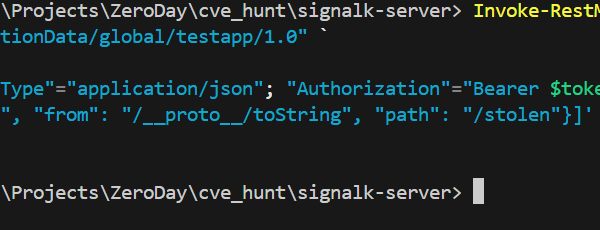

Vulnerable examples abound. This one keys solely on audience:

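```javascript
// Collision-prone: same audience = same cache key
// (parseToken = any payload decoder, e.g. the function returned by fast-jwt's createDecoder())
cacheKeyBuilder: (token) => {
  const { aud } = parseToken(token)
  return `aud=${aud}`
}
```

Tokens for different users in the same app (same aud) share a single cache entry. Result: once User A’s token is cached, User B’s token comes back with User A’s admin claims.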
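The sketch below models why that happens. It is a toy caching verifier, not fast-jwt’s implementation, and it assumes (as caching verification results implies) that a cache hit returns the stored claims without re-verifying the incoming token. makeToken, verifyUncached, and createCachingVerifier are hypothetical names, and decodePayload stands in for parseToken.

```javascript
const { createHmac, timingSafeEqual } = require('node:crypto')

const SECRET = 'demo-secret' // hypothetical shared secret for the demo
const enc = (obj) => Buffer.from(JSON.stringify(obj)).toString('base64url')

// Hypothetical helper: sign a payload as an HS256-style JWT.
function makeToken(payload) {
  const body = `${enc({ alg: 'HS256', typ: 'JWT' })}.${enc(payload)}`
  const sig = createHmac('sha256', SECRET).update(body).digest().toString('base64url')
  return `${body}.${sig}`
}

// Decode the payload without checking the signature (stand-in for parseToken).
function decodePayload(token) {
  return JSON.parse(Buffer.from(token.split('.')[1], 'base64url').toString('utf8'))
}

// Check the HMAC signature, then return the payload.
function verifyUncached(token) {
  const [header, payload, sig] = token.split('.')
  const expected = createHmac('sha256', SECRET).update(`${header}.${payload}`).digest()
  const actual = Buffer.from(sig, 'base64url')
  if (actual.length !== expected.length || !timingSafeEqual(actual, expected)) {
    throw new Error('invalid signature')
  }
  return decodePayload(token)
}

// Toy caching verifier: look up the key first; on a hit, return the stored
// claims without re-verifying the incoming token.
function createCachingVerifier(cacheKeyBuilder) {
  const cache = new Map()
  return (token) => {
    const key = cacheKeyBuilder(token)
    if (cache.has(key)) return cache.get(key)
    const claims = verifyUncached(token)
    cache.set(key, claims)
    return claims
  }
}

// The collision-prone audience builder from above.
const verify = createCachingVerifier((token) => `aud=${decodePayload(token).aud}`)

const alice = makeToken({ sub: 'alice', aud: 'my-app', role: 'admin' })
const bob = makeToken({ sub: 'bob', aud: 'my-app', role: 'viewer' })

console.log(verify(alice).sub) // alice
console.log(verify(bob).sub) // alice (Bob receives Alice's cached admin claims)
```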

Another groups by user type:

```javascript
// Collision-prone: grouping by user type
cacheKeyBuilder: (token) => {
  const { aud } = parseToken(token)
  return aud.includes('admin') ? 'admin-users' : 'regular-users'
}
```

All admins share one key, and so do all regular users. The first admin token verified poisons the cache for every other admin.
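A quick way to see the damage is to count how many distinct keys a builder produces for a batch of distinct tokens. This sketch feeds four hypothetical users through the grouping builder. fakeToken builds unsigned, JWT-shaped strings purely for key-building demos, and decodePayload again stands in for parseToken; neither is a fast-jwt API.

```javascript
// Hypothetical helper: unsigned, JWT-shaped strings for key-building demos only.
const enc = (obj) => Buffer.from(JSON.stringify(obj)).toString('base64url')
const fakeToken = (payload) =>
  `${enc({ alg: 'HS256', typ: 'JWT' })}.${enc(payload)}.demo-signature`

// Stand-in for parseToken: decode the payload without verification.
const decodePayload = (token) =>
  JSON.parse(Buffer.from(token.split('.')[1], 'base64url').toString('utf8'))

// The "grouping by user type" builder from above.
const groupKey = (token) => {
  const { aud } = decodePayload(token)
  return aud.includes('admin') ? 'admin-users' : 'regular-users'
}

const tokens = [
  fakeToken({ sub: 'alice', aud: 'admin-console' }),
  fakeToken({ sub: 'carol', aud: 'admin-console' }),
  fakeToken({ sub: 'bob', aud: 'web-app' }),
  fakeToken({ sub: 'dave', aud: 'web-app' }),
]

// Four different users collapse onto two cache entries.
const distinctKeys = new Set(tokens.map(groupKey))
console.log(`${tokens.length} tokens -> ${distinctKeys.size} cache keys`) // 4 tokens -> 2 cache keys
```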

Safe alternatives include the default:

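```javascript
// Default hash-based (recommended); JWT_SECRET is a placeholder for your signing key
const { createVerifier } = require('fast-jwt')
const verify = createVerifier({ key: process.env.JWT_SECRET, cache: true })
```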

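Or, if you keep a custom builder, add unique identifiers like subject (sub). This is still weaker than the default: any two tokens that share sub, aud, and iat map to the same key.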

```javascript
// Include unique user identifier
cacheKeyBuilder: (token) => {
  const { sub, aud, iat } = parseToken(token)
  return `${sub}-${aud}-${iat}`
}
```
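Claim-based keys like this one still collide whenever tokens match on every included claim. The sketch below uses the same hypothetical fakeToken and decodePayload helpers (not fast-jwt APIs) to show two such cases: tokens minted for the same user in the same second with different scopes, and delimiter ambiguity, where a hyphen inside sub or aud lets different claim values join into the same string. A full-token hash tells both pairs apart.

```javascript
const { createHash } = require('node:crypto')

// Hypothetical helper: unsigned, JWT-shaped strings for key-building demos only.
const enc = (obj) => Buffer.from(JSON.stringify(obj)).toString('base64url')
const fakeToken = (payload) =>
  `${enc({ alg: 'HS256', typ: 'JWT' })}.${enc(payload)}.demo-signature`
const decodePayload = (token) =>
  JSON.parse(Buffer.from(token.split('.')[1], 'base64url').toString('utf8'))

// The sub-aud-iat builder from above.
const claimKey = (token) => {
  const { sub, aud, iat } = decodePayload(token)
  return `${sub}-${aud}-${iat}`
}
const fullTokenKey = (token) => createHash('sha256').update(token).digest('hex')

// Case 1: same user, same audience, same second, different scopes.
const readOnly = fakeToken({ sub: 'alice', aud: 'my-app', iat: 1700000000, scope: 'read' })
const readWrite = fakeToken({ sub: 'alice', aud: 'my-app', iat: 1700000000, scope: 'read write' })
console.log(claimKey(readOnly) === claimKey(readWrite)) // true
console.log(fullTokenKey(readOnly) === fullTokenKey(readWrite)) // false

// Case 2: the '-' delimiter is ambiguous; both keys become 'a-b-c-1'.
const ambiguousA = fakeToken({ sub: 'a-b', aud: 'c', iat: 1 })
const ambiguousB = fakeToken({ sub: 'a', aud: 'b-c', iat: 1 })
console.log(claimKey(ambiguousA) === claimKey(ambiguousB)) // true
```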

Real-World Implications

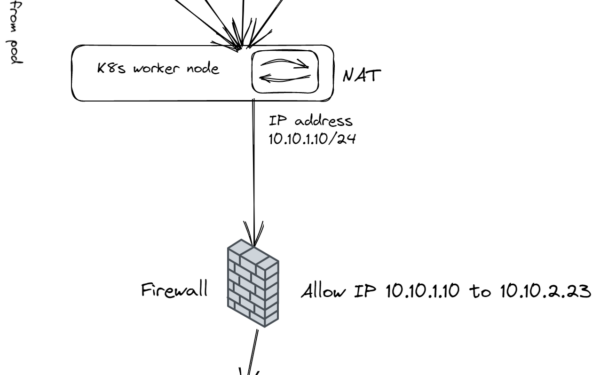

Why does this matter? JWTs secure a large share of modern APIs: OAuth flows, microservices, SaaS platforms. In multi-tenant platforms, say a Stripe or Auth0 clone, tokens carry tenant IDs. A collision lets a regular user reach admin dashboards or a rival tenant’s data. Privilege escalation turns a viewer into an editor, who might then dump databases or issue payouts.

Consider scale: Fast-jwt targets 10x faster verification than jsonwebtoken. Apps caching 1 million verifications daily save seconds of CPU time, but one bad key flips identities silently. No crashes, no logs—just wrong authz. Attackers don’t exploit this directly; developers invite it by over-optimizing without testing collisions.

Similar issues hit other libraries. Ruby’s jwt gem had cache bugs in 2022; Python’s PyJWT warns against custom caches. JWT parsing tempts shortcuts: claims look harmless, but sub identifies the user and jti (JWT ID) identifies the individual token. Skipping them risks this exact failure.

Node.js ecosystems amplify the reach. Fast-jwt powers Express and Fastify servers in production at scale. If your app serves 100k+ users, audit now. CVSS? Unscored, but high-impact for affected configs: confidentiality, integrity, and availability are all at risk.

Audit and Fix Your Code

Check in seconds:

- Search your codebase for cache: true (or a numeric cache size) in verifier options.
- Find any cacheKeyBuilder functions.
- Test collisions: generate two tokens with the same aud/iss but different sub. Verify both and check the returned claims.
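The collision test from the checklist can also run at the key level, before any verifier is involved. findCollisions below is a hypothetical audit helper, not part of fast-jwt: it mints tokens that share aud and iss but differ in sub, passes each through a candidate builder, and reports every pair that lands on the same key. Point it at your real builder (swapping in your own token factory) and expect an empty result.

```javascript
const { createHash } = require('node:crypto')

// Hypothetical helper: unsigned, JWT-shaped strings for key-building demos only.
const enc = (obj) => Buffer.from(JSON.stringify(obj)).toString('base64url')
const fakeToken = (payload) =>
  `${enc({ alg: 'HS256', typ: 'JWT' })}.${enc(payload)}.demo-signature`
const decodePayload = (token) =>
  JSON.parse(Buffer.from(token.split('.')[1], 'base64url').toString('utf8'))

// Hypothetical audit helper: same aud/iss/iat, different sub; any shared key is a collision.
function findCollisions(cacheKeyBuilder, subjects = ['alice', 'bob', 'carol']) {
  const seen = new Map() // cache key -> sub that first produced it
  const collisions = []
  for (const sub of subjects) {
    const token = fakeToken({ sub, aud: 'my-app', iss: 'https://issuer.example', iat: 1700000000 })
    const key = cacheKeyBuilder(token)
    if (seen.has(key)) collisions.push([seen.get(key), sub])
    else seen.set(key, sub)
  }
  return collisions
}

// Two candidate builders to audit.
const audienceOnly = (token) => `aud=${decodePayload(token).aud}`
const fullTokenHash = (token) => createHash('sha256').update(token).digest('hex')

console.log(findCollisions(audienceOnly).length) // 2
console.log(findCollisions(fullTokenHash).length) // 0
```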

No custom builder? Safe. To mitigate immediately:

- Uniquify keys with sub, jti, or a full-token hash.
- Drop the custom builder and use the default.
- Set cache: false; the performance hit is minor unless you’re at extreme scale.
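If you need a custom builder (for example, to keep readable prefixes when inspecting the cache), one option is to let a hash of the full token carry the uniqueness. This is a sketch under that assumption: hashedCacheKey and the demo helpers are hypothetical names, SHA-256 is our choice rather than necessarily what fast-jwt uses internally, and the createVerifier call in the comment is illustrative only.

```javascript
const { createHash } = require('node:crypto')

// Hypothetical helper: unsigned, JWT-shaped strings for key-building demos only.
const enc = (obj) => Buffer.from(JSON.stringify(obj)).toString('base64url')
const fakeToken = (payload) =>
  `${enc({ alg: 'HS256', typ: 'JWT' })}.${enc(payload)}.demo-signature`
const decodePayload = (token) =>
  JSON.parse(Buffer.from(token.split('.')[1], 'base64url').toString('utf8'))

// Readable sub|aud prefix for debugging; uniqueness comes from the SHA-256
// digest of the entire token string (header, payload, and signature).
function hashedCacheKey(token) {
  const { sub, aud } = decodePayload(token)
  const digest = createHash('sha256').update(token).digest('hex')
  return `${sub}|${aud}|${digest}`
}

// Illustrative wiring in fast-jwt:
// createVerifier({ key, cache: true, cacheKeyBuilder: hashedCacheKey })

const a = fakeToken({ sub: 'alice', aud: 'my-app', iat: 1700000000, scope: 'read' })
const b = fakeToken({ sub: 'alice', aud: 'my-app', iat: 1700000000, scope: 'read write' })

console.log(hashedCacheKey(a) === hashedCacheKey(b)) // false
```

Because the digest covers every byte of the token, two tokens that differ anywhere get different keys; the prefix only helps humans reading the cache. A production version should also handle tokens that fail to decode, since decodePayload throws on malformed input.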

Fast-jwt plans a next-version fix, likely validating or warning on weak builders. Until then, treat custom caching as high-risk. Performance gains don’t justify impersonation bugs.

Bottom line: JWT caching speeds things up, but uniqueness is non-negotiable. Test edge cases—different users, same app. If you touch auth code, this is your wake-up call.