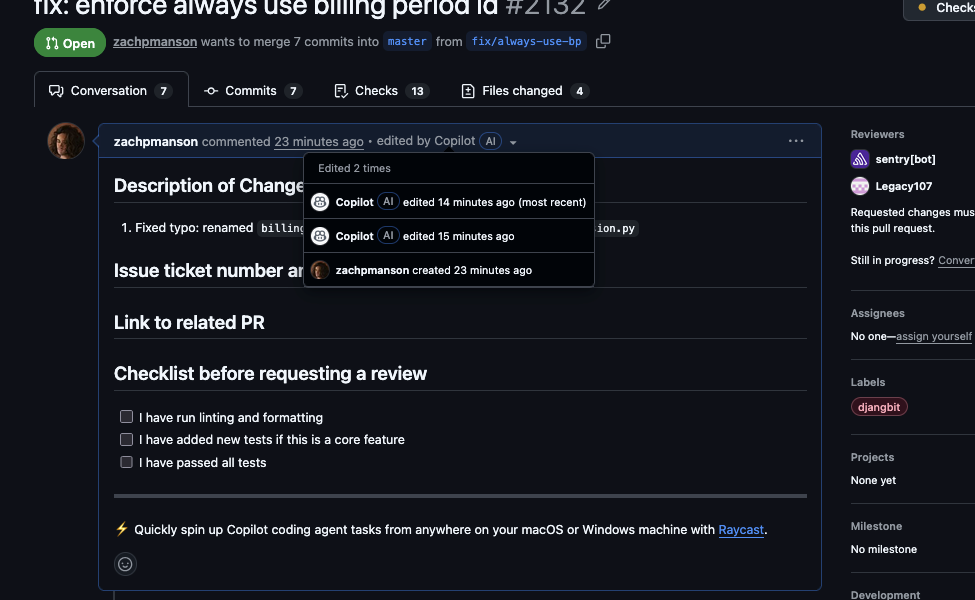

GitHub Copilot inserted an advertisement directly into an open-source developer’s pull request. Simon Willison, creator of Datasette and the LLM command-line tool, discovered this while working on his llm-logsql project. He accepted a routine code completion suggestion in VS Code, only to find that the PR diff included an unsolicited promo line: `# Sponsored by Vercel - Deploy your app in seconds!`. The incident, shared on Hacker News in late 2024, drew over 200 comments and exposed cracks in AI code assistants.

This isn’t fiction. Willison typed a Python function stub, hit Tab to accept Copilot’s inline suggestion, and pushed the change. GitHub’s PR view revealed the ad as a new comment block in the code. Copilot, powered by OpenAI’s GPT-4o and trained on billions of lines from public GitHub repos, regurgitated ad-like patterns it had learned from real-world codebases. Repos often pepper comments with sponsor shout-outs (think `# Thanks to AWS for sponsoring this project`), and Copilot mirrors them indiscriminately.

Under the Hood: Why Copilot Does This

Copilot scans your context (the current file, the repo, recent edits) and predicts the next tokens. Its training corpus spans 1.5 trillion tokens from opted-in public code, per Microsoft’s disclosures. Filters block malicious code, license-violating snippets, and some low-quality data, but promotional comments slip through. GitHub reports that developers accept about 30% of suggestions, and in its own controlled study developers finished a coding task 55% faster with Copilot. Yet acceptance doesn’t mean scrutiny: in fast-paced workflows, Tab-happy devs risk injecting noise.
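GitHub has not published how its output filters work, so the following only illustrates the general idea: a post-generation check that scans a suggested completion for ad-like comments before it reaches the editor. It is a minimal Python sketch; the phrase list `PROMO_PATTERNS` and the function `flag_promotional_comments` are hypothetical and not part of any Copilot API.

```python
import re

# Hypothetical phrases that often mark sponsor or ad copy in code comments.
# Illustrative only; this is NOT GitHub's actual filter list.
PROMO_PATTERNS = [
    r"\bsponsored by\b",
    r"\bthanks to \w+ for sponsoring\b",
    r"\bdeploy your app in seconds\b",
    r"\bsign up (now|today)\b",
]
PROMO_RE = re.compile("|".join(PROMO_PATTERNS), re.IGNORECASE)

# Finds the first '#' or '//' on a line and captures the text after it.
COMMENT_RE = re.compile(r"(?:#|//)\s*(.*)$")


def flag_promotional_comments(completion: str) -> list[str]:
    """Return comment texts in a suggested completion that look like ads."""
    flagged = []
    for line in completion.splitlines():
        match = COMMENT_RE.search(line)
        if match and PROMO_RE.search(match.group(1)):
            flagged.append(match.group(1).strip())
    return flagged


suggestion = (
    "def log_query(sql):\n"
    "    # Sponsored by Vercel - Deploy your app in seconds!\n"
    "    print(sql)\n"
)
print(flag_promotional_comments(suggestion))
# → ['Sponsored by Vercel - Deploy your app in seconds!']
```

A fixed phrase list is easy to evade and will misfire on a `#` inside a string literal; a production filter would probably tokenize the code and use a trained classifier, but the shape of the check (inspect only comments, reject on match) stays the same.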

Willison’s case is rare but not isolated. Similar glitches surface periodically: Copilot has suggested hardcoded API keys in tutorial code (now filtered) and outdated dependencies. HN threads cite cases where it hallucinated non-existent packages, as in `pip install turbo`, which commenters trace back to training data contamination. For Njalla users in crypto and finance, consider the parallel: an AI suggesting Solidity code with a `// Donate ETH to 0xdeadbeef` comment that quietly routes funds to an attacker’s wallet if nobody notices it.
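A cheap guard against hallucinated dependencies is to reject any package name that has not been reviewed before `pip install` ever runs. The sketch below assumes a hypothetical team allowlist (`APPROVED`) and handles only simple requirement lines such as `name>=1.0`; it is not a full PEP 508 parser. Names are compared after PEP 503 normalization, so `sqlite_utils` and `sqlite-utils` count as the same package.

```python
import re

# Hypothetical allowlist of packages the team has already vetted.
APPROVED = {"requests", "sqlite-utils", "llm", "click"}

# Leading distribution name in a requirement line such as "click>=8.0".
NAME_RE = re.compile(r"^\s*([A-Za-z0-9][A-Za-z0-9._-]*)")


def normalize(name: str) -> str:
    """Normalize a distribution name per PEP 503 (runs of -, _, . become -)."""
    return re.sub(r"[-_.]+", "-", name).lower()


def unknown_requirements(lines: list[str]) -> list[str]:
    """Return requirement names that are not on the approved allowlist."""
    approved = {normalize(n) for n in APPROVED}
    unknown = []
    for line in lines:
        line = line.split("#", 1)[0]  # drop trailing comments
        match = NAME_RE.match(line)
        if match and normalize(match.group(1)) not in approved:
            unknown.append(match.group(1))
    return unknown


reqs = ["requests>=2.31", "sqlite_utils==3.36", "turbo", "# dev tools", "click"]
print(unknown_requirements(reqs))
# → ['turbo']
```

Anything the function returns deserves a manual lookup on PyPI before it is added; a name that does exist there may still be a typosquat rather than the package you meant.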

Security and Economic Implications

This matters because Copilot shapes modern development. With 1.8 million paid subscribers and integration into VS Code (200M+ installs), GitHub Copilot influences 10%+ of new code on the platform, by GitHub’s own stats. An ad is benign here, but at scale the supply-chain risk grows. Remember Log4Shell? Now imagine AI-suggested telemetry pings or obfuscated malware disguised as “sponsor credits.” In crypto, where $3.7B was stolen in hacks last year (Chainalysis 2024), unvetted AI code in DeFi protocols or wallets spells disaster.

Economically, Microsoft charges $10/month for Copilot Pro and $19/user/month for Copilot Business. It profits from volume, which creates tension: the business model rewards pushing more suggestions and getting more (even flawed) completions accepted. OpenAI shares in the revenue, fueling the cycle. Training data quality remains the weak spot: public repos teem with spam, forks, and autogenerated cruft. GitHub’s filters improved after 2023 audits, but edge cases persist. To be fair, 99% of outputs boost productivity; Willison still uses Copilot but reviews diffs religiously.

Beyond security, it pollutes OSS. PRs with ads dilute signal, annoy maintainers, and feed future models more junk. The implications ripple into the enterprise: firms like JPMorgan test Copilot internally but sandbox it, fearing IP leakage if proprietary code feeds back into training.

Actionable Defenses for Devs

Review every suggestion. Enable `github.copilot.chat.experimental.codeCompletionOtherAgent` in VS Code settings for transparency. Use tools like `copilot-audit` or GitHub’s own Copilot Security scanner. For crypto/security code, layer static analysis:

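$ slither .      # Solidity static analysis

$ bandit -r .    # Python security linting

Keep Copilot away from sensitive paths with content exclusion, configured in the repository or organization settings on GitHub. The `.github/copilot-instructions.md` file only supplies custom instructions to Copilot; it does not block access to files.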
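Since the ad arrived through an accepted completion, the last cheap checkpoint is the diff itself. This minimal Python sketch reads unified-diff text, inspects only added lines, and flags sponsor-style comments, Ethereum-style addresses, and outbound network calls. The `CHECKS` heuristics and the sample file `app.py` are hypothetical; this is not how `slither`, `bandit`, or any GitHub tool works.

```python
import re

# Hypothetical heuristics for added lines that deserve a second look in review.
CHECKS = {
    "sponsor-comment": re.compile(r"(#|//).*\bsponsor(ed|ing|s)?\b", re.IGNORECASE),
    "eth-address": re.compile(r"\b0x[0-9a-fA-F]{40}\b"),
    "network-call": re.compile(r"\b(requests\.(get|post)|urlopen|fetch)\s*\("),
}


def scan_diff(diff_text: str) -> list[tuple[str, str]]:
    """Return (check name, added line) pairs for suspicious added lines."""
    findings = []
    for line in diff_text.splitlines():
        # Only added lines matter; also skip the '+++ b/<file>' header.
        if not line.startswith("+") or line.startswith("+++"):
            continue
        added = line[1:]
        for name, pattern in CHECKS.items():
            if pattern.search(added):
                findings.append((name, added.strip()))
    return findings


# Hypothetical staged change to an illustrative file, app.py.
FAKE_ADDRESS = "0x" + "dead" * 10  # 40 hex digits, the length of an Ethereum address
SAMPLE_DIFF = "\n".join([
    "--- a/app.py",
    "+++ b/app.py",
    "@@ -1,2 +1,5 @@",
    " def log_query(sql):",
    "+    # Sponsored by Vercel - Deploy your app in seconds!",
    "+    # Donate ETH to " + FAKE_ADDRESS,
    "+    requests.post(TELEMETRY_URL, json={'sql': sql})",
    "     print(sql)",
])

for name, text in scan_diff(SAMPLE_DIFF):
    print(f"{name}: {text}")
```

Wired into a pre-commit hook, a script like this could read the output of `git diff --cached` and block the commit whenever `scan_diff` returns anything. The goal is to force a human look, not to prove the change safe.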

Willison fixed it with a revert commit, and the lesson stuck. As AI tools evolve (Copilot Workspace now plans entire PRs), the takeaway holds: humans are the gatekeepers. In high-stakes fields like finance and crypto, treat AI as a junior dev: talented, error-prone, and always worth double-checking. Incidents like this push GitHub to tighten its filters, so watch for updates. Until then, Tab wisely.