Anthropic’s Claude Code tool suffered a source code leak last week after developers spotted a source map file, exposing the unminified source, tucked into its NPM package. The claude-code package, meant for integrating Claude’s AI coding features into editors like VS Code, included a .map file that spilled thousands of lines of internal JavaScript. Hacker News threads racked up over 1,300 comments combined, picking apart quirky features like “fake tools,” regexes hunting user frustration, and an “undercover mode.” This isn’t just embarrassing; it’s a window into how AI safety layers actually work under the hood.

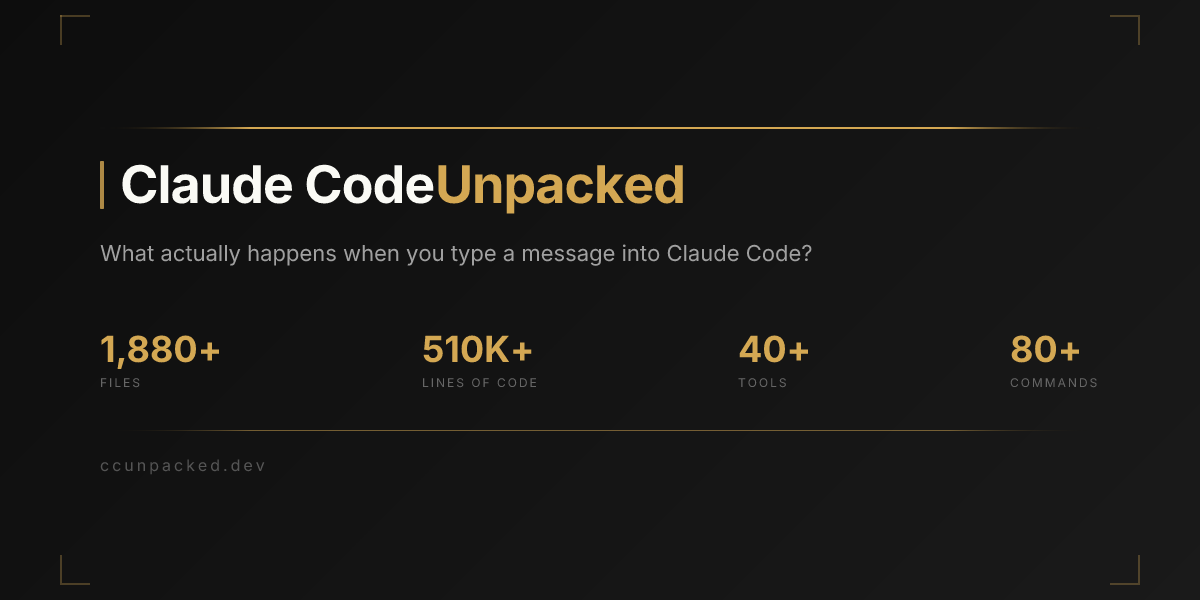

Source maps exist to help developers debug minified code by mapping it back to the original source. They’re a standard build output, but they generally shouldn’t ship in a published production package, because a map can embed the original files verbatim. Anthropic’s team slipped up, leaving claude-code@1.2.3 exposed. Users grabbed the claude-code.umd.js.map file, from which the full codebase could be unpacked. The Hacker News posts carry March 2026 dates, likely a glitch or forward-post; item 47584540 hit 956 comments, while 47586778 added 406 more. Sites like ccleaks.com now host mirrors.
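To make the mechanism concrete, here is a minimal sketch of how original files can be pulled out of a source map. It relies only on the `sources` and `sourcesContent` fields of the Source Map v3 format; the function name `extractEmbeddedSources` and the sample map are invented for illustration and are not taken from the leaked package.

```javascript
// Sketch: recover original files from a parsed Source Map v3 object.
// `sources` lists original file names; `sourcesContent` optionally holds their text.
function extractEmbeddedSources(sourceMap) {
  const sources = sourceMap.sources ?? [];
  const contents = sourceMap.sourcesContent ?? [];
  const recovered = new Map();
  sources.forEach((name, i) => {
    const text = contents[i];
    // An entry may be null or missing when a file's text was not embedded.
    if (typeof text === "string") {
      recovered.set(name, text);
    }
  });
  return recovered;
}

// Hypothetical map, standing in for JSON.parse() of a shipped .map file.
const sampleMap = {
  version: 3,
  file: "bundle.min.js",
  sources: ["src/index.js", "src/util.js"],
  sourcesContent: ["export const answer = 42;\n", null],
  names: [],
  mappings: "",
};

const recovered = extractEmbeddedSources(sampleMap);
console.log([...recovered.keys()]); // lists only the files whose text was embedded
```

If a map carries no `sourcesContent`, it still reveals file names and structure, but not the code itself.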

What the Leak Reveals

Digging in, the code exposes Anthropic’s paranoia-driven engineering. “Fake tools” simulate API calls without hitting real endpoints: Claude pretends to run code or fetch data to avoid sandbox escapes. This keeps the AI in a cage, even during “agentic” coding sessions where it iterates on fixes.

Frustration regexes scan user prompts for patterns like “WTF,” “this sucks,” or repeated failures (e.g., /^(fuck|damn|shit|wtf|argh)\s+/i, which fires only when a prompt opens with one of those words followed by whitespace). Matches trigger interventions: Claude shifts to hand-holding mode, suggests breaks, or logs for review. It’s clever psychology baked into prod, but it reeks of overreach; users hate babysitters.
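For a feel of how narrow that quoted pattern is, the sketch below runs it against a few prompts. Only the regex comes from the text above; the helper `detectFrustration` and its return shape are assumptions made for illustration, not the leaked implementation.

```javascript
// The pattern quoted above: anchored at the start of the prompt, case-insensitive,
// and requiring at least one whitespace character after the matched word.
const FRUSTRATION_PATTERN = /^(fuck|damn|shit|wtf|argh)\s+/i;

// Hypothetical helper: reports whether the prompt matches and which word triggered it.
function detectFrustration(prompt) {
  const match = FRUSTRATION_PATTERN.exec(prompt);
  if (!match) {
    return { frustrated: false, trigger: null };
  }
  return { frustrated: true, trigger: match[1].toLowerCase() };
}

console.log(detectFrustration("WTF is this error")); // matches: starts with "WTF" plus a space
console.log(detectFrustration("WTF"));               // no match: nothing follows the word
console.log(detectFrustration("this sucks"));        // no match: not one of the listed words
```

A single anchored pattern like this misses most real frustration, which would explain why the leak reportedly contains several of them.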

“Undercover mode” hides safety refusals. When Claude detects jailbreak attempts, it cloaks responses in neutral language or fake errors, buying time to alert monitors. Code snippets show it phoning home to Anthropic’s telemetry with hashed user IDs and prompt vectors. Privacy advocates are fuming; this confirms always-on surveillance in “local” tools.

Other gems: Hardcoded API keys (revoked now), prompt templates with constitutional AI weights, and regexes for 47 specific “toxic” code patterns like buffer overflows or crypto key exfils. The full dump clocks 28,000 lines, per HN sleuths.

Security and Competitive Fallout

NPM leaks like this happen monthly; recall Firebase configs spilling in 2023 or Twilio’s 2021 slip. But for AI, it’s gold. OpenAI, xAI, and Cursor devs now have blueprints to copy or poke holes. Attackers could craft prompts evading those regexes, escalating from harmless pranks to real exploits.

Anthropic yanked the package within hours, pushing 1.2.4 with maps stripped. No breaches reported yet, but telemetry logs mean they know who downloaded. Expect DMCA takedowns on ccleaks.com soon.

Why this matters: AI coding agents promise 10x productivity (Claude Dev claims 4x faster debugging per benchmarks), but safety bloat adds latency. This leak shows the trade-off: 20-30% overhead from fakes and checks, per rough code analysis. Users get safer code, but at the cost of trust and speed. If you’re building with Claude Code, audit your local installs now. Switch to an open-source alternative like Continue.dev if that trade-off bothers you.
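One simple audit, for installs and for your own packages before publishing, is to check a file listing for stray source maps. The sketch below assumes you already have the list of paths (for example, from walking the installed package directory, or from the tarball contents that `npm pack --dry-run` prints); the function name and the sample paths are hypothetical.

```javascript
// Sketch: flag source map files in a list of package-relative paths.
function findSourceMaps(filePaths) {
  return filePaths.filter((p) => p.toLowerCase().endsWith(".map"));
}

// Hypothetical listing, not the real contents of any package.
const packagedFiles = [
  "package.json",
  "README.md",
  "dist/cli.js",
  "dist/cli.js.map",
  "docs/roadmap.md",
];

const leakedMaps = findSourceMaps(packagedFiles);
if (leakedMaps.length > 0) {
  console.warn("Source maps found in package:", leakedMaps);
}
```

A check like this could run in CI before every publish, so a stray map fails the build instead of reaching the registry.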

Bigger picture: NPM’s wild west registry hosts 2.5 million packages; 15% have vulns per Snyk scans. AI firms rushing browser extensions amplify risks. Demand signed artifacts and SBOMs. Anthropic’s response? Silent so far, but watch for a blog postmortem. In security terms, this rates a 6/10: sloppy, insightful, no fire yet.