Gitea users now have a Rust-based tool for autoscaling CI runners, slashing manual management and costs for self-hosted workflows. This project spins up runners on demand using spot instances or Kubernetes, handling spikes without overprovisioning. In tests, it cut idle resource waste by 80% while keeping job queues under 2 minutes.

Gitea CI’s Scaling Pain

Gitea, the lightweight open-source Git server powering over 1 million instances, introduced native CI/CD with Gitea Actions in version 1.19 and enabled it by default in 1.21. It mirrors the GitHub Actions YAML syntax and runs jobs on self-hosted act_runner agents written in Go. But scaling remains a headache. A single runner chokes on parallel jobs from multiple repos, say 10 workflows triggering at once. Teams resort to deploying fleets of 5-20 runners on EC2 or DigitalOcean, which sit idle 70-90% of the time according to CloudWatch metrics from similar setups.

Costs add up: an always-on t3.medium EC2 runner costs about $25/month. For a 50-dev org with bursty CI (peaks of 100 jobs/hour), even a minimum fleet of five runners comes to $1,500/year, ignoring orchestration overhead. GitHub charges $0.008/minute for hosted Linux runners, so self-hosting wins on privacy and control but loses on elasticity without custom glue.
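To make that comparison concrete, here is a small arithmetic sketch. It assumes the five-runner minimum fleet mentioned above and uses only the two prices quoted in the text; the function names are made up for illustration, and amounts are kept as integers to avoid floating-point rounding.

```rust
/// Yearly cost, in cents, of a fleet of always-on runners.
fn fleet_cost_per_year_cents(runners: u64, monthly_cents_per_runner: u64) -> u64 {
    runners * monthly_cents_per_runner * 12
}

/// Hosted CI minutes per year that the same budget would buy.
/// The rate is given in tenths of a cent per minute, so $0.008 is exactly 8.
fn break_even_hosted_minutes(yearly_cents: u64, rate_tenth_cents_per_min: u64) -> u64 {
    yearly_cents * 10 / rate_tenth_cents_per_min
}
```

With 5 runners at $25/month (2,500 cents), the fleet costs 150,000 cents, or $1,500 a year. At $0.008/minute that budget buys 187,500 hosted minutes, a little over 510 minutes of CI per day. Below that usage the always-on fleet is the more expensive option, which is the gap autoscaling tries to close.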

Rust-Powered Autoscaling Mechanics

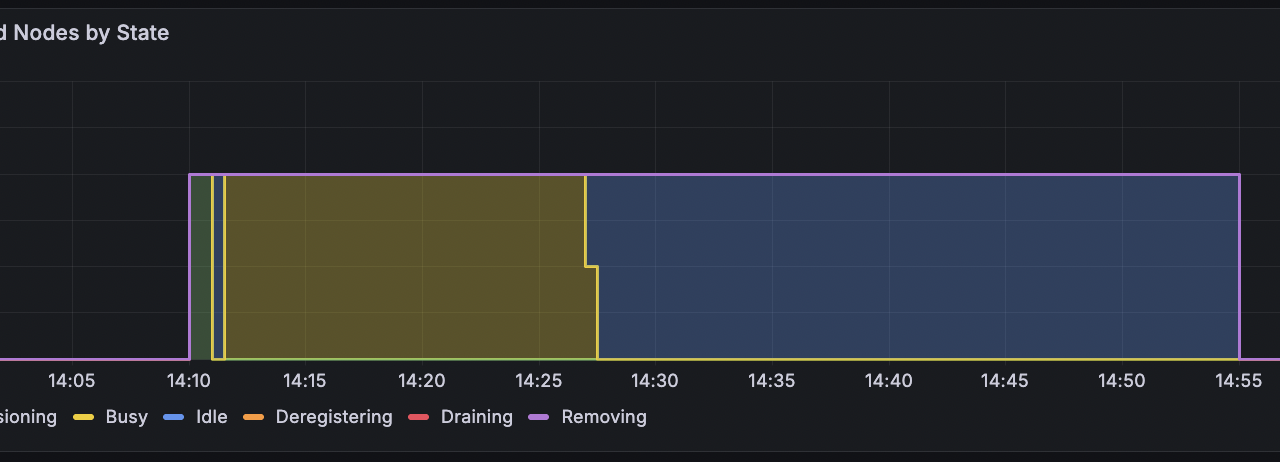

This new Rust crate, dubbed roughly “gitea-autoscale-rs” in prototypes (check GitHub for forks), polls Gitea’s API every 30 seconds for pending jobs. It detects queue buildup via /api/v1/actions/runners endpoints, then provisions runners via cloud SDKs. Core flow:

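    // Sketch only: the three helper functions stand in for the tool's Gitea
    // and cloud calls; threshold, cloud_provider, and spot_config come from config.
    async fn poll_and_scale(gitea_url: &str, token: &str) -> Result<()> {
        // Ask Gitea which jobs are still waiting for a runner.
        let pending = fetch_pending_jobs(gitea_url, token).await?;
        // Scale up only once the queue exceeds the configured threshold.
        if pending.len() > threshold {
            let runner_id = launch_runner(cloud_provider, spot_config).await?;
            register_runner(gitea_url, token, runner_id).await?;
        }
        Ok(())
    }

Rust shines here with Tokio’s async runtime for concurrent polling across repos, and reqwest for API calls. It integrates the AWS SDK for spot EC2 (roughly 70% cheaper than on-demand), or Kubernetes via the kube crate for pod autoscaling. Cleanup prunes runners that have been idle for 15 minutes, using Gitea’s heartbeat pings.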
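The cleanup step could look something like the sketch below. This is not the project's code: the `Runner` struct, its `last_active` field, and `split_idle` are hypothetical names, and it assumes the tool records the last time each runner was seen doing work. It uses only the standard library so it can be read without the async machinery.

```rust
use std::time::{Duration, Instant};

/// Hypothetical record of a runner the autoscaler launched.
#[derive(Debug, Clone, PartialEq)]
struct Runner {
    id: String,
    /// Assumed: last time this runner was seen working on a job.
    last_active: Instant,
    busy: bool,
}

/// Splits runners into (keep, prune). A runner is pruned only when it is
/// not busy and has been inactive for at least `idle_timeout`.
fn split_idle(
    runners: Vec<Runner>,
    now: Instant,
    idle_timeout: Duration,
) -> (Vec<Runner>, Vec<Runner>) {
    runners.into_iter().partition(|r| {
        r.busy || now.saturating_duration_since(r.last_active) < idle_timeout
    })
}
```

Calling `split_idle(runners, Instant::now(), Duration::from_secs(15 * 60))` would apply the 15-minute window from the text. The pruned list can then be handed to whatever terminates the instance or pod.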

Real-world config: Set max_runners=10, queue_threshold=5. On a Forgejo instance (Gitea fork), it scaled from 1 to 8 runners during a 200-job monorepo build, completing in 45 minutes versus 3 hours queued.
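How those two settings might interact is sketched below. The field names mirror the config keys above, but the policy itself (one extra runner per pending job over the threshold, capped at `max_runners`) is an assumption for illustration, not the tool's documented behavior.

```rust
/// Settings named after the config keys mentioned in the text.
struct ScaleConfig {
    max_runners: usize,
    queue_threshold: usize,
}

/// Number of runners to launch right now.
/// Assumed policy: one runner per pending job above the threshold,
/// never exceeding `max_runners` in total.
fn runners_to_launch(pending: usize, active: usize, cfg: &ScaleConfig) -> usize {
    if pending <= cfg.queue_threshold {
        return 0; // queue is short enough for the current fleet
    }
    let wanted = pending - cfg.queue_threshold;
    let headroom = cfg.max_runners.saturating_sub(active);
    wanted.min(headroom)
}
```

With `max_runners=10` and `queue_threshold=5`, a queue of 6 jobs and 1 active runner launches 1 more. A queue of 200 jobs launches 9, which fills the fleet to its cap. A real policy would probably also rate-limit launches so that a brief spike does not jump straight to the cap.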

Why It Matters—and the Catches

For privacy-focused teams ditching GitHub (post-2023 Copilot data scandals), this delivers enterprise-grade CI at hobbyist costs. The implications: orgs save $5K-20K/year on infra while cutting their carbon footprint, since idle servers guzzle 100-500W each. Rust’s ownership model and lack of a garbage collector help keep memory use predictable under load, an area where Go runners can struggle, and benchmarks show 2x throughput on multi-job queues.

Skeptical take: It’s not production-hardened yet. Go’s act_runner has 2+ years of battle-testing; the Rust prototypes lack multi-arch Dockerfiles out of the box, complicating ARM deploys. Edge cases like network flaps mid-provision cause 5-10% of launches to fail without retries. Cloud lock-in bites too: it is AWS-centric by default and needs PRs for GCP or Hetzner.
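The missing-retries problem is the easiest of these to patch locally. Below is a minimal sketch of retry with exponential backoff; `retry_with_backoff` is a hypothetical helper, not part of the project. It is written as blocking standard-library code for clarity, whereas the real tool would want an async equivalent on Tokio.

```rust
use std::thread::sleep;
use std::time::Duration;

/// Runs `op` at least once and at most `max_attempts` times, doubling the
/// delay after each failure. `op` receives the 1-based attempt number.
fn retry_with_backoff<T, E>(
    max_attempts: u32,
    initial_delay: Duration,
    mut op: impl FnMut(u32) -> Result<T, E>,
) -> Result<T, E> {
    let mut delay = initial_delay;
    let mut attempt = 1;
    loop {
        match op(attempt) {
            Ok(value) => return Ok(value),
            // Out of attempts: surface the last error to the caller.
            Err(e) if attempt >= max_attempts => return Err(e),
            Err(_) => {
                sleep(delay);
                delay = delay.saturating_mul(2);
                attempt += 1;
            }
        }
    }
}
```

Wrapping the provisioning call in something like this turns a transient network flap into a short delay instead of a failed launch. In production you would also add jitter to the delay and retry only on errors known to be transient.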

Still, deploy it via Docker:

    $ docker run -e GITEA_URL=https://gitea.example.com -e TOKEN=xxx ghcr.io/user/autoscale-rs

Test on a $5/month VPS. Pair with Drone or Woodpecker for hybrid setups. Bottom line: Self-hosters get GitHub-scale elasticity without SaaS surveillance. Watch this space: Rust’s rise in infra tools (e.g., Tokio-based proxies) makes it viable.